AWS Announces General Availability of Anthropic’s Claude Opus 4.7 Model in Amazon Bedrock, Advancing Enterprise AI Capabilities

Amazon Web Services (AWS) today announced the general availability of Anthropic’s Claude Opus 4.7 model within Amazon Bedrock, marking a significant advancement in generative AI offerings for enterprise customers. This latest iteration of Anthropic’s most intelligent model is engineered to deliver enhanced performance across critical enterprise workflows, including sophisticated coding tasks, long-running agentic applications, and demanding professional knowledge work. The integration of Claude Opus 4.7 on Amazon Bedrock underscores AWS’s commitment to providing a secure, scalable, and fully managed service for deploying leading foundation models, enabling businesses to innovate rapidly and responsibly.

Introduction to Claude Opus 4.7: A Leap in AI Reasoning and Performance

Claude Opus 4.7 represents a substantial upgrade in Anthropic’s suite of large language models, specifically designed to tackle complex problem-solving and nuanced understanding required in enterprise environments. According to Anthropic, the model exhibits marked improvements across various production-level workflows. Its capabilities extend to more effective agentic coding, where AI agents can autonomously generate and refine code, handle intricate knowledge work requiring deep contextual understanding, and excel in visual understanding tasks. A cornerstone of Opus 4.7’s enhanced performance is its improved ability to navigate ambiguity, demonstrating more thorough problem-solving methodologies and adhering to instructions with greater precision. This makes it particularly well-suited for tasks that previously required extensive human oversight or complex multi-step processes.

The model’s launch is not merely an incremental update; it signifies a qualitative shift in how AI can support and augment human expertise. Developers and data scientists leveraging Opus 4.7 can expect a model that works more intuitively with complex prompts, delivers more robust outputs, and integrates more seamlessly into existing enterprise systems. This means faster development cycles for AI-powered applications, more accurate data analysis, and more sophisticated automated agents capable of handling a wider range of scenarios.

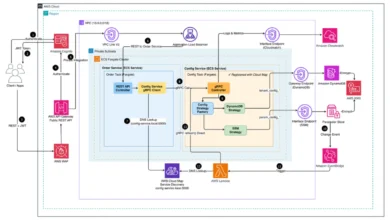

Amazon Bedrock’s Next-Generation Inference Engine: Powering Enterprise-Grade AI

A critical component enabling the advanced capabilities of Claude Opus 4.7 on AWS is Amazon Bedrock’s next-generation inference engine. This underlying infrastructure is engineered to provide enterprise-grade reliability, scalability, and security for production workloads. The new inference engine incorporates brand-new scheduling and scaling logic, a sophisticated system designed to dynamically allocate capacity to requests. This innovation significantly improves the availability of the model, particularly for steady-state workloads that require consistent performance, while simultaneously ensuring sufficient room for rapidly scaling services during peak demand. For businesses, this translates into dependable access to high-performance AI, minimizing downtime and ensuring consistent operational efficiency.

Beyond performance, data privacy and security remain paramount, especially when dealing with sensitive enterprise data. Bedrock’s new inference engine features "zero operator access," a critical security measure ensuring that customer prompts and responses are never visible to either Anthropic or AWS operators. This robust privacy framework is a cornerstone of Bedrock’s offering, addressing a primary concern for enterprises hesitant to deploy AI solutions that might expose proprietary or confidential information. By maintaining stringent data isolation, AWS empowers organizations to leverage cutting-edge AI without compromising their data governance and compliance requirements. This commitment to privacy is particularly appealing to industries like finance, healthcare, and legal, where data confidentiality is non-negotiable.

The Strategic Alliance: AWS and Anthropic in the Generative AI Landscape

The collaboration between AWS and Anthropic is a strategic alliance that has significantly shaped the generative AI landscape. AWS, as a leading cloud provider, has positioned Amazon Bedrock as a foundational service for building and scaling generative AI applications. Bedrock offers access to a diverse range of foundation models (FMs) from various AI companies, alongside AWS’s own models, providing customers with flexibility and choice. Anthropic, an AI safety and research company, has gained prominence for its commitment to developing safe and steerable AI systems, notably through its "Constitutional AI" approach, which imbues models with a set of principles to guide their behavior.

This partnership is mutually beneficial. For Anthropic, Bedrock offers a vast, secure, and highly scalable platform to distribute its advanced models to a global enterprise customer base. For AWS, integrating Anthropic’s cutting-edge models like Claude Opus 4.7 enriches its Bedrock offering, providing customers with access to some of the most capable FMs on the market. This synergy allows enterprises to leverage best-in-class AI models within a robust and familiar cloud environment, accelerating their AI adoption journeys. The continuous integration of newer, more powerful models like Opus 4.7 into Bedrock ensures that AWS remains at the forefront of the rapidly evolving generative AI market, offering competitive and innovative solutions to its clients.

A Chronology of Advancements and Market Context

The release of Claude Opus 4.7 follows a rapid succession of advancements in the generative AI field. In recent years, the industry has witnessed an explosion of innovation, driven by breakthroughs in transformer architectures and access to unprecedented computational resources. AWS announced Amazon Bedrock in April 2023 and made it generally available that September, signaling its intent to democratize access to FMs and simplify their deployment for enterprises. Since then, Bedrock has continually expanded its model catalog, integrating models from various providers, including AI21 Labs, Cohere, Meta, Stability AI, and Anthropic.

Anthropic’s Claude series has been a prominent player in this evolving landscape. Successive releases, from Claude 2 through the Claude 3 family (Haiku, Sonnet, and Opus) to Opus 4.7, have progressively demonstrated increased reasoning capabilities, longer context windows, and improved safety features. Each iteration has pushed the boundaries of what large language models can achieve, from sophisticated conversational AI to complex data analysis and content generation. The Opus 4.7 announcement reflects this rapid pace of innovation, drawing on both Anthropic’s research and AWS’s ability to quickly integrate and operationalize these advancements for its customers. The release comes amid a broader industry trend in which enterprises increasingly look to generative AI for competitive advantage, making robust and secure platforms like Bedrock essential.

Practical Implementation for Developers: Getting Started with Claude Opus 4.7

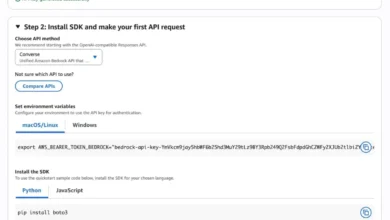

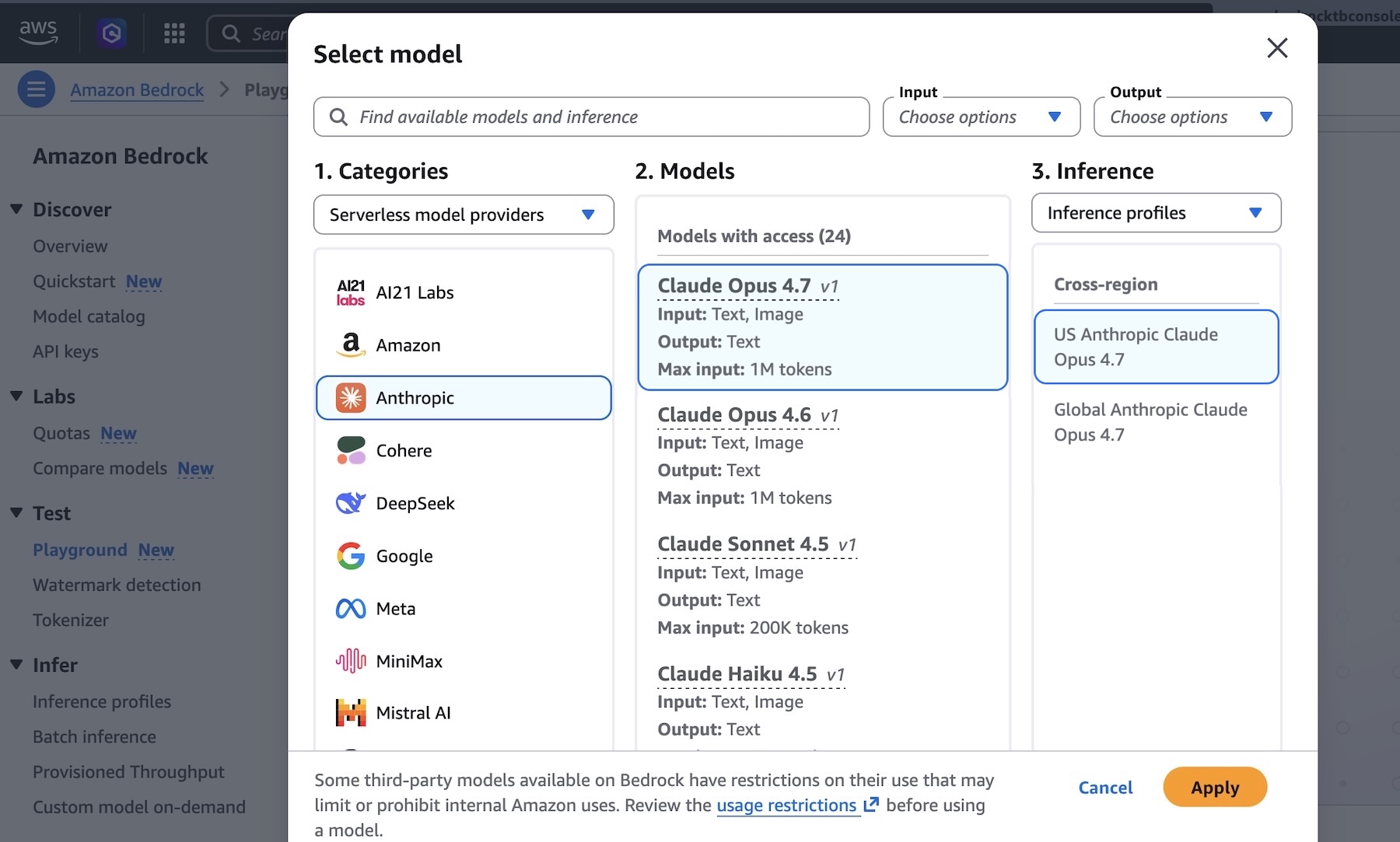

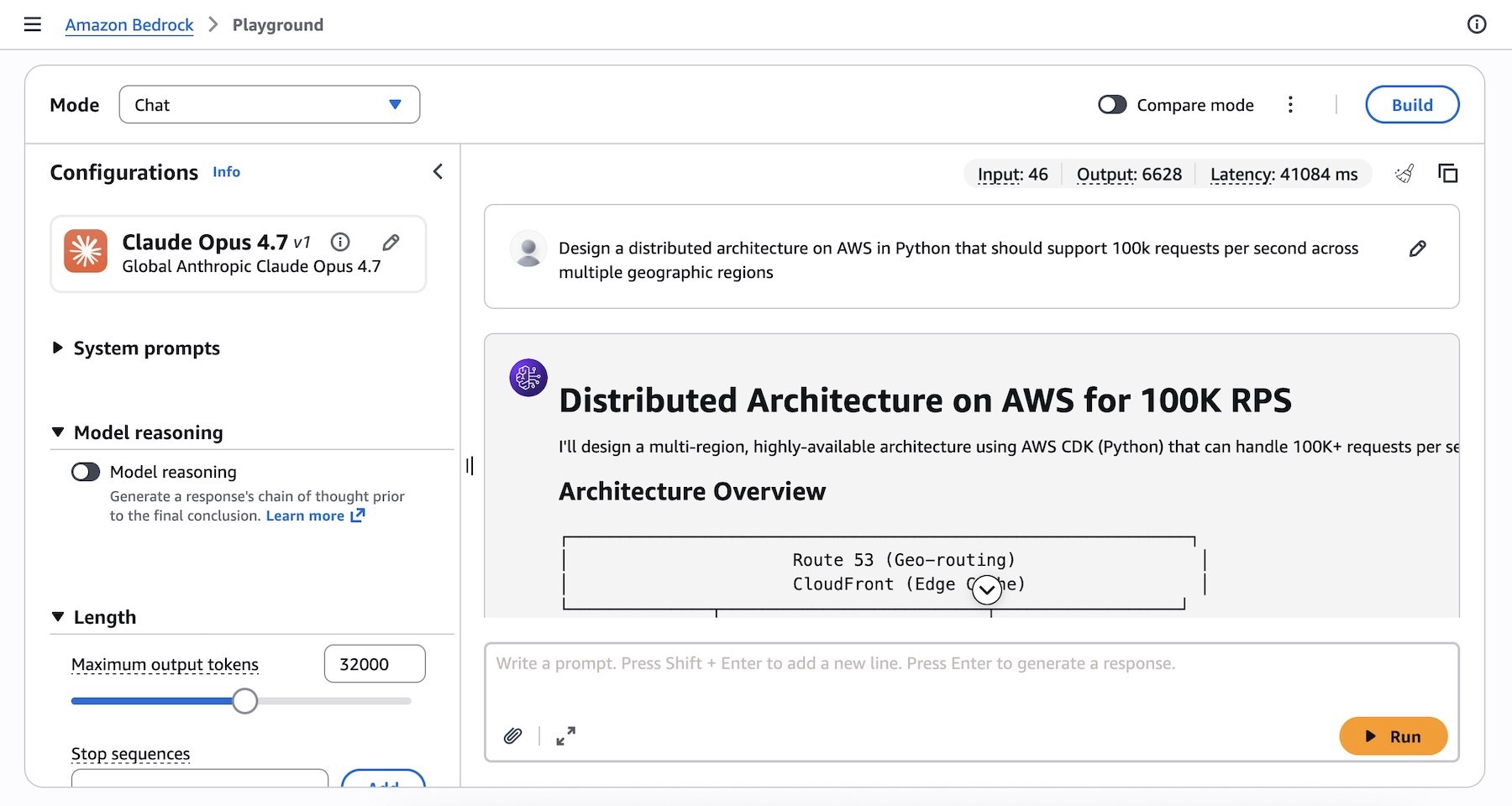

Developers and technical teams can begin experimenting with Claude Opus 4.7 immediately through the Amazon Bedrock console. The intuitive interface allows users to navigate to the "Playground" under the "Test" menu, where they can select "Claude Opus 4.7" as their model of choice. This provides a direct environment for testing complex prompts, such as designing distributed architectures or resolving intricate coding challenges.

For programmatic access, AWS offers multiple avenues. Users can leverage the Anthropic Messages API, which is supported via the bedrock-runtime endpoint through the anthropic[bedrock] SDK package. This provides a streamlined experience for integrating Claude Opus 4.7 into existing applications and workflows. An example Python snippet demonstrates how to initialize the AnthropicBedrockMantle client and create a message:

from anthropic import AnthropicBedrockMantle

# Initialize the Bedrock Mantle client (uses SigV4 auth automatically)

mantle_client = AnthropicBedrockMantle(aws_region="us-east-1")

# Create a message using the Messages API

message = mantle_client.messages.create(

model="us.anthropic.claude-opus-4-7",

max_tokens=32000,

messages=[

"role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions"

]

)

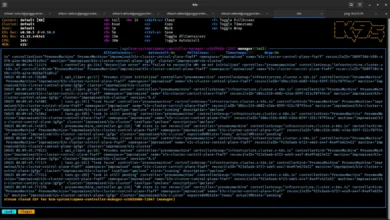

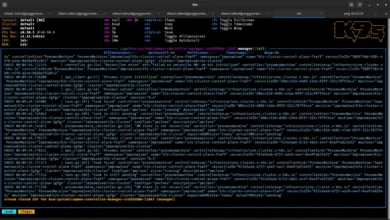

print(message.content[0].text)Alternatively, developers can interact with the model directly via the AWS Command Line Interface (AWS CLI) and the InvokeModel API, offering flexibility for scripting and automation:

aws bedrock-runtime invoke-model

--model-id us.anthropic.claude-opus-4-7

--region us-east-1

--body '"anthropic_version":"bedrock-2023-05-31", "messages": ["role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions."], "max_tokens": 32000'

--cli-binary-format raw-in-base64-out

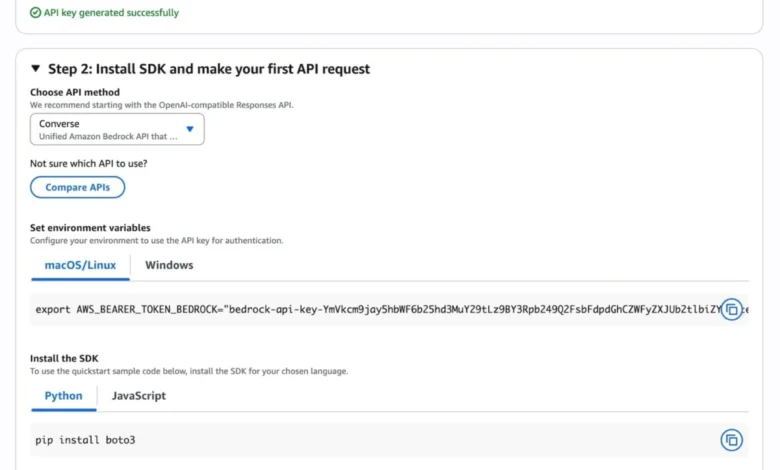

invoke-model-output.txtFor those new to Bedrock API calls, the console’s "Quickstart" option provides a guided experience, allowing users to generate short-term API keys for testing and access sample codes tailored to specific use cases. Furthermore, Claude Opus 4.7 supports "Adaptive thinking," a feature that dynamically allocates thinking token budgets based on the complexity of each request, optimizing resource usage and enhancing reasoning capabilities. Comprehensive documentation and code examples across various programming languages are available on the AWS and Anthropic platforms, facilitating rapid development and deployment.
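For teams that script in Python rather than the shell, the same InvokeModel request can be sketched with boto3. This is a minimal illustration, not an official sample: the helper names `build_request_body` and `invoke_claude` are hypothetical, and the optional `thinking` field used to request adaptive thinking is an assumption about the request shape that should be checked against the current Bedrock and Anthropic documentation before use. The model ID, Region, and `anthropic_version` value are taken from the CLI example above.

```python
import json


def build_request_body(prompt: str, max_tokens: int = 32000,
                       adaptive_thinking: bool = False) -> str:
    """Serialize a Messages-style request body for InvokeModel (hypothetical helper)."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if adaptive_thinking:
        # ASSUMPTION: the article names the feature but not the request field;
        # verify this shape in the documentation before relying on it.
        body["thinking"] = {"type": "adaptive"}
    return json.dumps(body)


def invoke_claude(prompt: str, region: str = "us-east-1",
                  model_id: str = "us.anthropic.claude-opus-4-7") -> str:
    """Call InvokeModel and return the concatenated text blocks of the reply."""
    import boto3  # imported lazily so the body builder works without boto3 installed

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(
        modelId=model_id,
        body=build_request_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    payload = json.loads(response["body"].read())
    # Keep only text blocks; other block types (if any) are skipped.
    return "".join(
        block["text"] for block in payload["content"] if block.get("type") == "text"
    )
```

Calling `invoke_claude("Design a distributed architecture on AWS ...")` requires AWS credentials with Bedrock access in the chosen Region; `build_request_body` can be exercised on its own to inspect the JSON that would be sent.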

Broader Impact and Implications for Enterprise Innovation

The introduction of Claude Opus 4.7 on Amazon Bedrock carries significant implications for enterprise innovation and the competitive landscape of generative AI. For businesses, it unlocks new possibilities across a spectrum of applications:

- Software Development: The model’s enhanced agentic coding capabilities can accelerate software development cycles, automate code generation, assist with debugging, and even refactor legacy codebases, freeing up developers to focus on higher-level architectural challenges and innovation.

- Customer Service: More intelligent and context-aware AI agents powered by Opus 4.7 can provide superior customer support, handling complex queries, personalizing interactions, and resolving issues more efficiently, leading to improved customer satisfaction.

- Content Generation and Curation: From marketing copy and technical documentation to creative writing and summarization of vast datasets, Opus 4.7 can generate high-quality, coherent content that adheres to specific brand guidelines and tones.

- Data Analysis and Research: The model’s ability to process and understand long-running tasks and complex data patterns makes it invaluable for market research, financial analysis, scientific discovery, and extracting insights from unstructured data.

- Strategic Decision Making: By processing vast amounts of information and providing nuanced summaries or analyses, Opus 4.7 can aid in strategic planning, risk assessment, and scenario modeling, helping leaders make more informed decisions.

The availability of such an advanced model within a managed service like Bedrock lowers the barrier to entry for enterprises seeking to harness the power of generative AI. Instead of investing heavily in specialized hardware, talent for model training, and complex infrastructure management, businesses can leverage AWS’s scalable and secure cloud environment to deploy and manage FMs efficiently. This democratization of advanced AI capabilities is expected to fuel a new wave of innovation across industries, enabling companies of all sizes to integrate sophisticated AI into their core operations.

Commitment to Responsible AI and Future Outlook

Anthropic’s strong emphasis on AI safety and responsible development, combined with AWS’s robust security and privacy features, ensures that Claude Opus 4.7 is not only powerful but also designed for ethical deployment. The "zero operator access" feature on Bedrock, coupled with Anthropic’s Constitutional AI principles, aims to mitigate risks associated with large language models, such as bias, misinformation, and misuse. This focus on responsible AI is crucial for fostering trust and widespread adoption of generative AI technologies in sensitive enterprise contexts.

Looking ahead, the continuous evolution of models like Claude Opus 4.7 suggests a future where AI systems become even more integrated, intelligent, and adaptable. The iterative improvements in reasoning, visual understanding, and instruction following pave the way for increasingly autonomous AI agents capable of performing multi-step tasks with minimal human intervention. As AWS continues to expand Bedrock’s regional availability (currently in US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm) Regions), more enterprises globally will gain access to these transformative tools, further accelerating the digital transformation journey across various sectors. The dynamic allocation of resources and enhanced privacy features of Bedrock’s inference engine will be critical enablers for this widespread adoption, ensuring that cutting-edge AI is both performant and secure.
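For applications that accept a Region from configuration, the four launch Regions listed above can be captured in a small guard. This is a sketch under stated assumptions: the Region codes are the standard AWS identifiers for the named Regions, the list reflects only what this announcement states and will go stale as availability expands, and `require_supported_region` is a hypothetical helper name.

```python
# Launch Regions named in the announcement, keyed by standard AWS Region code.
OPUS_4_7_LAUNCH_REGIONS = {
    "us-east-1": "US East (N. Virginia)",
    "ap-northeast-1": "Asia Pacific (Tokyo)",
    "eu-west-1": "Europe (Ireland)",
    "eu-north-1": "Europe (Stockholm)",
}


def require_supported_region(region: str) -> str:
    """Return the Region code unchanged, or raise if it is not a launch Region."""
    if region not in OPUS_4_7_LAUNCH_REGIONS:
        supported = ", ".join(sorted(OPUS_4_7_LAUNCH_REGIONS))
        raise ValueError(
            f"{region!r} is not in the announced launch Regions: {supported}"
        )
    return region
```

Failing fast on an unsupported Region gives a clearer error than a rejected API call later in the request path.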

Enterprises are encouraged to explore Claude Opus 4.7 in the Amazon Bedrock console today and provide feedback through AWS re:Post for Amazon Bedrock or their usual AWS Support contacts, contributing to the ongoing refinement and expansion of these vital AI services.