Amazon Web Services Unveils S3 Files, Bridging Object and File Storage for Enhanced Cloud Workloads

Amazon Web Services (AWS) has announced the launch of Amazon S3 Files, a groundbreaking new file system designed to seamlessly integrate any AWS compute resource with Amazon Simple Storage Service (Amazon S3). This innovation marks a significant evolution in cloud storage, effectively dissolving the long-standing architectural trade-off between the scalability, durability, and cost-efficiency of object storage and the interactive, hierarchical capabilities of traditional file systems. The introduction of S3 Files is poised to simplify cloud architectures, unlock new possibilities for data-intensive applications, and solidify Amazon S3’s role as a universal data hub across diverse computing environments.

The Historical Divide: Object Storage vs. File Systems

For over a decade, cloud architects and developers have grappled with the fundamental distinctions between object storage and file systems. Amazon S3, launched in 2006, revolutionized cloud storage by offering unparalleled scalability, 11 nines of durability, and cost-effectiveness for vast amounts of unstructured data. Its object-based nature, however, meant data was treated as immutable objects, akin to books in a library where an entire "book" (object) must be replaced for any modification, rather than editing individual "pages" (bytes). This model excelled for data lakes, backups, archives, and web content delivery, but posed challenges for applications requiring byte-range writes, shared access, or POSIX-compliant file system semantics.
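The "book versus pages" distinction can be made concrete with a small sketch. It is an illustration only: the dictionary below is a toy stand-in for an object store (not the S3 API), and the names `object_style_update` and `file_style_update` are hypothetical. It shows that changing four bytes in a one-megabyte object means rewriting the whole object, while a file system can write just the four bytes.

```python
import os
import tempfile

# Toy stand-in for an object store: each key maps to one immutable blob.
# This is NOT the S3 API; it only mimics whole-object replacement.
object_store: dict[str, bytes] = {}


def object_style_update(key: str, offset: int, patch: bytes) -> int:
    """Change a few bytes by rewriting the whole object; returns bytes written."""
    old = object_store[key]
    new = old[:offset] + patch + old[offset + len(patch):]
    object_store[key] = new  # the entire object is replaced
    return len(new)


def file_style_update(path: str, offset: int, patch: bytes) -> int:
    """Change a few bytes in place; returns bytes written."""
    with open(path, "r+b") as f:
        f.seek(offset)
        return f.write(patch)  # only the patched range is written


payload = b"A" * 1_000_000
object_store["report.bin"] = payload

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "report.bin")
    with open(path, "wb") as f:
        f.write(payload)

    object_bytes = object_style_update("report.bin", 10, b"ZZZZ")
    file_bytes = file_style_update(path, 10, b"ZZZZ")

    with open(path, "rb") as f:
        file_contents = f.read()

print(object_bytes, file_bytes)  # 1000000 4
```

Both approaches end with identical contents; the difference is how much data has to be written to get there.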

Conversely, traditional file systems, represented in AWS by services like Amazon Elastic File System (EFS) and Amazon FSx, provide the familiar hierarchical structure, metadata richness, and concurrent read/write access that many legacy and modern applications demand. These systems are crucial for workloads that need to frequently modify small parts of files, share data across multiple compute instances, or rely on specific file system protocols. The necessity of choosing between these two distinct storage paradigms often led to complex data synchronization strategies, data duplication, or architectural compromises that impacted performance, cost, or operational simplicity. Developers frequently found themselves building intricate pipelines to move data between S3 and file systems, incurring additional latency, cost, and management overhead. This architectural friction has been a persistent challenge for organizations striving to leverage the best of both worlds.

S3 Files: A Paradigm Shift in Cloud Storage

With S3 Files, AWS says Amazon S3 becomes the first and only cloud object store to offer fully featured, high-performance file system access directly to the data it holds. S3 buckets can now be exposed as native file systems, allowing AWS compute instances, containers, and functions to interact with S3 data using standard file system commands. Developers no longer have to choose between the inherent benefits of S3 and the interactive capabilities of a file system: S3 data can be accessed directly from any Amazon Elastic Compute Cloud (EC2) instance, from containers running on Amazon Elastic Container Service (ECS) or Amazon Elastic Kubernetes Service (EKS), or from AWS Lambda functions.

The underlying technology leverages the Network File System (NFS) v4.1+ protocol, enabling comprehensive file system operations such as creating, reading, updating, and deleting files. This robust support for POSIX-compliant semantics ensures broad compatibility with existing applications and tools that expect a file system interface. The significance here is profound: applications previously constrained by S3’s object model can now seamlessly operate on S3 data without modification, dramatically expanding S3’s utility across enterprise workloads.

Technical Architecture and Performance Optimization

At its core, S3 Files intelligently manages data movement and access to optimize performance and cost. When users interact with specific files and directories through the S3 file system, the associated file metadata and contents are dynamically placed onto a high-performance storage tier. This tier, powered by Amazon Elastic File System (EFS) technology, delivers low latencies, typically around 1 millisecond, for active data. As a result, frequently accessed or "hot" data benefits from the speed and responsiveness expected of a local file system.

For files not requiring such low-latency access, particularly those involved in large sequential reads, S3 Files automatically serves these directly from Amazon S3. This strategy maximizes throughput by leveraging S3’s immense bandwidth capabilities for bulk data transfers, avoiding unnecessary caching on the high-performance tier. Furthermore, for byte-range reads, only the requested bytes are transferred, minimizing data movement and associated costs. This intelligent tiering and data management system is a key differentiator, allowing organizations to optimize for both performance and cost across diverse access patterns.
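To see why byte-range reads reduce data movement, consider the arithmetic behind a ranged request. Amazon S3's GetObject operation accepts a standard HTTP Range header; whether S3 Files issues requests in exactly this form internally is not stated in the announcement, so the sketch below is an assumption-laden illustration. The helper names `range_header` and `bytes_saved` are hypothetical.

```python
def range_header(offset: int, length: int) -> str:
    """Build an HTTP Range header value for `length` bytes starting at `offset`."""
    if offset < 0 or length <= 0:
        raise ValueError("offset must be >= 0 and length must be > 0")
    # HTTP byte ranges are inclusive on both ends, hence the "- 1".
    return f"bytes={offset}-{offset + length - 1}"


def bytes_saved(object_size: int, offset: int, length: int) -> int:
    """Bytes NOT transferred when reading a range instead of the whole object."""
    end = min(offset + length, object_size)  # a range cannot run past the object
    transferred = max(end - offset, 0)
    return object_size - transferred


GIB = 1024 ** 3
header = range_header(offset=4096, length=1024)
saved = bytes_saved(object_size=10 * GIB, offset=4096, length=1024)
print(header)
print(saved)
```

Reading 1 KiB out of a 10 GiB object this way moves 1,024 bytes instead of the full object, which is the saving the paragraph above describes.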

The system also incorporates intelligent pre-fetching capabilities, anticipating data access needs to further enhance performance. Users retain fine-grained control over what data is stored on the high-performance tier, with options to load full file data or metadata only, allowing for tailored optimization based on specific workload requirements. This level of control ensures that resources are allocated efficiently, aligning performance characteristics with application demands.

Crucially, S3 Files supports concurrent access from multiple compute resources, maintaining NFS close-to-open consistency. This feature is vital for interactive, shared workloads where multiple users or processes might be modifying data simultaneously. Use cases range from collaborative agentic AI systems that share file-based tools and Python libraries to machine learning training pipelines that process and mutate large datasets in concert. The ability to share data across compute clusters without duplication is a significant cost and operational advantage, eliminating the need for complex data synchronization mechanisms that were previously required.
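Close-to-open consistency has a practical consequence for shared workloads: a reader is guaranteed to see a writer's changes only if it opens the file after the writer has closed it. The following minimal sketch shows that ordering. It runs against a local temporary directory as a stand-in for a shared mount, so it demonstrates the pattern rather than NFS behavior itself; `publish` and `consume` are hypothetical names.

```python
import os
import tempfile


def publish(path: str, text: str) -> None:
    """Writer side: finish writing, then close the file."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(text)
    # Leaving the `with` block closes the file. Under close-to-open
    # consistency, that close is what makes the write visible to clients
    # that open the file afterwards.


def consume(path: str) -> str:
    """Reader side: open only after the writer has closed."""
    with open(path, "r", encoding="utf-8") as f:
        return f.read()


with tempfile.TemporaryDirectory() as shared_dir:  # stand-in for a shared mount
    path = os.path.join(shared_dir, "features.csv")
    publish(path, "id,score\n1,0.9\n")
    result = consume(path)

print(result)
```

A reader that keeps a file open while another client writes to it gets no such guarantee, so pipelines that hand files between workers typically signal completion only after the writer has closed the file.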

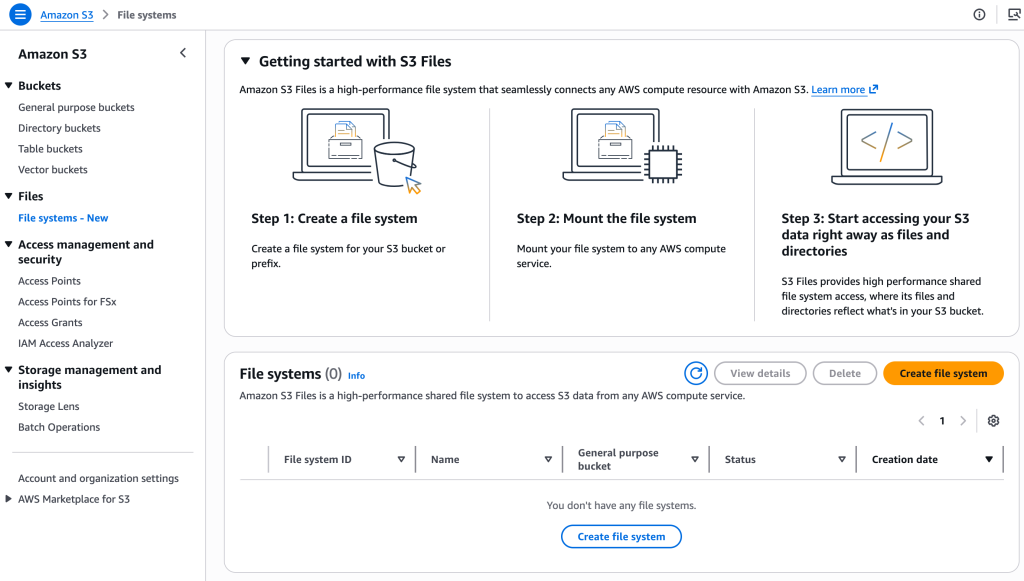

Practical Implementation and Workflow

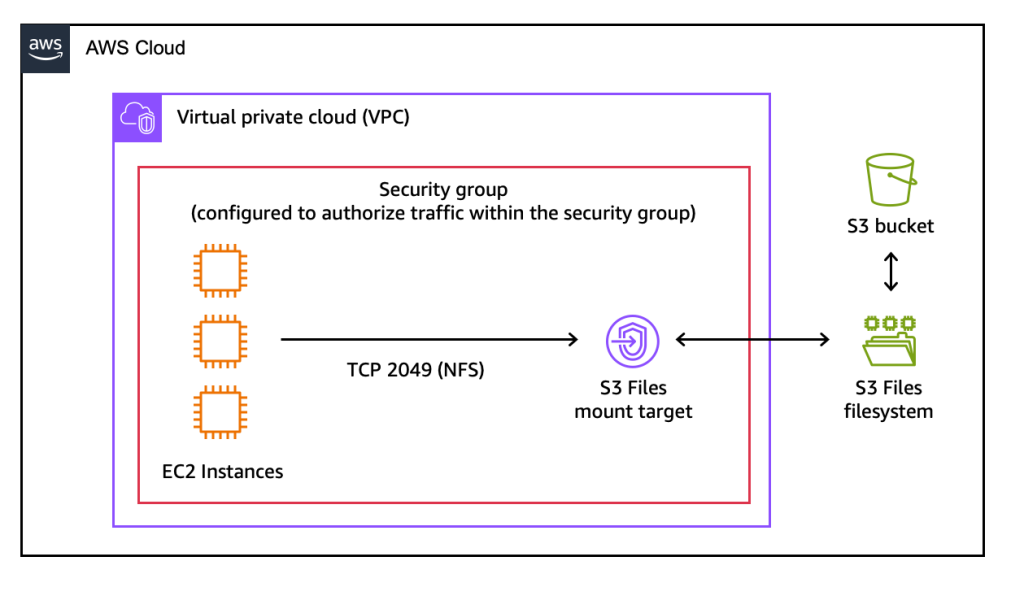

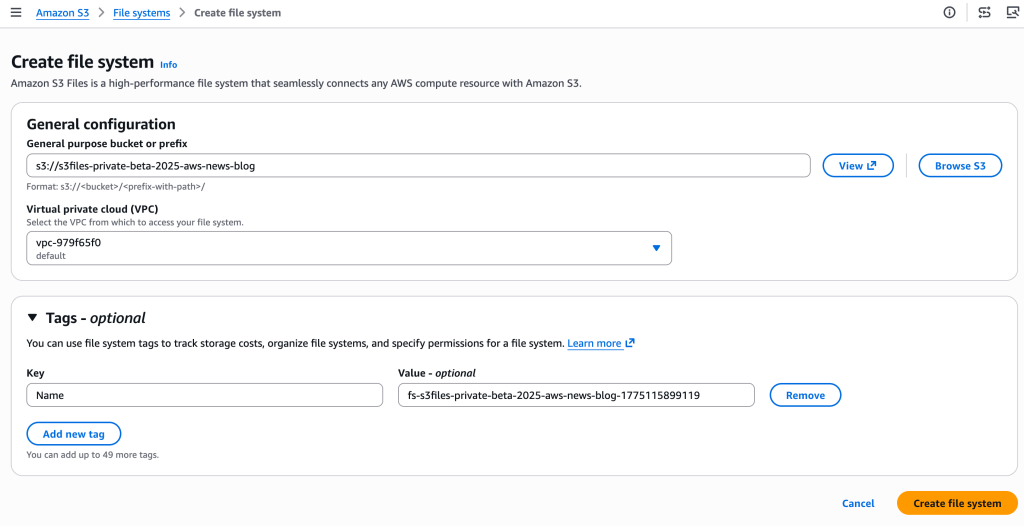

Getting started with Amazon S3 Files is designed to be straightforward. Users can create an S3 file system through the AWS Management Console, AWS Command Line Interface (CLI), or infrastructure as code (IaC) tools. The process involves specifying an existing general-purpose S3 bucket to be exposed as a file system. Upon creation, the service automatically provisions mount targets – network endpoints within the user’s Virtual Private Cloud (VPC) – that allow EC2 instances to connect to the S3 file system.

Once connected, an EC2 instance can mount the S3 file system using standard Linux commands, treating the S3 bucket like any other local file system directory. For example, after creating a directory /home/ec2-user/s3files, a simple sudo mount -t s3files <mount_target_ID>:/ /home/ec2-user/s3files command makes the S3 bucket’s contents accessible. Any standard file operation, such as echo "Hello S3 Files" > s3files/hello.txt, will create a file that is automatically synchronized back to the S3 bucket. Updates made through the file system are reflected as new objects or new versions of existing objects in the S3 bucket within minutes. Conversely, changes made directly to objects in the S3 bucket typically become visible in the mounted file system within seconds, though this can sometimes take a minute or longer. The two access paths are therefore eventually consistent with each other rather than instantly synchronized.
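Because synchronization between the file system and the bucket takes seconds to minutes, a script that writes through the mount and then reads through the S3 API may need to wait for the object to appear. One way to handle this is a generic polling helper, sketched below. The helper `wait_until` and the stand-in check `fake_object_exists` are hypothetical; a real check would query the bucket and is deliberately left out so the example stays self-contained.

```python
import time
from typing import Callable


def wait_until(check: Callable[[], bool], timeout_s: float, interval_s: float = 1.0) -> bool:
    """Call `check` repeatedly until it returns True or `timeout_s` elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        if check():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)


# Stand-in for "has the object shown up in the bucket yet?".
# It reports success on the third call to simulate a sync delay.
attempts = {"n": 0}


def fake_object_exists() -> bool:
    attempts["n"] += 1
    return attempts["n"] >= 3


synced = wait_until(fake_object_exists, timeout_s=2.0, interval_s=0.01)
print(synced, attempts["n"])
```

In a real deployment the check could wrap an S3 HeadObject call for the expected key, with a timeout of several minutes to match the synchronization window described above.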

Strategic Positioning within the AWS Storage Ecosystem

The introduction of S3 Files naturally raises questions about its relationship with other AWS file services like Amazon EFS and Amazon FSx. AWS emphasizes that S3 Files is not a replacement but a complementary offering, designed to address a specific niche: providing interactive, shared access to data that already resides in Amazon S3 through a high-performance file system interface.

- Amazon S3 Files: Best suited for workloads where multiple compute resources (production applications, AI agents, ML training pipelines) need to collaboratively read, write, and mutate data stored directly in S3. It provides shared access across clusters without data duplication, latency of around 1 millisecond for active data, and automatic synchronization with S3. Its primary value proposition is making S3 a universal data lake accessible via both object and file APIs.

- Amazon FSx: This family of services is ideal for workloads migrating from on-premises Network Attached Storage (NAS) environments, offering familiar features and compatibility with specific file systems. This includes high-performance computing (HPC) and GPU cluster storage with Amazon FSx for Lustre, or applications requiring the specific capabilities of Amazon FSx for NetApp ONTAP, Amazon FSx for OpenZFS, or Amazon FSx for Windows File Server. FSx services are chosen when applications depend strongly on particular file system protocols, features, or performance characteristics not offered by general-purpose file systems, or when they need to integrate seamlessly with existing enterprise environments.

- Amazon EFS: While S3 Files leverages EFS technology for its high-performance tier, EFS itself remains a general-purpose, scalable, elastic NFS file system designed primarily for Linux-based workloads that require shared file storage. EFS is suitable for a wide range of applications that need a fully managed, highly available, and durable file system without an S3 bucket as the primary data repository.

In essence, AWS continues to provide a comprehensive suite of storage options, each optimized for different use cases. S3 Files fills a crucial gap, empowering developers to leverage S3’s unmatched scale and durability while gaining the flexibility and performance of a file system for interactive and collaborative workloads.

Pricing and Global Availability

Amazon S3 Files is immediately available in all commercial AWS Regions, underscoring its broad applicability and strategic importance. The pricing model is designed to be transparent and aligns with typical cloud consumption patterns. Customers pay for three things: the portion of data stored in their S3 file system (the high-performance tier), small-file reads and all write operations to the file system, and the S3 requests generated during data synchronization between the file system and the underlying S3 bucket. Detailed pricing information is available on the Amazon S3 pricing page, allowing organizations to estimate costs based on their specific usage patterns and data volumes.
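The three billing components can be combined into a rough estimate along the following lines. This is a sketch only: the announcement gives no rates or billing units, so every quantity and rate below is a made-up placeholder chosen for easy arithmetic, not an AWS price, and `LineItem` and `estimate` are hypothetical names. Real figures should come from the Amazon S3 pricing page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    name: str
    quantity: float   # in whatever unit the price list uses for this item
    unit_rate: float  # price per unit; PLACEHOLDER values below, not AWS prices


def estimate(items: list[LineItem]) -> dict[str, float]:
    """Return the cost of each line item plus a 'total' entry."""
    breakdown = {item.name: item.quantity * item.unit_rate for item in items}
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# All numbers are invented placeholders for illustration only.
bill = estimate([
    LineItem("high_performance_storage", quantity=100, unit_rate=0.5),
    LineItem("small_reads_and_writes", quantity=20, unit_rate=0.25),
    LineItem("s3_sync_requests", quantity=8, unit_rate=0.125),
])
print(bill)
```

Keeping the three components as separate line items makes it easier to see which access pattern drives the bill, for example a write-heavy workload versus a large hot working set.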

Broader Implications and Future Outlook

The launch of Amazon S3 Files represents a significant step forward in cloud storage, with profound implications for cloud architecture and application development.

- Simplifying Cloud Architectures: By eliminating the need for separate data stores and complex synchronization logic, S3 Files helps reduce architectural complexity and operational overhead. Data silos are naturally broken down as S3 becomes a central, universally accessible repository.

- Empowering AI and Machine Learning: The ability to access S3 data directly as a file system is a game-changer for AI and ML workloads. Agentic AI systems, which often rely on file-based Python libraries, shell scripts, and collaborative tools, can now operate seamlessly on vast datasets stored in S3. ML training pipelines can process and mutate data more efficiently, reducing the time and effort required for data preparation and feature engineering.

- Unlocking New Use Cases for S3: S3 Files expands the range of applications that can natively leverage S3. Production tools, data analytics platforms, and scientific computing workloads that traditionally required file system interfaces can now directly tap into S3’s scale and durability without costly re-architecting.

- Enhanced Developer Productivity: Developers can now use familiar file system commands and existing toolchains to interact with S3 data, significantly reducing the learning curve and accelerating development cycles for new and existing applications.

- Economic Advantages: By leveraging S3’s cost-effectiveness for long-term storage and dynamically caching active data on a high-performance tier, organizations can achieve an optimal balance of performance and cost, avoiding the over-provisioning often associated with dedicated file systems for all data.

Industry analysts are likely to view S3 Files as a pivotal development that further solidifies AWS’s leadership in cloud storage. This move addresses a persistent pain point for customers, simplifying the management of diverse data types and enabling more sophisticated workloads directly on S3. It underscores a trend towards converged storage solutions that offer the best attributes of different paradigms without requiring complex compromises. As organizations continue to generate and rely on ever-increasing volumes of data, the ability to access this data with unprecedented flexibility and performance will be critical for innovation and competitive advantage. Amazon S3, once primarily known for object storage, is now firmly positioned as the foundational data layer for virtually any cloud workload.