Declarative Multi-Cluster Management: K3s on Proxmox Achieves Breakthrough with k0rdent’s BYOT Approach

April 17, 2026 – Improwised Tech, in collaboration with a CNCF Ambassador, has demonstrated a declarative method for deploying and managing lightweight Kubernetes (K3s) clusters on on-premise Proxmox infrastructure. The approach uses the multi-cluster management capabilities of k0rdent and its "Bring Your Own Template" (BYOT) methodology to address long-standing challenges of running Kubernetes in self-hosted environments, with a focus on repeatability, scalability, and operational cleanliness. The announcement comes as Kubernetes approaches its 12th anniversary, having grown from a Google side project into the bedrock of modern infrastructure that now extends into hybrid and edge computing landscapes.

The Enduring Challenge of On-Premise Kubernetes

For over a decade, Kubernetes has revolutionized how applications are deployed and managed, evolving into the de facto operating system for cloud-native workloads. Its widespread adoption spans multi-cloud, hybrid, and edge environments, powering everything from mainframes to GPU-accelerated systems. However, while public cloud providers abstract much of the underlying infrastructure complexity, running Kubernetes on-premise has historically presented a unique set of hurdles. Organizations opting for self-hosted solutions, often due to data sovereignty, cost considerations, or specific performance requirements, frequently encounter significant operational friction.

Common pain points include:

- Manual Provisioning: The initial setup of virtual machines, networking, and operating system configurations often involves tedious manual steps or complex, brittle scripts.

- Lack of Repeatability: Achieving identical cluster configurations across multiple on-premise deployments or environments is challenging, leading to "snowflake" clusters that are difficult to troubleshoot and maintain.

- Scaling Difficulties: Expanding or contracting an on-premise cluster typically requires significant manual intervention, making elastic scaling cumbersome and slow.

- Configuration Drift: Maintaining a consistent desired state over time is a constant battle, with manual changes often leading to inconsistencies and unexpected behavior.

- Complex Upgrades: Kubernetes upgrades, already intricate, become even more daunting when tied to custom, hand-crafted infrastructure setups.

- Reproducibility for Disaster Recovery: Rebuilding a cluster from scratch in the event of a disaster can be a lengthy and error-prone process without a fully declarative approach.

These challenges have often led to on-premise Kubernetes deployments being perceived as high-effort, high-maintenance endeavors, contrasting sharply with the streamlined experience offered by managed cloud services. The new solution presented by Shivani Rathod of Improwised Tech and Prithvi Raj, a CNCF Ambassador, directly confronts these issues.

k0rdent and K3s: A Synergistic Solution for On-Premise Excellence

The core of this breakthrough lies in combining k0rdent for declarative multi-cluster management with K3s for lightweight Kubernetes deployment on Proxmox. The announcement does not detail k0rdent's internal architecture, but k0rdent builds on the Cluster API (CAPI) project. CAPI provides declarative APIs for creating, configuring, and managing Kubernetes clusters, extending the Kubernetes API to manage infrastructure. By abstracting the underlying infrastructure into a set of Kubernetes-native objects, CAPI enables a consistent way to provision and manage clusters across diverse environments, be it public clouds, private clouds, or bare metal. k0rdent follows the same provider-based model, so infrastructure, control plane, and bootstrap providers can be swapped out as needed.
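To make the provider model concrete, the sketch below builds a Cluster API `Cluster` object as a Python dictionary. The `infrastructureRef` and `controlPlaneRef` fields belong to the upstream Cluster API v1beta1 schema. The kinds and API versions passed in at the bottom (`ProxmoxCluster`, `KThreesControlPlane`) are assumptions chosen for illustration and are not taken from the announcement.

```python
import json


def object_ref(api_version, kind, name):
    """Reference to another Kubernetes object, as used by Cluster API v1beta1."""
    return {"apiVersion": api_version, "kind": kind, "name": name}


def capi_cluster(name, infra, control_plane):
    """Build a Cluster API v1beta1 Cluster that delegates to two providers.

    `infra` and `control_plane` are (api_version, kind) tuples. Swapping a
    provider means changing these references, not the shape of the Cluster.
    """
    return {
        "apiVersion": "cluster.x-k8s.io/v1beta1",
        "kind": "Cluster",
        "metadata": {"name": name},
        "spec": {
            "infrastructureRef": object_ref(infra[0], infra[1], name),
            "controlPlaneRef": object_ref(
                control_plane[0], control_plane[1], f"{name}-control-plane"
            ),
        },
    }


if __name__ == "__main__":
    # Kinds and versions below are illustrative assumptions, not from the article.
    cluster = capi_cluster(
        "onprem-demo",
        infra=("infrastructure.cluster.x-k8s.io/v1alpha1", "ProxmoxCluster"),
        control_plane=("controlplane.cluster.x-k8s.io/v1beta2", "KThreesControlPlane"),
    )
    print(json.dumps(cluster, indent=2))
```

Moving the same cluster to a different infrastructure would, in this sketch, only change the `infra` tuple.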

K3s, developed by Rancher Labs (now SUSE), is a fully compliant Kubernetes distribution optimized for resource-constrained environments. Its minimal footprint, rapid installation time, and reduced dependencies make it an ideal choice for edge computing, IoT, and, crucially, on-premise deployments where resource efficiency is paramount. Unlike a standard Kubernetes installation, K3s bundles critical components into a single binary, simplifying installation and ongoing management. Its design philosophy matches the team's requirement for a solution that is "friendly to an on-prem setup."

"Our goal was to eliminate the manual toil and inconsistencies that plague on-premise Kubernetes deployments," stated Shivani Rathod, reflecting on the project’s inception. "We sought a solution that was declarative, repeatable, and clean, while still being highly adaptable to self-hosted environments like Proxmox. k0rdent, with its emphasis on reconciliation and its BYOT flexibility, provided the perfect framework."

The "Bring Your Own Template" (BYOT) Paradigm: Democratizing Infrastructure Provisioning

A cornerstone of this deployment method is k0rdent’s "Bring Your Own Template" (BYOT) approach, which is what makes the Proxmox integration possible. Proxmox, an open-source server virtualization management solution, is a popular choice for self-hosting due to its robust features and cost-effectiveness. However, k0rdent, like many cluster management tools, does not ship with a native Proxmox infrastructure provider out of the box. The BYOT model lets users bridge this gap by creating custom infrastructure providers tailored to their specific environments.

Instead of dynamically building VM images on every provision, the Improwised Tech team opted to use existing Proxmox VM templates. These pre-configured templates already included essential elements like cloud-init enablement, SSH access configuration, and base operating system packages. This strategic decision yielded significant benefits:

- Speed: Provisioning new VMs became dramatically faster, as only cloning and minimal customization were required, rather than full image builds.

- Consistency: Leveraging standardized templates ensured that every provisioned VM started from a known, stable base, reducing configuration drift at the infrastructure layer.

- Reduced Complexity: The provisioning process was simplified, as the custom Helm chart for Proxmox only needed to handle VM instantiation and configuration, not image creation.

- Flexibility: It allowed for fine-grained control over the base VM image, enabling specific security hardening, pre-installed tools, or custom configurations to be baked in.

This BYOT approach not only filled a critical gap for Proxmox users but also highlighted a broader principle: if a specific infrastructure isn’t natively supported, the framework allows users to extend its capabilities, transforming custom infrastructure into a first-class citizen within the declarative management paradigm.

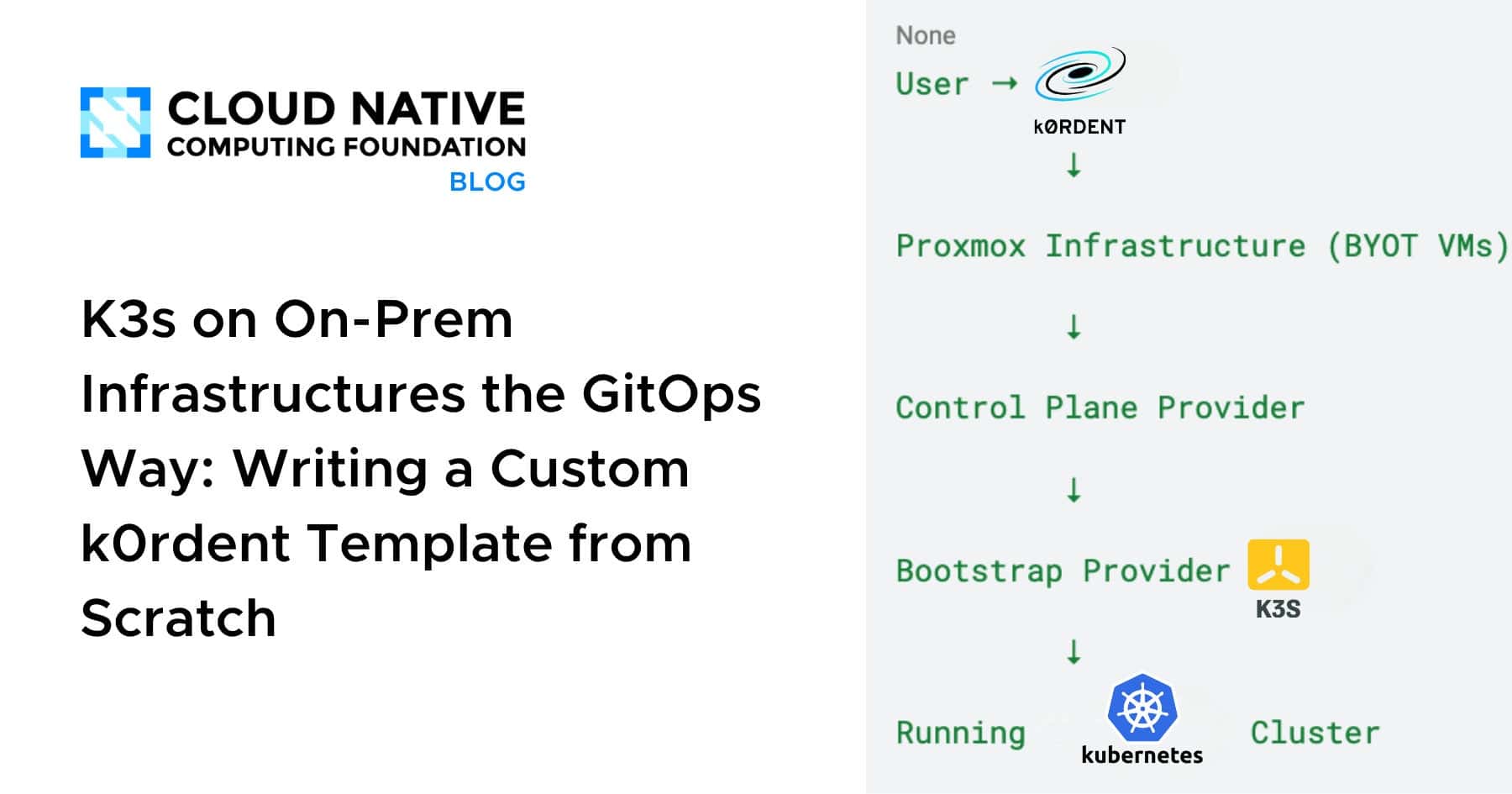

Deconstructing the Declarative Flow: A Three-Layered Architecture

The entire process is orchestrated through a series of declarative definitions, primarily using Helm charts, which k0rdent then reconciles. This multi-layered architecture ensures a clear separation of concerns, with each component responsible for a specific aspect of the cluster’s lifecycle.

Step 1: Infrastructure Provider (BYOT for Proxmox)

The initial phase focuses purely on provisioning the virtual machine infrastructure. Since a native Proxmox provider was unavailable, a custom Helm chart was developed to act as k0rdent’s Infrastructure Provider for Proxmox. This chart is intentionally narrowly scoped, focusing exclusively on infrastructure concerns.

Its key functions include:

- VM Creation: Interfacing with the Proxmox API to create new virtual machines.

- Resource Allocation: Defining CPU, memory, and storage for each VM.

- Network Configuration: Assigning network interfaces and IP addresses.

- Template Cloning: Utilizing pre-existing Proxmox VM templates as the base for new instances.

- Cloud-init Integration: Passing configuration data (like SSH keys, hostname, user data) to the VMs upon first boot via cloud-init, ensuring initial setup is automated and consistent.

Critically, this Helm chart contains no Kubernetes-specific logic. Its sole purpose is to provide ready-to-use virtual machines, treating the Proxmox environment as a pool of resources to be consumed declaratively. The Proxmox provider Helm charts are open-sourced and available for community use, fostering broader adoption and collaboration.
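The article does not show how the chart talks to Proxmox, so the following is only a sketch of the two Proxmox VE API requests that template cloning and cloud-init configuration come down to. It builds the request paths and parameters without sending anything. The node name, VM IDs, addresses, and the `ubuntu` user are hypothetical values. The parameter names (`newid`, `full`, `cores`, `memory`, `ciuser`, `ipconfig0`, `sshkeys`) are the standard Proxmox QEMU options.

```python
from urllib.parse import quote


def clone_request(node, template_vmid, new_vmid, name):
    """Return (path, params) for a full clone of an existing VM template."""
    path = f"/api2/json/nodes/{node}/qemu/{template_vmid}/clone"
    params = {"newid": new_vmid, "name": name, "full": 1}
    return path, params


def config_request(node, vmid, cores, memory_mb, ip_cidr, gateway,
                   ssh_public_key, user="ubuntu"):
    """Return (path, params) that size the VM and set its cloud-init data."""
    path = f"/api2/json/nodes/{node}/qemu/{vmid}/config"
    params = {
        "cores": cores,
        "memory": memory_mb,  # in MiB
        "ciuser": user,
        "ipconfig0": f"ip={ip_cidr},gw={gateway}",
        # Proxmox expects the public key in URL-encoded form.
        "sshkeys": quote(ssh_public_key, safe=""),
    }
    return path, params


if __name__ == "__main__":
    # All values here are hypothetical placeholders.
    print(clone_request("pve1", 9000, 101, "k3s-cp-0"))
    print(config_request("pve1", 101, 2, 4096, "192.168.1.50/24",
                         "192.168.1.1", "ssh-ed25519 AAAA user@host"))
```

Keeping the two requests as plain data mirrors the chart's narrow scope: nothing in them refers to Kubernetes.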

Step 2: Control Plane Provider

Once the underlying virtual machines are provisioned by the infrastructure provider, the Control Plane Provider takes charge. This component is responsible for establishing the Kubernetes control plane on the designated VMs. Its responsibilities include:

- Component Installation: Installing the essential Kubernetes control plane components (API server, scheduler, controller manager, and a datastore such as etcd), which K3s packages into its single server binary.

- Configuration: Configuring these components to communicate and operate correctly as a cohesive control plane.

- Certificate Management: Ensuring secure communication within the cluster through proper certificate generation and distribution.

- Role Assignment: Declaratively assigning roles (e.g., control plane, worker) to the provisioned VMs, transforming raw infrastructure into functional Kubernetes nodes.

In this setup, control plane nodes seamlessly run on the Proxmox VMs, with all necessary VM details flowing directly from the infrastructure provider. This ensures that the roles are assigned declaratively, making the cluster feel "intentional, not accidental," as described by the project team. The visual representations provided underscore the clear delineation of responsibilities and the logical flow of information.

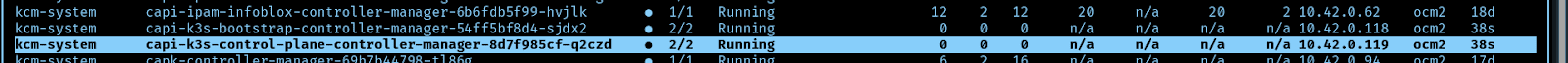

Step 3: Bootstrapping Kubernetes with K3s

The final major step involves bootstrapping Kubernetes onto the control plane and worker nodes. For this, K3s was selected as the bootstrap provider, a choice perfectly aligned with the project’s goals for a lightweight, fast, and efficient on-premise solution. The BootstrapProvider and ControlPlaneProvider definitions for K3s are elegantly simple, referencing the official cluster-api-k3s releases for their respective components.
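The definitions themselves are not reproduced in the article. As a rough sketch, assuming they follow the Cluster API Operator's `BootstrapProvider` and `ControlPlaneProvider` resources, they might be generated like this. The API version `operator.cluster.x-k8s.io/v1alpha2`, the release URL pattern, the component file names, and the `vX.Y.Z` version are all assumptions and should be checked against the versions actually installed.

```python
import json

# Assumed location of the cluster-api-k3s release artifacts.
RELEASES = "https://github.com/k3s-io/cluster-api-k3s/releases"


def provider_manifest(kind, name, version, components_file):
    """Build a Cluster API Operator provider object as a plain dict.

    `kind` is "BootstrapProvider" or "ControlPlaneProvider"; `version` is the
    provider release to pin (left as a placeholder by the caller below).
    """
    return {
        "apiVersion": "operator.cluster.x-k8s.io/v1alpha2",  # assumed version
        "kind": kind,
        "metadata": {"name": name},
        "spec": {
            "version": version,
            "fetchConfig": {"url": f"{RELEASES}/{version}/{components_file}"},
        },
    }


if __name__ == "__main__":
    # "vX.Y.Z" is a placeholder, not a real release tag.
    for kind, filename in (
        ("BootstrapProvider", "bootstrap-components.yaml"),
        ("ControlPlaneProvider", "control-plane-components.yaml"),
    ):
        print(json.dumps(provider_manifest(kind, "k3s", "vX.Y.Z", filename), indent=2))
```

Both objects differ only in their kind and the component file they fetch, which is consistent with the article's description of them as simple.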

The K3s Bootstrap Provider Helm chart manages the entire K3s lifecycle, encompassing:

- K3s Installation: Installing the K3s binary on the designated nodes.

- Cluster Initialization: Initiating the K3s cluster on the control plane node(s).

- Agent Joining: Configuring worker nodes to join the K3s cluster as agents.

- Configuration Management: Applying specific K3s configurations (e.g., custom CNI, storage provisioner settings).

- Health Checks: Monitoring the K3s components to ensure the cluster is operational.

Upon the successful completion of this step, a fully functional Kubernetes cluster, powered by K3s, is up and running on the Proxmox infrastructure, ready to host containerized applications.
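For readers unfamiliar with K3s, the sketch below shows the manual equivalent of installation, cluster initialization, and agent joining, using the documented `get.k3s.io` install script with the `K3S_TOKEN` and `K3S_URL` environment variables. It only builds the shell command strings. The bootstrap provider generates its own node configuration, so this is an illustration of what happens on each node rather than the provider's actual mechanism. The token and server address are hypothetical.

```python
import shlex

INSTALL_SCRIPT = "https://get.k3s.io"


def k3s_install_command(role, token, server_url=None, first_server=False):
    """Build the shell command that installs K3s on one node.

    role         -- "server" (control plane) or "agent" (worker)
    token        -- shared cluster secret, passed as K3S_TOKEN
    server_url   -- URL of an existing server, required for agents
    first_server -- initialize a new cluster with embedded etcd
    """
    if role not in ("server", "agent"):
        raise ValueError(f"unknown role: {role!r}")
    env = {"K3S_TOKEN": token}
    args = [role]
    if role == "agent":
        if not server_url:
            raise ValueError("agents need the URL of an existing server")
        env["K3S_URL"] = server_url
    elif first_server:
        args.append("--cluster-init")
    elif server_url:
        # Additional servers join an existing control plane.
        args += ["--server", server_url]
    env_part = " ".join(f"{k}={shlex.quote(v)}" for k, v in sorted(env.items()))
    return f"curl -sfL {INSTALL_SCRIPT} | {env_part} sh -s - {' '.join(args)}"


if __name__ == "__main__":
    # Token and address are placeholders.
    print(k3s_install_command("server", "s3cret", first_server=True))
    print(k3s_install_command("agent", "s3cret", server_url="https://10.0.0.10:6443"))
```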

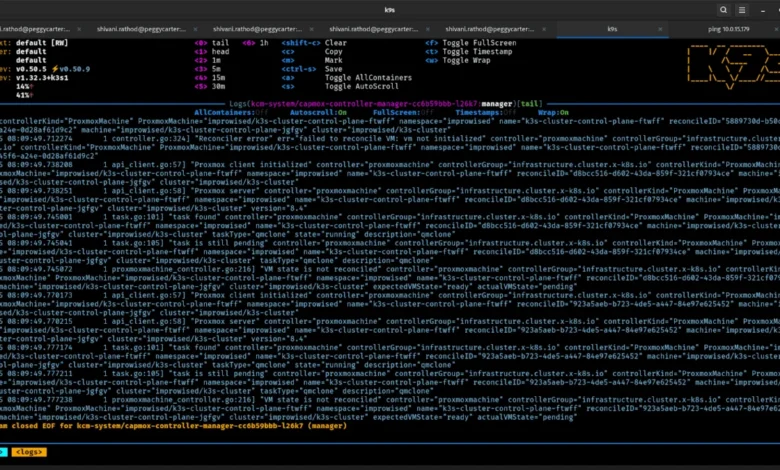

The Power of Continuous Reconciliation

The true strength of k0rdent, particularly when combined with this provider-based model, lies in its continuous reconciliation loop. Unlike traditional imperative scripts that execute once and then leave the system in an unmanaged state, k0rdent constantly monitors the actual state of the infrastructure and cluster against the desired state defined in the declarative configurations.

This means:

- Self-Healing Infrastructure: If a VM fails or deviates from its specified configuration, k0rdent will detect the discrepancy and attempt to remediate it.

- Automated Scaling: Scaling the cluster up or down simply involves updating the desired number of nodes in the configuration; k0rdent handles the provisioning or de-provisioning of VMs and the integration into the Kubernetes cluster.

- Guaranteed Consistency: Any manual changes that diverge from the declarative definition will be identified and, if configured, automatically reverted or flagged for attention, preventing configuration drift.

- Repeatable Deployments: The exact same declarative configuration can be used to spin up identical clusters across different Proxmox instances, ensuring consistency from development to production environments.

The result is a fully declarative and managed K3s cluster on the on-premise environment, where the infrastructure and Kubernetes lifecycle are treated as code, version-controlled, and continuously reconciled.
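The reconciliation idea can be sketched in a few lines. The function below is a simplified model, not k0rdent's implementation: it compares a desired node count per role against the observed nodes and returns the actions needed to converge. The `Node` type, the role names, and the action tuples are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    role: str
    healthy: bool = True


def reconcile(desired, actual):
    """Return the actions that move `actual` toward `desired`.

    desired -- dict mapping role to the wanted number of nodes
    actual  -- list of observed Node objects
    Each action is a (verb, role, node_name) tuple; node_name is None for
    nodes that still have to be created.
    """
    actions = []
    roles = sorted(set(desired) | {n.role for n in actual})
    for role in roles:
        want = desired.get(role, 0)
        nodes = sorted((n for n in actual if n.role == role), key=lambda n: n.name)
        healthy = [n for n in nodes if n.healthy]
        # Self-healing: unhealthy nodes are removed and replaced below.
        for n in nodes:
            if not n.healthy:
                actions.append(("delete", role, n.name))
        # Scale down: drop healthy nodes beyond the desired count.
        for n in healthy[want:]:
            actions.append(("delete", role, n.name))
        # Scale up (or replace): create whatever is still missing.
        for _ in range(want - len(healthy)):
            actions.append(("create", role, None))
    return actions


if __name__ == "__main__":
    desired = {"control-plane": 1, "worker": 3}
    actual = [Node("cp-0", "control-plane"), Node("w-0", "worker"),
              Node("w-1", "worker", healthy=False)]
    for action in reconcile(desired, actual):
        print(action)
```

Running the loop repeatedly is what distinguishes this from a one-shot script: once the actions are applied, the next pass returns an empty list until something drifts again.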

Broader Implications and Future Outlook

This successful implementation holds significant implications for the wider cloud-native community, particularly for organizations navigating the complexities of hybrid and edge computing. According to recent CNCF surveys, on-premise and hybrid cloud deployments remain a critical component of enterprise IT strategies, often alongside public cloud usage. Solutions like this bridge the gap, bringing the operational elegance of cloud-native principles to self-managed infrastructure.

"This project is a testament to the power of open-source collaboration and the extensibility of the cloud-native ecosystem," commented Prithvi Raj, a CNCF Ambassador. "By demonstrating how to effectively leverage k0rdent’s BYOT with K3s on Proxmox, we’re empowering a broader range of users to embrace declarative Kubernetes management, regardless of their underlying infrastructure choices. This significantly lowers the barrier to entry for robust on-premise and edge deployments."

The ability to "bring your own template" ensures that organizations are not locked into specific infrastructure providers or rigid deployment models. It fosters innovation by allowing the community to extend the capabilities of tools like k0rdent to virtually any environment, from custom bare-metal setups to specialized virtualization platforms. This flexibility is crucial as the cloud-native landscape continues to diversify, with edge computing, IoT, and sovereign cloud initiatives gaining momentum.

Ultimately, this development signifies a maturation of on-premise Kubernetes management. It moves beyond the era of bespoke scripts and manual interventions, ushering in a new standard where self-hosted infrastructure can be managed with the same declarative rigor and automation traditionally associated with hyperscale cloud providers. Scaling a cluster is no longer a weekend project; rebuilding it is no longer terrifying. It is simply a matter of configuration, treated as code, and continuously reconciled. This approach not only streamlines operations but also enhances security, reliability, and the overall developer experience for teams operating in hybrid and edge environments. As Kubernetes continues its journey, solutions like this will be instrumental in ensuring its ubiquity and adaptability across the entire spectrum of modern computing infrastructure.