Declarative Multi-Cluster Management with K3s on Proxmox using k0rdent: A Blueprint for On-Premise Kubernetes Excellence

The cloud-native landscape, anchored by Kubernetes, has undergone a transformative journey since its inception a dozen years ago, evolving from an internal Google project into the de facto operating system for modern infrastructure. This ubiquitous platform now orchestrates workloads across an astonishing array of environments, from powerful mainframes and GPU-accelerated clusters to diverse multi-cloud, hybrid, on-premise, and burgeoning edge deployments. Complementing this evolution, the Cloud Native Computing Foundation (CNCF) ecosystem has expanded dramatically, introducing a myriad of projects designed to fill the functional gaps and enhance the operational capabilities of Kubernetes.

While the vastness of the CNCF landscape addresses countless challenges, a particularly intricate intersection—the deployment of lightweight Kubernetes, specifically K3s, on on-premise infrastructure like Proxmox, coupled with the declarative multi-cluster management provided by k0rdent—presents a compelling solution to long-standing operational complexities. This article delves into a meticulously curated use case, demonstrating how a robust, repeatable, and declarative approach can provision K3s clusters within a self-hosted environment, leveraging custom Helm charts and k0rdent’s "Bring Your Own Template" (BYOT) methodology. This isn’t merely a theoretical exercise; it represents a production-ready blueprint for managing on-premise Kubernetes clusters with unprecedented efficiency and reliability.

The On-Premise Kubernetes Predicament: A Legacy of Pain Points

For organizations committed to running Kubernetes on their own physical infrastructure, the journey has historically been fraught with significant challenges. The allure of control, data sovereignty, and potentially lower long-term costs often comes hand-in-hand with a litany of operational headaches. Common grievances voiced by IT professionals and site reliability engineers include:

- Manual Configuration Drudgery: Setting up and maintaining Kubernetes clusters on bare metal or custom virtualization platforms often devolves into a series of manual, script-heavy, and error-prone tasks. Each node, each component, requires precise configuration, leaving ample room for human error.

- Inconsistent Deployments: Without standardized automation, clusters tend to drift, leading to inconsistencies across different environments or even between nodes within the same cluster. This makes troubleshooting difficult and scales poorly.

- Scaling Nightmares: Expanding an on-premise cluster—adding new worker nodes or even control plane instances—can become a complex, time-consuming project, often requiring re-evaluation of network configurations, storage, and security policies for each new addition.

- Lack of Repeatability: Reproducing a cluster configuration, whether for development, testing, or disaster recovery, is often a monumental task, undermining the agility that Kubernetes is supposed to deliver.

- Vendor Lock-in and Custom Solutions: While public cloud providers offer managed Kubernetes services that abstract away much of this complexity, on-premise environments often necessitate custom tooling or reliance on specific vendor solutions, which can limit flexibility and portability.

- Difficulty in Upgrades: Patching and upgrading Kubernetes components or the underlying operating system can introduce significant downtime and operational risk, especially in highly customized on-prem setups.

These pain points collectively highlight a fundamental disconnect: while Kubernetes champions declarative infrastructure and automation, its on-premise implementation often forces teams back into imperative, manual workflows. The objective, therefore, was to devise a solution that retains the benefits of on-premise deployment while embracing the declarative, repeatable, and clean principles central to modern cloud-native operations, all while remaining friendly to the unique constraints of a self-hosted environment. This is precisely where the synergistic combination of k0rdent, Proxmox, and K3s emerges as a powerful alternative.

The Vision: A Declarative Multi-Cluster Management Ecosystem

The core architectural objective was to construct a robust and highly automated system capable of provisioning and managing K3s clusters on Proxmox. This system was designed around several key principles:

- Declarative Infrastructure: The entire setup, from virtual machines to Kubernetes components, should be defined through declarative configurations, ensuring that the desired state is consistently maintained.

- Lightweight Kubernetes: K3s was chosen for its minimal resource footprint and rapid deployment capabilities, making it ideal for on-premise and edge scenarios where efficiency is paramount.

- On-Premise Compatibility: Direct integration with Proxmox, a popular open-source virtualization management platform, was crucial to leverage existing self-hosted infrastructure investments.

- Multi-Cluster Management: The solution needed to support the management of multiple Kubernetes clusters from a centralized control plane, simplifying fleet operations.

- Extensibility (BYOT): The ability to "Bring Your Own Template" for infrastructure provisioning was a non-negotiable requirement, allowing for pre-optimized base images and accelerating deployment times.

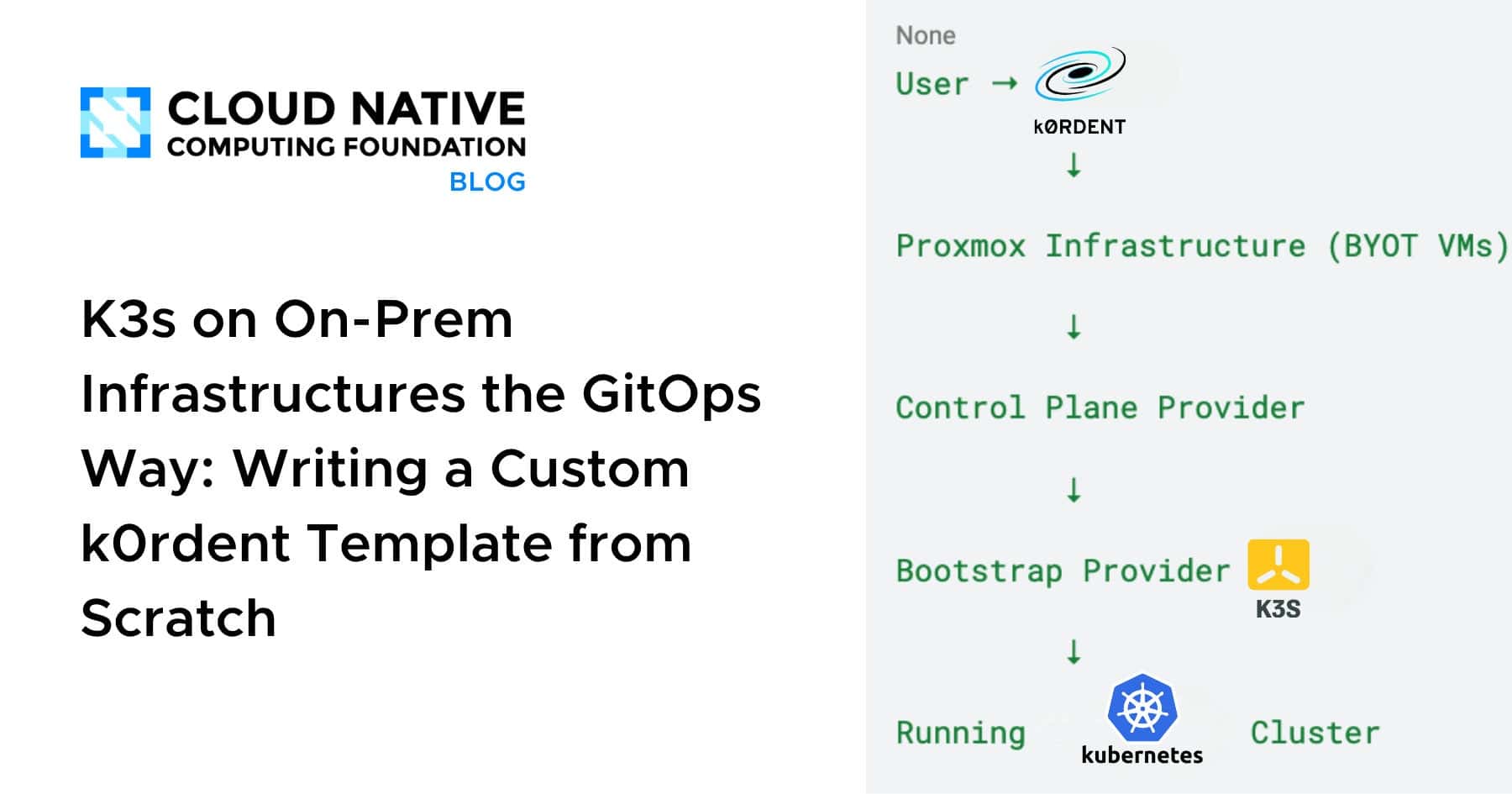

The high-level operational flow envisioned for this system clearly delineates responsibilities, ensuring each component performs its specific function with precision:

- User Interaction: An operator initiates the cluster provisioning request via k0rdent.

- k0rdent Orchestration: k0rdent acts as the central orchestrator, translating the declarative intent into actionable steps.

- Proxmox Infrastructure (BYOT VMs): The infrastructure provider, custom-built for Proxmox, provisions virtual machines based on pre-defined templates.

- Control Plane Provider: This layer is responsible for configuring the control plane components of the Kubernetes cluster.

- Bootstrap Provider (K3s): The bootstrap provider installs and initializes K3s on the provisioned VMs.

- Running Kubernetes Cluster: The final outcome is a fully operational K3s Kubernetes cluster, ready for workload deployment.

This layered approach ensures a clean separation of concerns, making the system modular, maintainable, and highly reliable.

Why On-Premise, k0rdent, and K3s Form a Potent Combination

Proxmox represents a prime example of a self-hosted environment where organizations can achieve significant operational control and cost efficiencies. However, the traditional approach to deploying Kubernetes on such platforms often leads to bespoke, fragile, and difficult-to-scale installations. k0rdent fundamentally alters this paradigm. Instead of relying on imperative scripts that dictate how actions should be performed, k0rdent embraces a declarative model where operators specify what the desired state of their infrastructure and clusters should be. The system’s reconciliation loops then continuously work to achieve and maintain that state, abstracting away much of the underlying operational complexity.

k0rdent, operating within the broader Cluster API ecosystem, offers a distinct separation of concerns that is particularly beneficial for on-premise integrations:

- Infrastructure Provider: Manages the lifecycle of underlying infrastructure (e.g., VMs, bare metal).

- Control Plane Provider: Handles the deployment and management of Kubernetes control plane components.

- Bootstrap Provider: Installs and configures Kubernetes on the provisioned infrastructure.

This clear delineation of responsibilities proved instrumental in seamlessly integrating Proxmox, a platform not natively supported by many existing Cluster API implementations.
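To make the split concrete, here is a minimal sketch of how a Cluster API `Cluster` object hands work to the other layers through references. The `infrastructureRef` and `controlPlaneRef` fields come from the upstream `cluster.x-k8s.io/v1beta1` API. The provider kinds, API groups, and object names are hypothetical placeholders, not the ones used by the charts described here.

```python
import json


def object_ref(api_version, kind, name):
    """Reference to another Kubernetes object in the same namespace."""
    return {"apiVersion": api_version, "kind": kind, "name": name}


def cluster_manifest(name, namespace, infrastructure_ref, control_plane_ref):
    """Build a Cluster API `Cluster` that delegates to two provider objects."""
    return {
        "apiVersion": "cluster.x-k8s.io/v1beta1",
        "kind": "Cluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # Owned by the Infrastructure Provider (VMs, networking).
            "infrastructureRef": infrastructure_ref,
            # Owned by the Control Plane Provider (API server, datastore).
            "controlPlaneRef": control_plane_ref,
        },
    }


# Hypothetical kinds and API groups, for illustration only.
manifest = cluster_manifest(
    name="demo",
    namespace="default",
    infrastructure_ref=object_ref(
        "infrastructure.example.com/v1", "ExampleProxmoxCluster", "demo"
    ),
    control_plane_ref=object_ref(
        "controlplane.example.com/v1", "ExampleK3sControlPlane", "demo-cp"
    ),
)

if __name__ == "__main__":
    print(json.dumps(manifest, indent=2))
```

Bootstrap configuration does not appear on the `Cluster` itself. In Cluster API it is referenced further down, from the machine-level objects, which is why the three providers can evolve independently.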

Step 1: Infrastructure Provider – Bringing Your Own Template (BYOT) for Proxmox

A significant hurdle for on-premise Kubernetes deployments is the provisioning of the underlying compute infrastructure. Since k0rdent, or the broader Cluster API, does not natively ship with a Proxmox provider, the solution necessitated the development of a custom Infrastructure Provider. This was achieved by creating a dedicated Helm chart, specifically designed to interface with Proxmox and manage VM lifecycles.

The Power of Bring Your Own Template (BYOT):

A critical design choice was the adoption of the BYOT approach. Instead of dynamically building VM images during each provisioning cycle, which can be time-consuming and resource-intensive, the strategy leveraged existing, pre-configured Proxmox VM templates. These templates were prepared with essential configurations already in place, including:

- Cloud-init Enabled: Cloud-init, a widely used industry standard for cross-platform cloud instance initialization, was pre-configured, allowing for automated setup tasks upon VM boot.

- SSH Access: Secure Shell (SSH) access was pre-configured, enabling secure remote management and initial bootstrapping steps.

- Base OS Packages: Essential operating system packages and dependencies were pre-installed, reducing post-provisioning setup time.

This BYOT approach delivered several tangible advantages:

- Accelerated Provisioning: VMs could be spun up much faster, as the bulk of the OS and initial configuration was already handled.

- Enhanced Consistency: All VMs started from the same golden image, guaranteeing a high degree of consistency across the cluster.

- Simplified Maintenance: Updates to the base OS or common utilities could be applied once to the template, benefiting all subsequent deployments.

- Reduced Complexity: The infrastructure provider’s role was streamlined, focusing purely on VM instantiation rather than intricate image building.
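As a rough illustration of why BYOT keeps the provider simple, instantiating a VM from a template reduces to a single clone call against the Proxmox VE API. The sketch below only builds a description of that request and sends nothing. The endpoint path and the `newid`, `name`, and `full` parameters follow the Proxmox VE API; the node name, VM IDs, and the `clone_request` helper are assumptions made for this example, and the Helm chart described here may drive Proxmox differently.

```python
def clone_request(node, template_vmid, new_vmid, name, full_clone=True):
    """Describe the HTTP call that clones a VM template (nothing is sent)."""
    path = f"/api2/json/nodes/{node}/qemu/{template_vmid}/clone"
    form = {
        "newid": new_vmid,               # ID of the VM to create
        "name": name,                    # display name of the new VM
        "full": 1 if full_clone else 0,  # 0 requests a linked clone
    }
    return "POST", path, form


# Hypothetical node name and VM IDs.
method, path, form = clone_request(
    node="pve1", template_vmid=9000, new_vmid=121, name="k3s-worker-1"
)

if __name__ == "__main__":
    print(method, path, form)
```

A linked clone (`full=0`) is faster to create but stays dependent on the template's disk, so which mode fits depends on the storage backend and on how templates are rotated.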

Helm Chart for Proxmox Infrastructure Provisioning:

The custom Helm chart for the Proxmox Infrastructure Provider was intentionally scoped to handle only infrastructure-related tasks, adhering to the principle of separation of concerns. Its responsibilities included:

- VM Creation and Deletion: Managing the full lifecycle of virtual machines within Proxmox.

- Resource Allocation: Specifying CPU, memory, and storage for each VM.

- Network Configuration: Assigning network interfaces and IP addresses.

- Volume Management: Attaching and detaching storage volumes.

- Cloud-init Parameter Injection: Passing specific configuration data to cloud-init for per-VM customization.

- No Kubernetes Logic: Crucially, this layer remained entirely agnostic to Kubernetes-specific configurations, ensuring a clean abstraction.

This modular design kept the infrastructure layer robust and independent, providing a solid foundation for the subsequent Kubernetes deployment stages. The Helm charts for this Proxmox provider are publicly available, which supports community contribution and reuse.
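The per-VM inputs listed above (resources, network, cloud-init parameters) can be pictured as a small spec that is translated into Proxmox VM configuration options. This is a sketch under assumptions: `VMSpec` and `config_payload` are hypothetical names, and the actual chart may expose these settings as Helm values instead. The option names `cores`, `memory`, `ipconfig0`, and `sshkeys` are Proxmox VE VM configuration options, and `sshkeys` is expected in URL-encoded form.

```python
from dataclasses import dataclass
from urllib.parse import quote


@dataclass(frozen=True)
class VMSpec:
    """Infrastructure-only description of one VM; no Kubernetes settings."""
    name: str
    cores: int
    memory_mib: int
    ip_cidr: str
    gateway: str
    ssh_public_key: str


def config_payload(spec):
    """Translate a VMSpec into Proxmox VM configuration options."""
    return {
        "name": spec.name,
        "cores": spec.cores,
        "memory": spec.memory_mib,
        # Cloud-init network settings for the first interface.
        "ipconfig0": f"ip={spec.ip_cidr},gw={spec.gateway}",
        # Proxmox expects the public key URL-encoded.
        "sshkeys": quote(spec.ssh_public_key, safe=""),
    }


# Hypothetical values for illustration.
worker = VMSpec(
    name="k3s-worker-1",
    cores=2,
    memory_mib=4096,
    ip_cidr="192.168.10.21/24",
    gateway="192.168.10.1",
    ssh_public_key="ssh-ed25519 AAAAEXAMPLE ops@example",
)
payload = config_payload(worker)

if __name__ == "__main__":
    print(payload)
```

Nothing in the spec mentions Kubernetes, which mirrors the "No Kubernetes Logic" constraint: the same payload would serve any workload booted from the template.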

Step 2: Control Plane Provider – Orchestrating Kubernetes Brains

Once the virtual machines are provisioned and brought online by the Infrastructure Provider, the Control Plane Provider assumes responsibility. This critical layer is tasked with establishing and managing the core components of the Kubernetes control plane, effectively transforming raw VMs into intelligent orchestrators. Its primary functions include:

- Installation of Control Plane Components: Deploying essential Kubernetes services like the API server, scheduler, controller manager, and etcd (or K3s’s embedded SQLite/external database).

- Certificate Management: Generating and distributing the necessary TLS certificates for secure communication within the cluster.

- High Availability Configuration: Setting up and managing multiple control plane nodes to ensure resilience and fault tolerance, particularly crucial for production environments.

- Node Role Assignment: Clearly designating which provisioned VMs will serve as control plane nodes, ensuring a clear and declarative assignment of responsibilities.

In this specific setup, the control plane nodes are deployed onto the Proxmox VMs, with the necessary VM details (IP addresses, hostnames, resource identifiers) flowing from the underlying Infrastructure Provider. This exchange gives the Control Plane Provider the context it needs to configure Kubernetes correctly. Because roles are declared explicitly rather than assigned by hand, the resulting cluster architecture is predictable, and the ambiguity and misconfiguration that manual setups invite are largely avoided. The diagrams in the original post illustrate this flow, showing how each VM is deliberately integrated into the control plane.
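For the high-availability point, a short calculation shows why control plane node counts are usually odd. The sketch assumes an etcd-backed control plane, where a majority of members (a quorum) must stay reachable. It does not apply to a single-server SQLite setup, and the function names are illustrative.

```python
def quorum(members):
    """Smallest majority of an etcd-style cluster with `members` nodes."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def tolerated_failures(members):
    """How many members can be lost while a quorum still exists."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in range(1, 6):
        print(f"{n} control plane node(s): quorum={quorum(n)}, "
              f"tolerates {tolerated_failures(n)} failure(s)")
```

Going from three to four control plane nodes raises the quorum without raising the number of tolerated failures, so a declared control plane size of three or five is the common choice.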

Step 3: Bootstrapping Kubernetes with K3s – The Lightweight Champion

With the infrastructure provisioned and the control plane logic ready, the final stage involves bootstrapping Kubernetes onto the nodes. For this crucial step, K3s was the unequivocal choice, and for compelling reasons. K3s, often dubbed "Lightweight Kubernetes," is a highly optimized, fully compliant Kubernetes distribution specifically designed for resource-constrained environments, edge computing, and on-premise deployments where simplicity and efficiency are paramount.

Why K3s?

- Minimal Footprint: K3s binaries are incredibly small, often less than 100MB, significantly reducing download times and storage requirements.

- Fast Installation: Its single-binary architecture allows for incredibly rapid installation and startup times, dramatically accelerating cluster provisioning.

- Reduced Dependencies: K3s streamlines Kubernetes by removing non-essential features and replacing heavier components (like etcd with SQLite or external DB options, or `cloud-controller-manager` with a simpler provider) while remaining fully Kubernetes API compliant.

- Edge and IoT Suitability: Its design makes it perfect for edge locations, IoT devices, and on-premise servers where resources are limited and operational simplicity is key.

- Production Ready: Despite its lightweight nature, K3s is a production-grade Kubernetes distribution, backed by Rancher Labs (a SUSE company) and widely adopted.

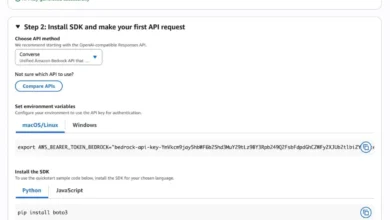

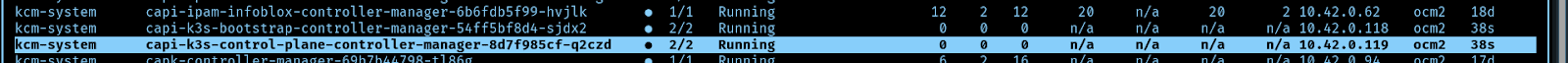

The bootstrap process for K3s is defined through declarative provider definitions within k0rdent, as illustrated by the YAML snippets shown above. These definitions specify the version of the K3s Cluster API provider to use and where to fetch its configuration components, which is what integrates the provider into the k0rdent ecosystem. The fetchConfig.url points directly to the official K3s Cluster API releases, so the components for the specified version are pulled from the upstream project rather than from a locally maintained copy.
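The snippets themselves appear only as images, so the following is a sketch of what such a provider definition might look like when generated programmatically, not a reproduction of them. It assumes the Cluster API Operator resource shape (`operator.cluster.x-k8s.io/v1alpha2`, kinds `BootstrapProvider` and `ControlPlaneProvider`, with `spec.version` and `spec.fetchConfig.url`). The version string, namespace, and URLs are placeholders to be replaced with the values from the upstream release.

```python
import json


def provider_definition(kind, name, namespace, version, components_url):
    """Build a provider object with a pinned version and fetch location."""
    if kind not in ("BootstrapProvider", "ControlPlaneProvider"):
        raise ValueError(f"unsupported provider kind: {kind}")
    return {
        "apiVersion": "operator.cluster.x-k8s.io/v1alpha2",  # assumed
        "kind": kind,
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "version": version,
            "fetchConfig": {"url": components_url},
        },
    }


# Placeholder version and URLs; substitute the real release values.
VERSION = "v0.0.0"
bootstrap = provider_definition(
    "BootstrapProvider", "k3s", "example-system", VERSION,
    f"https://example.invalid/releases/{VERSION}/bootstrap-components.yaml",
)
control_plane = provider_definition(
    "ControlPlaneProvider", "k3s", "example-system", VERSION,
    f"https://example.invalid/releases/{VERSION}/control-plane-components.yaml",
)

if __name__ == "__main__":
    print(json.dumps([bootstrap, control_plane], indent=2))
```

Keeping the version in one place and deriving both URLs from it avoids the bootstrap and control plane components drifting to different releases.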

The Bootstrap Provider Helm chart specifically handles the entire K3s lifecycle on the target VMs:

- K3s Installation: Deploying the K3s server and agent binaries onto the respective control plane and worker nodes.

- Cluster Initialization: Initiating the K3s cluster, configuring the control plane, and setting up the necessary internal components.

- Joining Nodes: Orchestrating worker nodes to join the K3s cluster using secure tokens.

- Network and Runtime Configuration: Ensuring the K3s networking and runtime components (e.g., Flannel for pod networking, containerd as the container runtime) are correctly installed and configured.

- Lifecycle Management: Beyond initial setup, the provider also manages upgrades and other lifecycle events for the K3s installation.
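To show what installation, initialization, and joining amount to on each VM, the sketch below assembles the shell commands documented for the upstream K3s install script (`https://get.k3s.io`, with the `K3S_TOKEN`, `K3S_URL`, and `INSTALL_K3S_VERSION` variables and the `--cluster-init` and `--server` flags). It is not the bootstrap provider's implementation: a Cluster API bootstrap provider would typically render equivalent steps into cloud-init data. The token, address, and `install_command` helper are assumptions for illustration.

```python
import shlex

INSTALL_URL = "https://get.k3s.io"


def install_command(role, token, version=None, server_url=None,
                    cluster_init=False):
    """Return the shell command that installs K3s for the given role."""
    env = {"K3S_TOKEN": token}
    if version:
        env["INSTALL_K3S_VERSION"] = version
    args = []
    if role == "server":
        args.append("server")
        if cluster_init:
            args.append("--cluster-init")          # first server, embedded etcd
        elif server_url:
            args += ["--server", server_url]       # additional server joins
    elif role == "agent":
        if not server_url:
            raise ValueError("an agent needs the server URL to join")
        env["K3S_URL"] = server_url                # presence selects agent mode
    else:
        raise ValueError(f"unknown role: {role}")
    env_part = " ".join(f"{k}={shlex.quote(v)}" for k, v in env.items())
    shell = "sh -s - " + " ".join(shlex.quote(a) for a in args) if args else "sh -"
    return f"curl -sfL {INSTALL_URL} | {env_part} {shell}"


if __name__ == "__main__":
    # Hypothetical token and address.
    print(install_command("server", "example-token", cluster_init=True))
    print(install_command("agent", "example-token",
                          server_url="https://10.0.0.10:6443"))
```

The shared token is what makes the join secure, so in practice it would be generated per cluster and delivered as a secret rather than written into a template.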

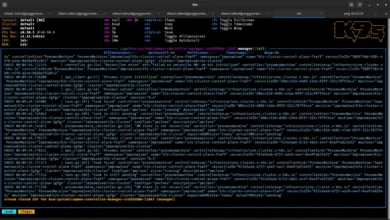

Upon the successful completion of this step, the Kubernetes cluster is fully operational, with K3s orchestrating containers across the Proxmox-provisioned VMs.

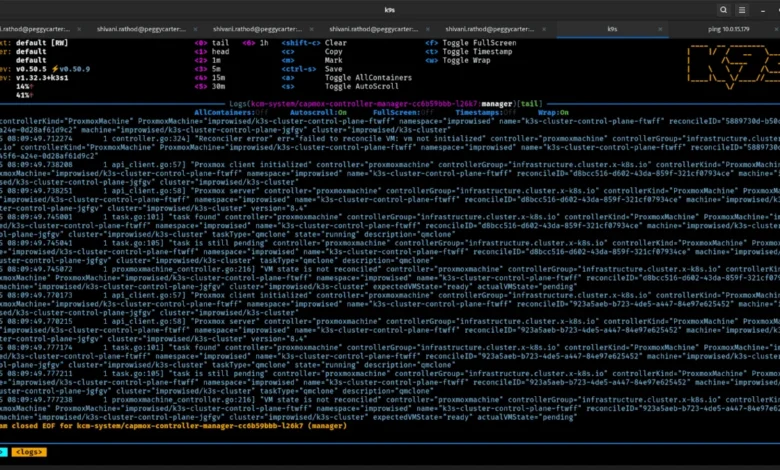

The Orchestrator: How k0rdent Ties It All Together Through Continuous Reconciliation

The true power of this solution lies in k0rdent’s ability to seamlessly integrate these disparate layers and ensure a consistent desired state through continuous reconciliation. Rather than a one-off scripting exercise, k0rdent establishes a dynamic, self-healing system:

- Desired State Definition: The operator defines the target Kubernetes cluster’s configuration—number of control plane nodes, worker nodes, VM specifications, K3s version, and other parameters—using declarative YAML.

- Infrastructure Provisioning: k0rdent invokes the custom Proxmox Infrastructure Provider, which, based on the desired state, provisions the necessary virtual machines using the BYOT templates.

- Control Plane Configuration: Once VMs are ready, k0rdent activates the Control Plane Provider to install and configure the Kubernetes control plane components (e.g., K3s server) on the designated nodes.

- Kubernetes Bootstrapping: The Bootstrap Provider (K3s) takes over, installing the K3s binaries and initializing the cluster, bringing Kubernetes to life.

- Continuous Reconciliation Loop: Crucially, k0rdent doesn’t stop here. It continuously monitors the actual state of the infrastructure and Kubernetes cluster against the declared desired state. If any drift is detected—a VM goes down, a K3s component fails, or a configuration changes unexpectedly—k0rdent automatically triggers reconciliation actions to restore the desired state.

- Scalability and Resilience: This reconciliation loop also handles scaling events. If the desired state is updated to include more worker nodes, k0rdent will provision new VMs, bootstrap K3s onto them, and integrate them into the cluster, all automatically.

The result is a fully declarative and robust K3s cluster operating on a self-managed Proxmox environment. This paradigm shift means that operators are freed from the burden of imperative scripting and manual interventions, instead focusing on defining the desired outcome and trusting the system to achieve and maintain it.
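The reconciliation idea can be sketched in a few lines: compare the declared state with the observed state and derive the actions that close the gap. This is a toy model under stated assumptions, not k0rdent's controller code. `ClusterState`, `plan`, and `reconcile_once` are hypothetical names, and every action is assumed to succeed immediately.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClusterState:
    control_plane_nodes: int
    worker_nodes: int
    k3s_version: str


def plan(desired, actual):
    """List the actions needed to move `actual` to `desired`."""
    actions = []
    for label, want, have in (
        ("control plane", desired.control_plane_nodes, actual.control_plane_nodes),
        ("worker", desired.worker_nodes, actual.worker_nodes),
    ):
        if want > have:
            actions.append(f"create {want - have} {label} node(s)")
        elif want < have:
            actions.append(f"delete {have - want} {label} node(s)")
    if desired.k3s_version != actual.k3s_version:
        actions.append(
            f"upgrade K3s from {actual.k3s_version} to {desired.k3s_version}"
        )
    return actions


def reconcile_once(desired, actual):
    """One pass of the loop; returns the new observed state and the actions."""
    actions = plan(desired, actual)
    # A real controller would call the providers here and re-observe.
    return (desired if actions else actual), actions


if __name__ == "__main__":
    desired = ClusterState(3, 5, "vX")   # placeholder version label
    observed = ClusterState(3, 3, "vX")  # e.g. two workers were lost
    observed, actions = reconcile_once(desired, observed)
    print(actions)
    print(reconcile_once(desired, observed)[1])  # nothing left to do
```

Scaling and self-healing fall out of the same comparison: raising the desired worker count and losing a worker both produce a "create" action, which is why no separate scaling workflow is needed.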

Real-World Outcomes and Broader Implications

The successful implementation of this declarative pipeline for K3s on Proxmox, orchestrated by k0rdent, yields significant operational advantages:

- Repeatability and Consistency: Clusters deployed from the same definitions and templates start from the same configuration, and the reconciliation loop corrects drift as it appears, which makes behavior predictable. This is invaluable for development, testing, and production environments.

- Simplified Scaling: Expanding or contracting the cluster becomes a mere configuration change, significantly reducing the operational overhead typically associated with on-premise infrastructure adjustments.

- Disaster Recovery: The cluster itself can be rebuilt from declarative definitions, which shortens the recovery time objective (RTO) in disaster scenarios. The recovery point objective (RPO) for application data still depends on separate backups of persistent volumes and the cluster datastore.

- Reduced Operational Cost: Automation minimizes manual labor, freeing up highly skilled engineers to focus on higher-value tasks rather than repetitive infrastructure management.

- Democratization of On-Premise Kubernetes: This approach makes advanced Kubernetes management accessible to organizations that prefer or require on-premise deployments but lack the resources for extensive custom tooling.

- Enhanced Agility: New clusters can be spun up rapidly for specific projects or teams, fostering greater innovation and responsiveness.

As Shivani Rathod from Improwised Tech and CNCF Ambassador Prithvi Raj highlighted through their work, the motivation was to overcome the inherent "pain" of on-premise Kubernetes. Their solution underscores that running Kubernetes on self-hosted infrastructure no longer necessitates a reliance on ad-hoc scripts and "duct tape" solutions. By embracing k0rdent’s BYOT approach, on-premise infrastructure transcends its traditional limitations and becomes a first-class citizen in the cloud-native ecosystem—declarative, reproducible, and seamlessly managed. If an organization’s specific infrastructure isn’t supported out-of-the-box, the framework actively encourages building custom templates, embodying the very spirit of extensibility and community contribution that defines the cloud-native movement. This methodology sets a new standard for managing on-premise and edge Kubernetes deployments, paving the way for more efficient, resilient, and scalable operations.