Declarative On-Premise Kubernetes Management Achieves New Milestones with K3s, Proxmox, and k0rdent Integration

In a post published on April 17, 2026, Shivani Rathod of Improwised Tech and Prithvi Raj, a CNCF Ambassador, present a declarative method for provisioning and managing lightweight Kubernetes clusters, specifically K3s, on Proxmox-based infrastructure, using the multi-cluster management capabilities of k0rdent. The approach brings cloud-native operational practices to on-premise environments, where the complexity of Kubernetes orchestration has traditionally been a barrier.

The Evolving Landscape of Kubernetes and On-Premise Infrastructure

Twelve years after its inception as a Google side project, Kubernetes has matured into the de facto operating system for modern infrastructure, underpinning applications across a wide range of environments, from mainframes and GPUs to multi-cloud, hybrid, on-premise, and edge deployments. The Cloud Native Computing Foundation (CNCF) ecosystem has expanded in parallel, developing projects that address specific needs and fill the architectural gaps left by Kubernetes’ core design. While cloud-based Kubernetes services have streamlined deployments for many, a substantial portion of enterprises continue to operate critical workloads on-premise, driven by data sovereignty requirements, compliance mandates, performance needs, or existing hardware investments.

However, managing Kubernetes in an on-premise setting has historically presented formidable challenges. These often include the manual, error-prone nature of provisioning, the difficulty in achieving consistent and repeatable deployments across different clusters, and the operational overhead associated with scaling and maintaining the infrastructure layer beneath Kubernetes. Traditional approaches frequently rely on bespoke scripting, which, while functional for initial setup, quickly becomes unwieldy, non-declarative, and difficult to audit or replicate. This lack of automation and declarative control has been a significant barrier to adopting cloud-native best practices in private data centers.

k0rdent: Bridging the Gap in On-Premise Orchestration

The solution presented in this new integration addresses these pain points by combining lightweight Kubernetes (K3s), a virtualization platform (Proxmox), and a declarative multi-cluster management tool (k0rdent). k0rdent is a multi-cluster management system built on Cluster API principles. Instead of dictating how a cluster should be built through sequential commands, it lets operators describe what the desired state of their clusters should be. It then runs a continuous reconciliation loop to bring the actual state in line with the declared one, automating the underlying provisioning, configuration, and maintenance tasks.

This shift from imperative scripting to declarative management is particularly impactful for on-premise environments. k0rdent’s design promotes a clear separation of concerns, dividing the complex task of cluster provisioning into distinct, manageable layers:

- Infrastructure Provider: Responsible for provisioning the underlying virtual machines or bare metal resources.

- Control Plane Provider: Manages the Kubernetes control plane components (e.g., API server, scheduler, controller manager).

- Bootstrap Provider: Handles the initial installation and configuration of Kubernetes on the nodes.

This modularity proves crucial for integrating diverse infrastructure types, as demonstrated by the custom Proxmox integration.
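The layering described above can be illustrated with a small sketch. It is not k0rdent or Cluster API code: the `Machine` type, the three provider interfaces, and the `Fake*` classes are hypothetical stand-ins that only show how each layer can be swapped without touching the others.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class Machine:
    name: str
    role: str  # "control-plane" or "worker"
    steps: list[str] = field(default_factory=list)  # record of what each layer did


class InfrastructureProvider(Protocol):
    def provision(self, name: str, role: str) -> Machine: ...


class ControlPlaneProvider(Protocol):
    def configure(self, machine: Machine) -> None: ...


class BootstrapProvider(Protocol):
    def bootstrap(self, machine: Machine) -> None: ...


# Hypothetical in-memory implementations, used only for illustration.
class FakeInfra:
    def provision(self, name: str, role: str) -> Machine:
        return Machine(name=name, role=role, steps=["vm-created"])


class FakeControlPlane:
    def configure(self, machine: Machine) -> None:
        # Only control plane machines receive control plane configuration.
        if machine.role == "control-plane":
            machine.steps.append("control-plane-configured")


class FakeBootstrap:
    def bootstrap(self, machine: Machine) -> None:
        machine.steps.append("kubernetes-installed")


def build_cluster(infra, control_plane, bootstrap, nodes):
    """Run every node through the three layers in the order the article lists them."""
    machines = []
    for name, role in nodes:
        machine = infra.provision(name, role)
        control_plane.configure(machine)
        bootstrap.bootstrap(machine)
        machines.append(machine)
    return machines
```

Replacing `FakeInfra` with a different infrastructure implementation would leave the other two layers unchanged, which is the property the Proxmox integration relies on.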

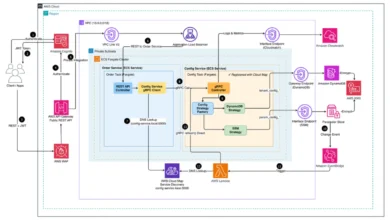

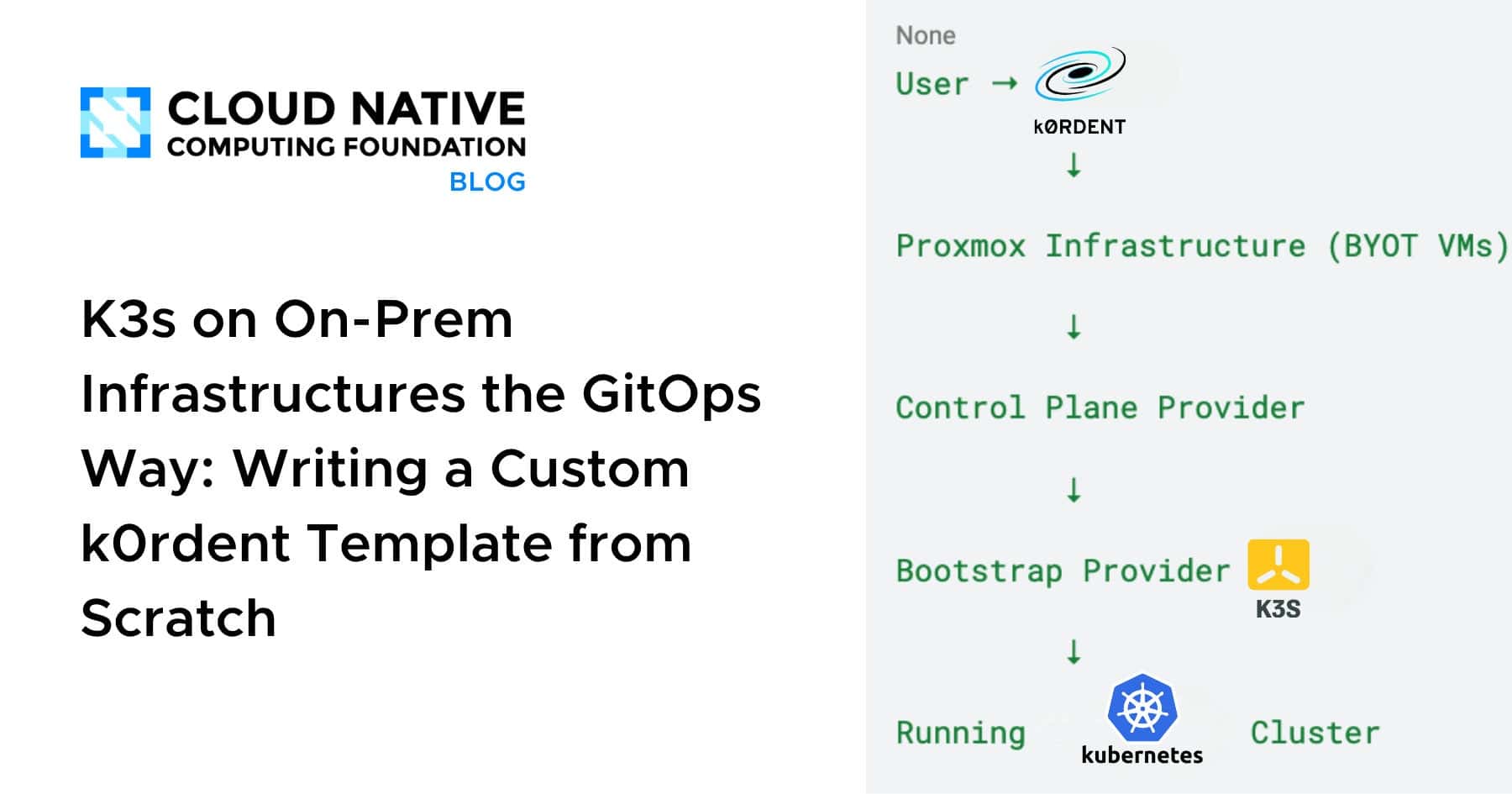

The Architectural Blueprint: A Layered Approach to Cluster Provisioning

The proposed architecture outlines a streamlined, end-to-end flow for deploying K3s clusters on Proxmox, emphasizing automation and declarative principles. At a high level, the process unfolds as follows:

- User Interaction: An operator defines the desired cluster configuration using k0rdent’s declarative manifests.

- k0rdent Orchestration: k0rdent interprets these manifests and initiates the provisioning process.

- Proxmox Infrastructure (BYOT VMs): The custom Infrastructure Provider interacts with Proxmox to create and configure virtual machines based on pre-defined templates.

- Control Plane Provider: Once VMs are available, this layer deploys and manages the Kubernetes control plane components onto the designated control plane nodes.

- Bootstrap Provider (K3s): This layer handles the installation and configuration of K3s on both control plane and worker nodes, effectively bootstrapping the Kubernetes cluster.

- Running Kubernetes Cluster: The final outcome is a fully operational, declaratively managed K3s cluster.

Each layer in this flow is designed to perform a specific function with high efficiency, ensuring a clean and manageable separation of responsibilities.
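As a rough illustration of the first step in this flow, the sketch below builds a k0rdent-style `ClusterDeployment` manifest as plain data. Several details are assumptions rather than facts from the article: the `apiVersion` should be checked against the installed k0rdent release, the template name `proxmox-k3s-standalone` and credential name `proxmox-credential` are hypothetical, and the keys under `config` depend entirely on the template in use.

```python
import json


def cluster_deployment(name, template, credential, control_plane_count, worker_count):
    """Build a ClusterDeployment manifest as a plain dict (desired state as data)."""
    return {
        # Assumed API version; verify against the k0rdent release in use.
        "apiVersion": "k0rdent.mirantis.com/v1beta1",
        "kind": "ClusterDeployment",
        "metadata": {"name": name, "namespace": "kcm-system"},
        "spec": {
            "template": template,      # hypothetical template name
            "credential": credential,  # hypothetical credential name
            # Template-specific values; these key names are assumptions.
            "config": {
                "controlPlaneNumber": control_plane_count,
                "workersNumber": worker_count,
            },
        },
    }


manifest = cluster_deployment(
    name="k3s-onprem",
    template="proxmox-k3s-standalone",
    credential="proxmox-credential",
    control_plane_count=1,
    worker_count=2,
)
# JSON is valid YAML, so the output can be saved to a file and applied with kubectl.
print(json.dumps(manifest, indent=2))
```

The operator only edits the values passed in; everything after that point in the flow is driven by reconciliation rather than by further commands.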

Step 1: The Infrastructure Provider – Customizing for Proxmox with BYOT

A core innovation in this solution is the development of a custom Infrastructure Provider for Proxmox. Given that k0rdent (and by extension, Cluster API) does not ship with native Proxmox support, the team at Improwised Tech created a specialized Helm chart to fill this gap. This chart effectively transforms Proxmox into a first-class citizen within the k0rdent ecosystem.

Why "Bring Your Own Template" (BYOT)?

The strategy hinges on a "Bring Your Own Template" (BYOT) approach. Rather than dynamically building VM images for each provisioning cycle, the solution uses existing, pre-configured Proxmox VM templates. These templates come with cloud-init enabled, SSH access configured, and base operating system packages installed. This BYOT approach offers several advantages:

- Efficiency and Speed: Eliminates the time-consuming process of image creation during provisioning, significantly accelerating cluster deployment.

- Consistency and Repeatability: Ensures that every VM provisioned from the template is identical, reducing configuration drift and enhancing reliability across clusters.

- Reduced Complexity: Separates the concerns of base VM image management from the Kubernetes provisioning logic, simplifying troubleshooting and updates.

- Optimized Resource Utilization: Allows for fine-tuned base images specific to the environment, potentially reducing VM footprint.
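The consistency argument can be made concrete with a short sketch. It does not call the Proxmox API; `VMSpec`, its fields, and the template ID `9000` are hypothetical and only model the idea that every VM starts as a copy of one template and differs only where the operator says so.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class VMSpec:
    name: str
    template_id: int  # Proxmox VM ID of the source template (hypothetical value)
    cores: int
    memory_mib: int
    disk_gib: int


def clone_from_template(base: VMSpec, name: str, **overrides) -> VMSpec:
    """Derive a VM spec from the template, changing only the fields passed in."""
    return replace(base, name=name, **overrides)


base = VMSpec(name="ubuntu-template", template_id=9000, cores=2, memory_mib=4096, disk_gib=32)
worker = clone_from_template(base, "worker-0")
control_plane = clone_from_template(base, "cp-0", cores=4, memory_mib=8192)
```

Because `VMSpec` is frozen, the template itself cannot be modified by accident, so any difference between two VMs has to appear as an explicit override.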

Functionality of the Proxmox Infrastructure Helm Chart:

The custom Helm chart for Proxmox is scoped to manage infrastructure resources only. Its responsibilities include:

- VM Creation and Deletion: Programmatically spinning up and tearing down virtual machines on the Proxmox hypervisor.

- Resource Allocation: Configuring CPU, memory, and storage for each VM.

- Network Configuration: Assigning network interfaces, IP addresses, and DNS settings.

- Host Entry Management: Ensuring proper network discoverability for the VMs within the Proxmox environment.

- Cloud-init Integration: Utilizing cloud-init for initial VM customization post-provisioning.

Crucially, this chart deliberately avoids any Kubernetes-specific logic, maintaining a clear boundary between infrastructure management and cluster orchestration. The Helm charts for this Proxmox provider are publicly available, fostering transparency and community contributions, and can be accessed at: https://github.com/Improwised/charts/tree/main/charts/cluster-api-provider-proxmox.
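To give a sense of what the cloud-init and host entry steps involve, the sketch below renders a minimal `#cloud-config` document for one VM. The keys `hostname`, `manage_etc_hosts`, and `ssh_authorized_keys` are standard cloud-config keys, but whether the chart sets exactly these is an assumption, and `render_user_data` is a hypothetical helper rather than part of the chart.

```python
import json


def render_user_data(hostname: str, ssh_keys: list[str]) -> str:
    """Render a minimal #cloud-config document for a single VM."""
    lines = [
        "#cloud-config",
        # json.dumps yields a double-quoted string, which is also a valid YAML scalar.
        f"hostname: {json.dumps(hostname)}",
        # Ask cloud-init to keep /etc/hosts in sync with the hostname.
        "manage_etc_hosts: true",
        "ssh_authorized_keys:",
    ]
    lines += [f"  - {json.dumps(key)}" for key in ssh_keys]
    return "\n".join(lines) + "\n"


user_data = render_user_data("k3s-worker-0", ["ssh-ed25519 AAAAEXAMPLE ops@example"])
print(user_data)
```

Nothing in this document mentions Kubernetes, which mirrors the boundary the chart keeps between infrastructure and cluster logic.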

Step 2: Orchestrating the Control Plane

Once the necessary virtual machines have been provisioned by the Infrastructure Provider, the Control Plane Provider assumes responsibility. Its primary objective is to transform these raw VMs into functional Kubernetes control plane nodes. This involves a series of critical tasks:

- Installing Control Plane Components: Deploying the essential Kubernetes components such as the API server, scheduler, and controller manager.

- Datastore Configuration: Setting up and configuring the Kubernetes datastore, typically etcd, or leveraging K3s’s embedded SQLite or external database options.

- Certificate Management: Generating and distributing the necessary TLS certificates for secure communication within the cluster.

- Health Monitoring: Ensuring the control plane components are running correctly and are highly available.

In this specific setup, the control plane nodes are deployed directly onto the Proxmox VMs, with all VM-specific details seamlessly flowing from the Infrastructure Provider. This ensures that roles are assigned declaratively, making the cluster’s architecture intentional and transparent, rather than the result of ad-hoc configurations. The integration leverages existing Cluster API patterns to define the control plane’s desired state, which k0rdent then orchestrates on the Proxmox-backed VMs.

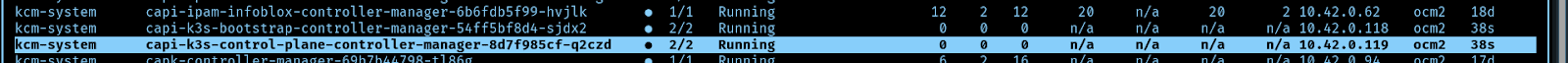

Step 3: Bootstrapping Kubernetes with K3s – The Lightweight Advantage

For the crucial bootstrapping phase, K3s was selected as the Kubernetes distribution. K3s, a CNCF sandbox project, is widely recognized for its minimalist design, making it an ideal choice for resource-constrained environments, edge deployments, and on-premise infrastructure where operational overhead must be minimized. Its advantages include:

- Lightweight Footprint: Significantly smaller binary size and reduced memory requirements compared to upstream Kubernetes.

- Rapid Installation: Designed for quick deployment, often taking seconds to minutes.

- Minimal Dependencies: Fewer external dependencies, simplifying setup and reducing potential conflicts.

- Purpose-Built for Edge and On-Prem: Optimized for environments outside traditional data centers, offering robust performance on smaller hardware.

The integration defines the K3s Bootstrap and Control Plane providers using standard Cluster API custom resources, which k0rdent then uses to manage the K3s lifecycle. The provider definitions are straightforward, pointing to official K3s Cluster API releases:

    # Bootstrap provider: installs and configures K3s on each node
    apiVersion: operator.cluster.x-k8s.io/v1alpha2
    kind: BootstrapProvider
    metadata:
      name: k3s
    spec:
      version: v0.3.0
      fetchConfig:
        url: https://github.com/k3s-io/cluster-api-k3s/releases/v0.3.0/bootstrap-components.yaml
      {{- if .Values.configSecret.name }}
      configSecret:
        name: {{ .Values.configSecret.name }}
        namespace: {{ .Values.configSecret.namespace }}
      {{- end }}
    ---
    # Control plane provider: manages the K3s control plane nodes
    apiVersion: operator.cluster.x-k8s.io/v1alpha2
    kind: ControlPlaneProvider
    metadata:
      name: k3s
    spec:
      version: v0.3.0
      fetchConfig:
        url: https://github.com/k3s-io/cluster-api-k3s/releases/v0.3.0/control-plane-components.yaml
      {{- if .Values.configSecret.name }}
      configSecret:
        name: {{ .Values.configSecret.name }}
        # Falls back to the release namespace when no namespace is set
        namespace: {{ .Values.configSecret.namespace | default .Release.Namespace }}
      {{- end }}

What the Bootstrap Provider Does:

The K3s Bootstrap Provider Helm chart orchestrates the full lifecycle of K3s installation and configuration on the provisioned VMs:

- K3s Installation: Downloads and installs the K3s binary on all designated worker and control plane nodes.

- Node Joining: Configures worker nodes to join the K3s cluster.

- Kubelet Configuration: Sets up and configures the Kubelet on each node, ensuring proper communication with the control plane.

- Cluster State Verification: Validates that Kubernetes components are running and the cluster is healthy.

- Version Management: Ensures the specified K3s version is installed and maintained.

Upon successful completion of this step, a fully functional Kubernetes cluster, powered by K3s, is up and running on the Proxmox virtual machines.
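For readers unfamiliar with what installation and node joining amount to, the sketch below builds the commands an operator would run by hand with the public K3s install script. This is not what the Cluster API K3s provider executes; it generates its own bootstrap data. The environment variables `INSTALL_K3S_VERSION`, `K3S_URL`, and `K3S_TOKEN` are documented by K3s, while the helper `k3s_install_command`, the version string, the address, and the token are illustrative.

```python
import shlex


def k3s_install_command(version, role, server_url=None, token=None):
    """Build the manual install command for a K3s server or agent node."""
    if role not in ("server", "agent"):
        raise ValueError(f"unknown role: {role!r}")
    env = {"INSTALL_K3S_VERSION": version}  # pin the K3s version
    if role == "agent":
        if not (server_url and token):
            raise ValueError("agents need server_url and token to join a cluster")
        # Setting K3S_URL makes the install script configure the node as an agent.
        env["K3S_URL"] = server_url
        env["K3S_TOKEN"] = token
    assignments = " ".join(f"{key}={shlex.quote(value)}" for key, value in env.items())
    return f"curl -sfL https://get.k3s.io | {assignments} sh -"


server_cmd = k3s_install_command("v1.30.2+k3s1", "server")
agent_cmd = k3s_install_command(
    "v1.30.2+k3s1", "agent", server_url="https://10.0.0.10:6443", token="example-token"
)
print(server_cmd)
print(agent_cmd)
```

The bootstrap provider removes the need to run such commands per node: the same inputs (version, role, join endpoint) are derived from the declared cluster state.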

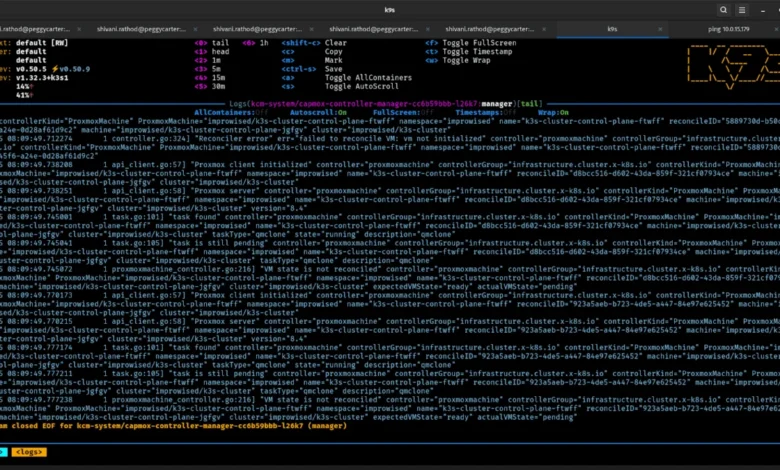

The Power of Continuous Reconciliation with k0rdent

The true strength of this integrated approach lies in k0rdent’s continuous reconciliation capabilities. Unlike one-off scripts, k0rdent constantly monitors the actual state of the infrastructure and Kubernetes clusters against the desired state defined in the declarative manifests. This ensures that the system is self-healing and self-managing:

- State Monitoring: k0rdent continuously observes the infrastructure (Proxmox VMs) and the Kubernetes cluster (K3s components).

- Drift Detection: Any deviation from the declared desired state (e.g., a VM failure, a K3s component crash, an unauthorized configuration change) is detected.

- Automated Remediation: k0rdent automatically triggers actions to bring the system back into compliance with the desired state, whether that means recreating a VM, restarting a service, or reconfiguring a component.

- Scaling and Updates: Changes to the desired state (e.g., adding more worker nodes, upgrading K3s) are applied automatically and safely.

The result is a fully declarative and managed K3s cluster on the on-premise Proxmox environment. This eliminates manual interventions for scaling, updates, and recovery, drastically reducing operational burden and human error.
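The reconciliation idea can be reduced to a few lines. The sketch below is a simplified model, not k0rdent's controller code: state is a hypothetical mapping from node name to K3s version, `plan` detects drift, and `reconcile` applies the resulting actions to an in-memory copy of the observed state.

```python
def plan(desired: dict[str, str], observed: dict[str, str]) -> list[tuple[str, str]]:
    """Compare desired and observed node-to-version maps and list the needed actions."""
    actions = []
    for name, version in sorted(desired.items()):
        if name not in observed:
            actions.append(("create", name))   # missing node, e.g. after a VM failure
        elif observed[name] != version:
            actions.append(("replace", name))  # wrong version, e.g. during an upgrade
    for name in sorted(observed):
        if name not in desired:
            actions.append(("delete", name))   # node no longer declared
    return actions


def reconcile(desired: dict[str, str], observed: dict[str, str]) -> list[tuple[str, str]]:
    """Run one reconciliation pass, mutating the in-memory observed state."""
    actions = plan(desired, observed)
    for verb, name in actions:
        if verb == "delete":
            del observed[name]
        else:  # "create" and "replace" both end with the node at the desired version
            observed[name] = desired[name]
    return actions


desired = {"cp-0": "v1", "w-0": "v1", "w-1": "v1"}
observed = {"cp-0": "v1", "w-0": "v0", "w-9": "v1"}
first_pass = reconcile(desired, observed)
second_pass = reconcile(desired, observed)
```

Scaling and upgrades fall out of the same loop: adding `w-2` to `desired` or changing a version produces new actions on the next pass, while an unchanged cluster produces none.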

Tangible Outcomes: A New Era for On-Premise Deployments

The successful implementation of this solution yields significant benefits for organizations operating on-premise infrastructure. After the provisioning and reconciliation process stabilizes, users are left with:

- A Declarative Infrastructure Layer: Proxmox VMs are managed through code, providing version control, auditability, and repeatability.

- An Automated K3s Cluster: The Kubernetes cluster’s lifecycle, from installation to updates and scaling, is fully automated.

- Enhanced Repeatability: The entire setup can be replicated identically across different Proxmox instances or even different data centers with minimal effort.

- Simplified Scaling: Adding or removing nodes becomes a matter of updating a configuration file, rather than a complex manual process.

- Increased Reliability: The continuous reconciliation loop ensures that the cluster remains in its desired state, automatically recovering from many types of failures.

- Reduced Operational Overhead: Freed from the drudgery of manual tasks, operations teams can focus on higher-value activities.

Scaling the cluster is no longer a daunting weekend project, and rebuilding it from scratch is no longer a high-risk exercise. Both become configuration changes that k0rdent reconciles. This approach transforms on-premise Kubernetes management from a "duct tape and bash scripts" endeavor into an automated, reproducible operation. With k0rdent’s BYOT approach, on-premise infrastructure becomes a first-class citizen in the cloud-native landscape, treated with the same declarative rigor as public cloud resources. The ability to build custom templates for infrastructure types that are not supported out of the box shows how extensible the methodology is.

Broader Implications and Future Outlook

This integration carries significant implications for the broader cloud-native ecosystem, particularly for hybrid cloud strategies and edge computing initiatives. Industry reports indicate that a substantial percentage of enterprise workloads will continue to reside on-premise or at the edge, making robust, automated solutions for these environments critically important. The combination of K3s’s efficiency and k0rdent’s declarative power provides a compelling blueprint for deploying and managing Kubernetes in these traditionally challenging locations.

This development underscores a growing trend towards specialized Kubernetes distributions and extensible management frameworks like Cluster API. It demonstrates that advanced, declarative cluster lifecycle management is not exclusive to hyperscale cloud providers but can be effectively implemented in private data centers, offering similar levels of automation and resilience. Industry observers suggest that solutions like this will accelerate the adoption of cloud-native principles by organizations with significant legacy infrastructure, enabling them to modernize their operations without a complete migration to public clouds. Furthermore, the "build your own provider" ethos championed by k0rdent and Cluster API empowers communities and enterprises to tailor solutions to their unique infrastructure requirements, fostering innovation and reducing vendor lock-in. This collaborative effort by Improwised Tech and a CNCF Ambassador exemplifies how community-driven innovation continues to push the boundaries of what’s possible in cloud-native infrastructure, making Kubernetes more accessible and manageable across all environments.