Anthropic’s Claude Opus 4.7 Model Now Available on Amazon Bedrock, Revolutionizing Enterprise AI Workflows

Amazon Web Services (AWS) today announced the general availability of Anthropic’s Claude Opus 4.7 model in Amazon Bedrock, a significant step for enterprise-grade generative artificial intelligence. Anthropic describes Opus 4.7 as its most intelligent Opus model to date, built for demanding business functions such as complex coding tasks, long-running agentic workflows, and professional applications. The rollout strengthens Amazon Bedrock’s position as a leading platform for accessing cutting-edge foundation models (FMs) and underscores the ongoing strategic partnership between AWS and Anthropic, a prominent AI safety and research company.

Claude Opus 4.7 runs on Amazon Bedrock’s next-generation inference engine, which is built to provide enterprise-grade infrastructure for production-scale workloads. The engine uses new scheduling and scaling logic that dynamically allocates compute capacity to requests, which AWS says improves availability and responsiveness. Dynamic allocation lets the engine serve steady-state workloads efficiently while also accommodating services that need to scale rapidly, keeping performance consistent under fluctuating demand. A cornerstone of this infrastructure is data privacy through "zero operator access": customer prompts and generated responses are never visible to Anthropic or AWS operators, which safeguards sensitive enterprise data.

The Evolution of Generative AI on Amazon Bedrock

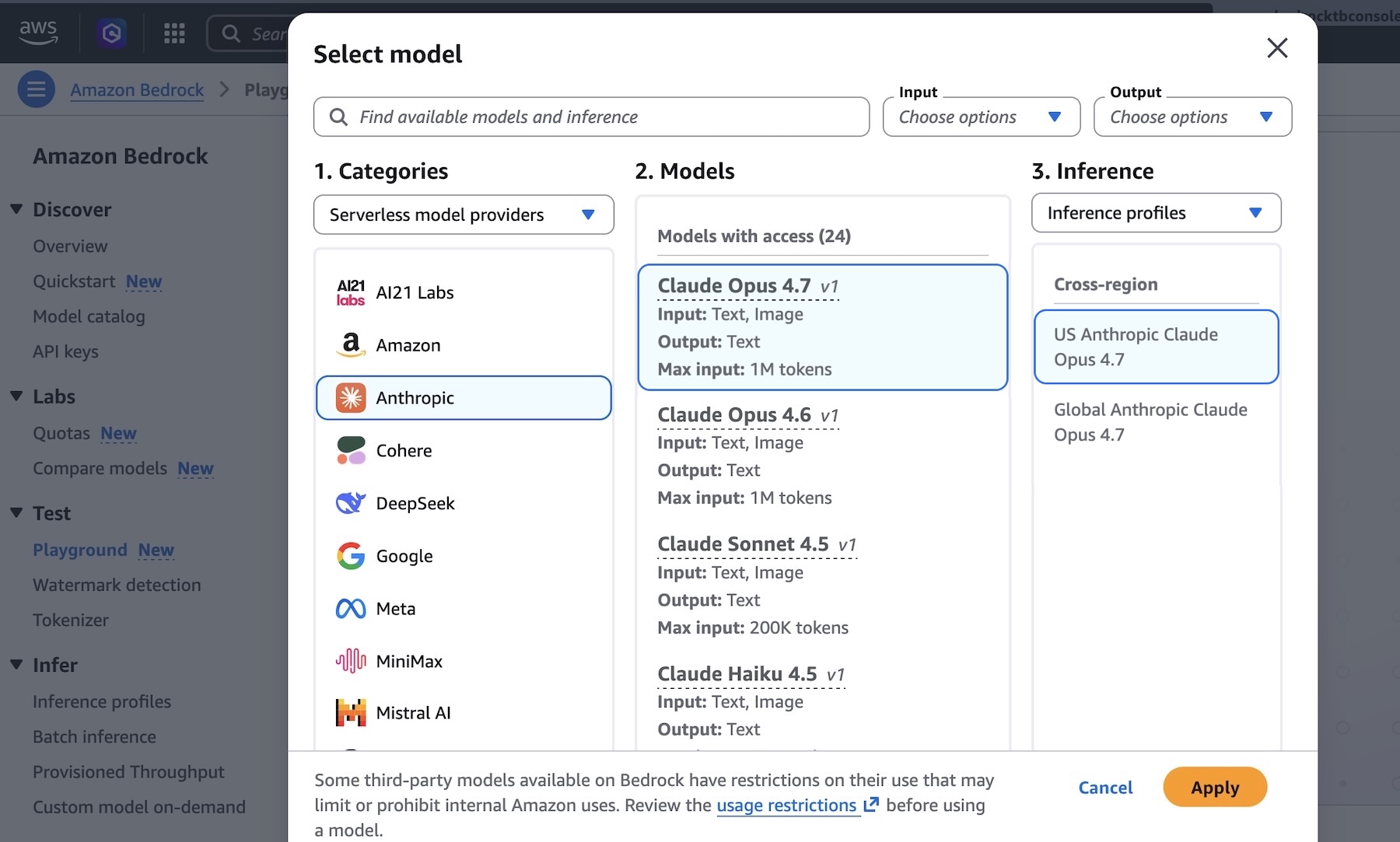

The introduction of Claude Opus 4.7 is a milestone in generative AI’s maturation and its growing integration into enterprise operations. Amazon Bedrock, announced in April 2023 and made generally available in September 2023, is a fully managed service designed to broaden access to FMs from Amazon and leading AI companies such as Anthropic, AI21 Labs, Cohere, Meta, Mistral AI, and Stability AI. Its primary goal is to help developers and businesses build and scale generative AI applications with security, privacy, and responsible AI practices built in.

Before Opus 4.7, Amazon Bedrock had already offered other models from Anthropic’s Claude family, including Claude 2.1 and the Claude 3 family (Haiku, Sonnet, and Opus). This steady stream of additions reflects how quickly the generative AI field moves, with new, more capable models released at increasing frequency. The AWS and Anthropic partnership has been central to Bedrock’s offerings: Amazon has committed substantial investments to Anthropic, and Anthropic has made AWS its primary cloud provider for critical workloads, including safety research and future model development. The relationship gives AWS customers early access to Anthropic’s new models.

Unprecedented Capabilities for Enterprise Workflows

According to Anthropic, Claude Opus 4.7 delivers substantial improvements across a range of production workflows critical for modern enterprises, spanning agentic coding, sophisticated knowledge work, advanced visual understanding, and the execution of complex, long-running tasks. Anthropic reports that the model handles ambiguity better and solves problems more thoroughly. It also follows instructions more precisely, a crucial attribute for automating intricate business processes where accuracy is paramount.

For developers and organizations leveraging AI for software development, Opus 4.7’s advancements in agentic coding are particularly noteworthy. This refers to the model’s ability to not just generate code snippets, but to act as an intelligent agent capable of understanding broader architectural goals, debugging, refactoring, and even planning multi-step coding projects. This could significantly accelerate development cycles, improve code quality, and free human developers to focus on higher-level strategic challenges.

In knowledge work, the model’s enhanced understanding and reasoning capabilities translate into more accurate summaries, more insightful analyses of complex documents, and improved content generation that aligns closely with specific brand guidelines or technical requirements. Its ability to handle long-running tasks suggests a capacity to manage extended conversations, multi-document analysis, or sequential decision-making processes, which are common in customer service, legal review, and research domains. While the official announcement highlights these qualitative improvements, industry analysts anticipate that "most intelligent" implies superior performance on a range of established AI benchmarks, such as MMLU (Massive Multitask Language Understanding), GSM8K (grade school math problems), and HumanEval (code generation and completion), compared to its predecessors.

Statements from Key Stakeholders

An AWS spokesperson, speaking on the condition of anonymity, emphasized the strategic importance of this launch: "The integration of Claude Opus 4.7 on Amazon Bedrock underscores AWS’s unwavering commitment to providing our customers with access to the most advanced and secure AI models available. Our next-generation inference engine, with its zero operator access and dynamic scaling capabilities, ensures that enterprises can deploy powerful AI solutions with confidence, knowing their data is protected and their applications can scale effortlessly to meet demand. This collaboration with Anthropic is a testament to our shared vision of empowering builders with cutting-edge, responsible AI."

A representative from Anthropic highlighted the company’s continuous pursuit of AI excellence: "Claude Opus 4.7 represents a significant leap forward in our mission to build helpful, honest, and harmless AI. By enhancing its ability to handle ambiguity, solve problems thoroughly, and follow instructions precisely, we are providing a tool that can truly augment human intelligence across a vast array of professional domains. Our partnership with AWS allows us to bring this advanced capability to a broad enterprise audience through the secure and scalable Amazon Bedrock platform, fostering innovation while upholding our core principles of AI safety."

Industry analysts have also weighed in on the implications. Dr. Anya Sharma, a lead AI researcher at a prominent tech consultancy, remarked, "The release of Claude Opus 4.7 on Bedrock is a strategic move that strengthens AWS’s competitive standing in the rapidly evolving generative AI landscape. The focus on enterprise-grade privacy with ‘zero operator access’ addresses a critical concern for large organizations, making Bedrock a more compelling choice for deploying sensitive AI workloads. This will likely accelerate the adoption of advanced LLMs in sectors like finance, healthcare, and government, where data security is paramount."

The Backbone: Amazon Bedrock’s Next-Generation Inference Engine

Much of Claude Opus 4.7’s performance on Bedrock depends on the infrastructure that serves it, and the next-generation inference engine is a key differentiator. Its new scheduling and scaling logic dynamically allocates capacity to requests, which translates into several benefits for customers:

- Improved Availability: By intelligently managing resources, the engine minimizes latency and downtime, ensuring that AI applications remain highly responsive and available even during peak usage.

- Efficient Resource Utilization: Dynamic allocation optimizes the use of underlying compute resources, potentially leading to cost efficiencies for customers as they only pay for the capacity they truly need.

- Rapid Scaling: For applications experiencing sudden spikes in demand, the engine can quickly provision additional capacity, preventing performance degradation and ensuring a seamless user experience. This is vital for use cases like viral marketing campaigns or seasonal e-commerce traffic.

- Zero Operator Access: This privacy feature is a significant selling point for enterprises. It means that neither AWS nor Anthropic personnel can access the content of customer prompts or the generated responses. Keeping sensitive or proprietary information out of operators’ reach helps organizations meet stringent compliance and regulatory requirements, and this level of data isolation lets them use generative AI for mission-critical tasks without compromising intellectual property or customer privacy.
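The dynamic-allocation idea behind the first three benefits can be illustrated with a toy autoscaling rule. This is a hypothetical sketch only: AWS has not published the engine’s scheduling logic, and the class and function names and every number below (`ScalingPolicy`, `target_replicas`, 50 requests per replica, 20% headroom, a floor of 2 and a ceiling of 100 replicas) are illustrative assumptions, not Bedrock settings.

```python
import math
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    """Hypothetical knobs for a toy scheduler; not Bedrock's real configuration."""

    requests_per_replica: int = 50  # concurrent requests one replica can serve
    min_replicas: int = 2           # warm floor for steady-state traffic
    max_replicas: int = 100         # ceiling when bursting
    headroom_pct: int = 20          # spare capacity kept for sudden spikes


def target_replicas(in_flight_requests: int, policy: ScalingPolicy) -> int:
    """Return the replica count a toy scheduler would allocate for the current load."""
    # Capacity needed for current load plus headroom, rounded up to whole replicas.
    needed = math.ceil(
        in_flight_requests * (100 + policy.headroom_pct)
        / (100 * policy.requests_per_replica)
    )
    # Never drop below the steady-state floor or exceed the burst ceiling.
    return max(policy.min_replicas, min(policy.max_replicas, needed))


if __name__ == "__main__":
    policy = ScalingPolicy()
    for load in (10, 400, 10_000):
        print(f"{load} in-flight requests -> {target_replicas(load, policy)} replicas")
```

With these made-up numbers, light traffic stays at the two-replica floor, 400 concurrent requests map to 10 replicas, and a 10,000-request burst is capped at 100. A production scheduler would likely also weigh latency targets and warm-up time, which this toy ignores.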

Practical Implementation for Developers

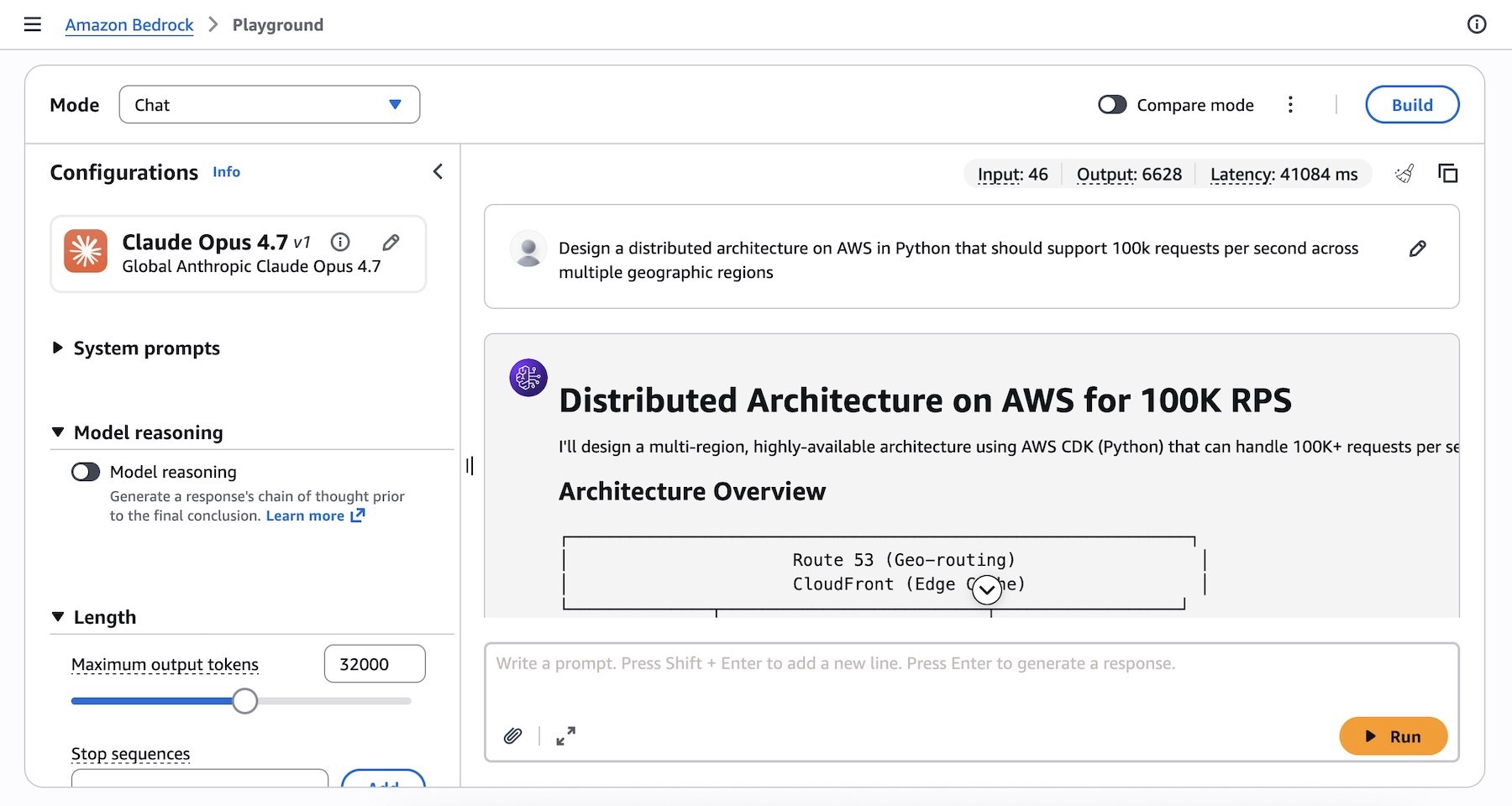

For developers eager to leverage Claude Opus 4.7, Amazon Bedrock provides multiple access points and comprehensive tooling. The model is readily accessible through the Amazon Bedrock console, where users can navigate to the "Playground" under the "Test" menu, select "Claude Opus 4.7," and begin experimenting with complex prompts.

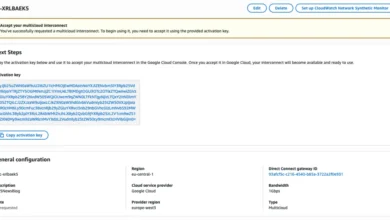

Programmatic access is also streamlined, catering to various development preferences. Developers can interact with the model using the Anthropic Messages API, which can be invoked through the bedrock-runtime endpoint via the dedicated anthropic[bedrock] SDK package for a highly integrated experience. Alternatively, the model can be called using the AWS Command Line Interface (AWS CLI) and the AWS SDKs (available in multiple programming languages) via Bedrock’s Invoke and Converse APIs.
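The Converse API offers one message format across Bedrock models. Below is a minimal sketch using the AWS SDK for Python (boto3), assuming credentials with Bedrock permissions are configured and reusing the model ID from the examples in this article. The helper names `build_converse_request` and `extract_text` are hypothetical, and the 1,024-token default is an arbitrary choice.

```python
"""Minimal sketch: calling Claude Opus 4.7 through Bedrock's Converse API with boto3.

Assumes AWS credentials with Bedrock permissions are configured.
"""

# Model ID as given in this article's examples.
MODEL_ID = "us.anthropic.claude-opus-4-7"


def build_converse_request(prompt: str, max_tokens: int = 1024) -> dict:
    """Build keyword arguments for the bedrock-runtime client's converse() call."""
    return {
        "modelId": MODEL_ID,
        # Converse wraps each message's content in a list of typed blocks.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def extract_text(response: dict) -> str:
    """Join the text blocks of a Converse response, skipping non-text blocks."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


if __name__ == "__main__":
    import boto3  # imported here so the helpers above work without boto3 installed

    client = boto3.client("bedrock-runtime", region_name="us-east-1")
    request = build_converse_request("Explain zero operator access in two sentences.")
    response = client.converse(**request)
    print(extract_text(response))
```

Keeping request construction and response parsing in plain functions makes them testable without network access; only the `__main__` block calls AWS.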

For instance, a developer could use the anthropic[bedrock] SDK to ask the model to design a distributed architecture for a high-traffic application:

```python
# Requires: pip install "anthropic[bedrock]"
from anthropic import AnthropicBedrockMantle

# Initialize the Bedrock Mantle client (uses SigV4 auth automatically)
mantle_client = AnthropicBedrockMantle(aws_region="us-east-1")

# Create a message using the Messages API
message = mantle_client.messages.create(
    model="us.anthropic.claude-opus-4-7",
    max_tokens=32000,
    messages=[
        {
            "role": "user",
            "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions",
        }
    ],
)

# The response content is a list of blocks; print the text of the first one
print(message.content[0].text)
```

Similarly, the AWS CLI offers a direct method for invocation:

aws bedrock-runtime invoke-model

--model-id us.anthropic.claude-opus-4-7

--region us-east-1

--body '"anthropic_version":"bedrock-2023-05-31", "messages": ["role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions."], "max_tokens": 32000'

--cli-binary-format raw-in-base64-out

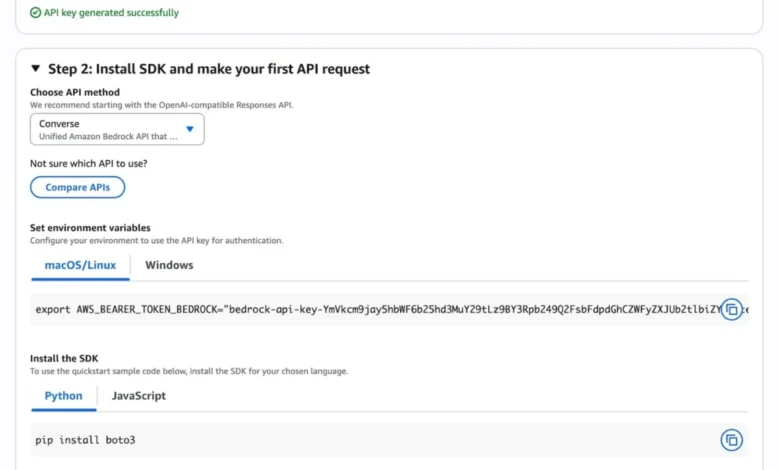

invoke-model-output.txtFurthermore, Amazon Bedrock supports "Adaptive thinking" with Claude Opus 4.7. This advanced feature allows Claude to dynamically allocate thinking token budgets based on the complexity of each request. For simpler queries, it uses fewer resources, while for highly complex problems requiring deeper reasoning, it intelligently expands its processing capacity. This optimization improves efficiency and ensures that the model provides the most intelligent and thorough responses possible without over-provisioning for simpler tasks. AWS also offers a "Quickstart" guide within the console, enabling developers to generate short-term API keys for testing purposes and access sample code for various API methods, including OpenAI-compatible responses, to expedite their first API calls.
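Because the CLI writes the raw Messages API response to `invoke-model-output.txt`, a short script is a convenient way to read the answer back. The sketch below is an illustrative helper, not official tooling: it assumes the file holds the standard Anthropic Messages response JSON, and it keeps only blocks whose `type` is `"text"`, since responses produced with thinking enabled may also contain `"thinking"` blocks ahead of the answer. The function names are hypothetical.

```python
import json
from pathlib import Path


def text_from_messages_response(body: dict) -> str:
    """Concatenate only the "text" content blocks of an Anthropic Messages response.

    When thinking is active, the content list may also hold "thinking" blocks,
    so indexing content[0] is not guaranteed to return the answer text.
    """
    return "".join(
        block.get("text", "")
        for block in body.get("content", [])
        if block.get("type") == "text"
    )


def read_invoke_model_output(path: str = "invoke-model-output.txt") -> str:
    """Load the JSON body that `aws bedrock-runtime invoke-model` wrote to disk."""
    body = json.loads(Path(path).read_text(encoding="utf-8"))
    return text_from_messages_response(body)


if __name__ == "__main__":
    print(read_invoke_model_output())
```

Filtering on the block type rather than on position keeps the same script working whether or not the model spent any thinking tokens on a given request.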

Broader Impact and Implications

The integration of Claude Opus 4.7 into Amazon Bedrock carries significant implications for the broader generative AI ecosystem and enterprise digital transformation.

- Accelerated Enterprise AI Adoption: The combination of a highly intelligent model and a secure, scalable, and privacy-focused infrastructure will likely spur greater adoption of generative AI within regulated industries and large enterprises. The "zero operator access" feature particularly addresses a major hurdle for many organizations hesitant to expose proprietary data to third-party AI services.

- Enhanced Developer Productivity: With more powerful models and user-friendly access methods, developers can build sophisticated AI applications more quickly and efficiently. This could lead to a proliferation of innovative solutions across various sectors.

- Competitive Landscape Shift: This release further intensifies the competition among major cloud providers. By offering a top-tier model from Anthropic, AWS strengthens Bedrock’s appeal against rivals like Microsoft’s Azure OpenAI Service and Google Cloud’s Vertex AI, both of which also offer access to leading FMs. The continuous race to integrate the "best" models and provide the most robust infrastructure benefits end-users by driving innovation and choice.

- Advancing Responsible AI: Anthropic’s foundational commitment to AI safety and "Constitutional AI" principles, combined with AWS’s emphasis on privacy and security, creates a compelling offering for organizations prioritizing responsible AI deployment. This reinforces the idea that powerful AI can be developed and used ethically.

- Future of Work: The model’s capabilities in agentic coding and long-running tasks suggest a future where AI systems can take on more autonomous and complex roles, collaborating with human professionals rather than merely assisting them. This could reshape workflows in software engineering, legal services, scientific research, and more.

Availability and Next Steps

Anthropic’s Claude Opus 4.7 model is immediately available in several AWS Regions, including US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). AWS has indicated that customers should consult the official Bedrock documentation for future updates on regional availability. Detailed pricing information for using Claude Opus 4.7 on Amazon Bedrock can be found on the Amazon Bedrock pricing page.
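Deployment scripts can fail fast on a Region mismatch by encoding the launch list as a lookup table. This is a hypothetical helper (`LAUNCH_REGIONS` and `launch_region_name` are illustrative names). The Region codes are the standard AWS codes for the four Regions named above, but the table is a snapshot: the announcement says "including", so the real list may already be longer, and an unlisted code should prompt a documentation check rather than a hard failure.

```python
from typing import Optional

# Launch Regions named in the announcement, keyed by standard AWS Region code.
# Snapshot only: availability may be broader and will change over time.
LAUNCH_REGIONS = {
    "us-east-1": "US East (N. Virginia)",
    "ap-northeast-1": "Asia Pacific (Tokyo)",
    "eu-west-1": "Europe (Ireland)",
    "eu-north-1": "Europe (Stockholm)",
}


def launch_region_name(region_code: str) -> Optional[str]:
    """Return the display name if the code is a listed launch Region, else None."""
    return LAUNCH_REGIONS.get(region_code)


if __name__ == "__main__":
    for code in ("eu-north-1", "us-west-2"):
        name = launch_region_name(code)
        if name is None:
            print(f"{code}: not in the launch list; check the Bedrock documentation")
        else:
            print(f"{code}: {name}")
```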

AWS encourages users to explore the capabilities of Claude Opus 4.7 in the Amazon Bedrock console today and provide feedback through AWS re:Post for Amazon Bedrock or via their established AWS Support contacts. The original announcement, authored by Channy, noted an update on April 17, 2026, made to ensure the accuracy of its code samples and CLI commands.