Anthropic’s Claude Opus 4.7 Now Available on Amazon Bedrock, Elevating Enterprise AI Capabilities

Amazon Web Services (AWS) today announced the general availability of Anthropic’s Claude Opus 4.7 model on Amazon Bedrock, marking a significant advancement for enterprise-grade artificial intelligence applications. This latest iteration of Anthropic’s flagship large language model (LLM) is touted as its most intelligent Opus model to date, promising substantial improvements in performance across critical domains such as advanced coding, long-running autonomous agents, and complex professional workflows. The integration underscores AWS’s commitment to providing a diverse selection of leading foundation models within a secure, scalable, and enterprise-ready environment.

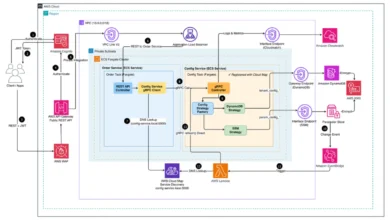

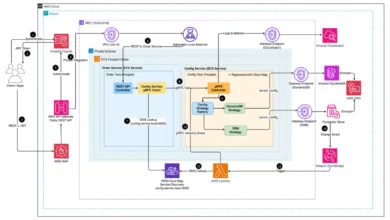

Claude Opus 4.7 on Amazon Bedrock runs on Bedrock’s next-generation inference engine, an architectural change designed to provide robust infrastructure for demanding production workloads. The engine uses new scheduling and scaling logic that dynamically allocates compute capacity to incoming requests. This improves availability, particularly for steady-state workloads, while leaving room for services that experience rapid spikes in demand. The engine also provides a "zero operator access" guarantee: customer prompts and responses remain private and are never visible to Anthropic or AWS operators. This commitment to data privacy matters most for enterprises that handle sensitive information or operate in regulated industries, and it addresses a key concern in the adoption of generative AI.

According to Anthropic, Claude Opus 4.7 delivers improvements across the workflows that teams commonly run in production: agentic coding, knowledge work, visual understanding, and intricate, long-running tasks. The model is better at navigating ambiguity, more thorough in its problem-solving, and more precise in following complex instructions. This is a marked upgrade from its predecessor, Opus 4.6, though developers are advised that some prompting changes and harness adjustments may be necessary to take full advantage of the new model. Anthropic has published a prompting guide to help developers adapt their prompts for Opus 4.7.

The Evolution of Amazon Bedrock and Anthropic’s Strategic Partnership

Amazon Bedrock, first introduced in April 2023, has rapidly evolved into a pivotal platform in the enterprise AI landscape. It was conceived to simplify the development and deployment of generative AI applications by offering access to a variety of foundation models from leading AI companies, alongside Amazon’s own models like Amazon Titan. The platform’s core value proposition lies in its fully managed nature, abstracting away the complexities of infrastructure provisioning, scaling, and maintenance. This allows businesses to focus on building innovative applications rather than managing the underlying AI infrastructure.

Anthropic, founded by former OpenAI researchers, has emerged as a significant player in the AI ethics and safety space, developing powerful LLMs with a strong emphasis on "Constitutional AI" – a methodology designed to align AI models with human values. The partnership between AWS and Anthropic has deepened considerably since Bedrock’s inception. AWS has made substantial investments in Anthropic, solidifying a strategic collaboration that sees Anthropic’s cutting-edge models consistently integrated into the Bedrock ecosystem. This synergy benefits both parties: AWS enriches its Bedrock offering with some of the most advanced LLMs available, while Anthropic gains unparalleled access to AWS’s vast global infrastructure and extensive enterprise customer base. This mutually beneficial relationship is a testament to the accelerating pace of innovation and collaboration shaping the generative AI market.

The continuous integration of new and improved models like Claude Opus 4.7 reflects a broader trend of rapid iteration and competition within the AI industry. As enterprises increasingly seek to harness the power of generative AI for diverse use cases—from content generation and code assistance to advanced analytics and customer service—the demand for robust, secure, and highly performant foundation models has skyrocketed. Bedrock’s strategy of offering a "model playground" allows businesses to experiment with different models, fine-tune them with their proprietary data, and deploy them with confidence, knowing that the underlying infrastructure meets enterprise security and scalability standards.

Technical Accessibility and Developer Empowerment

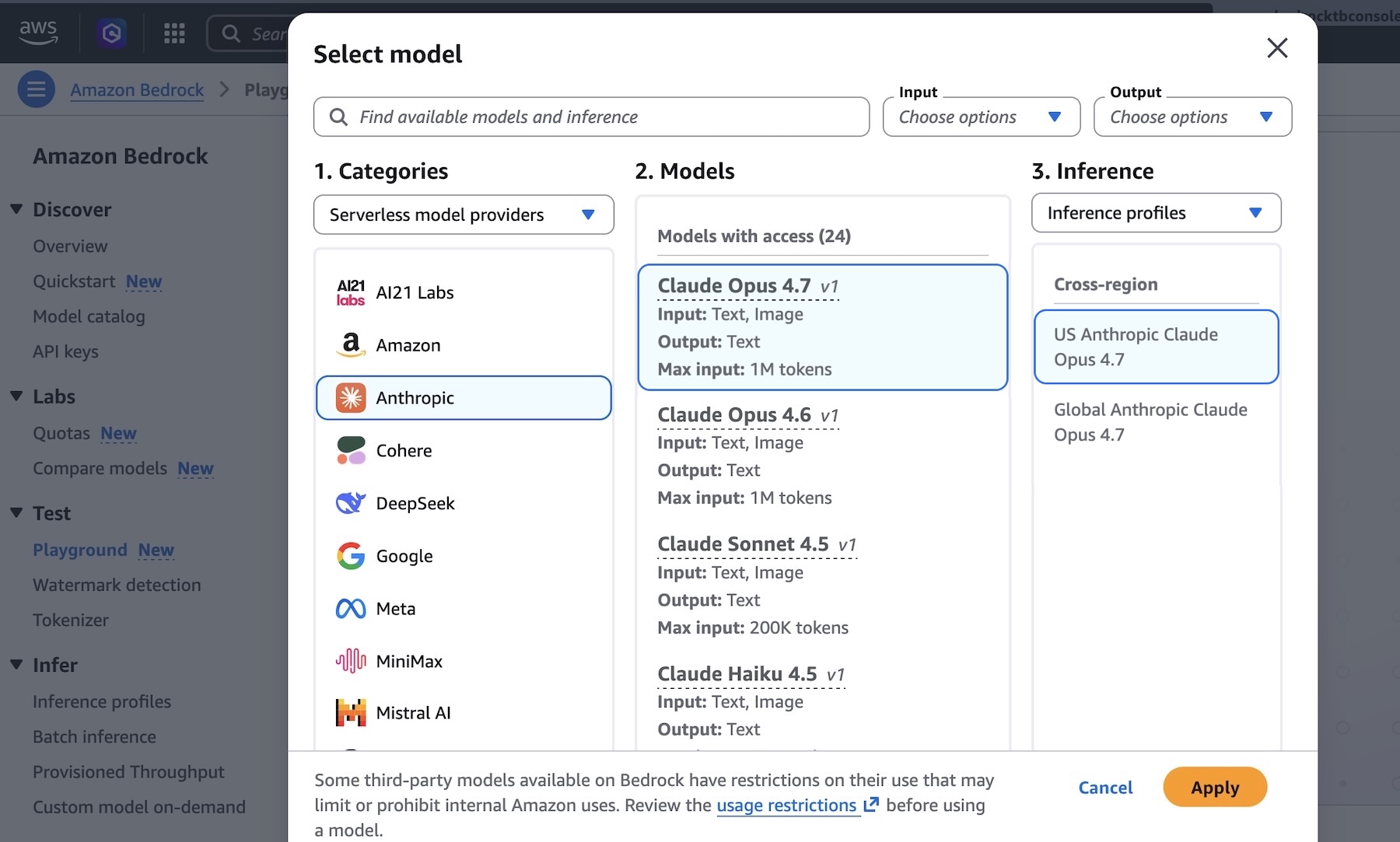

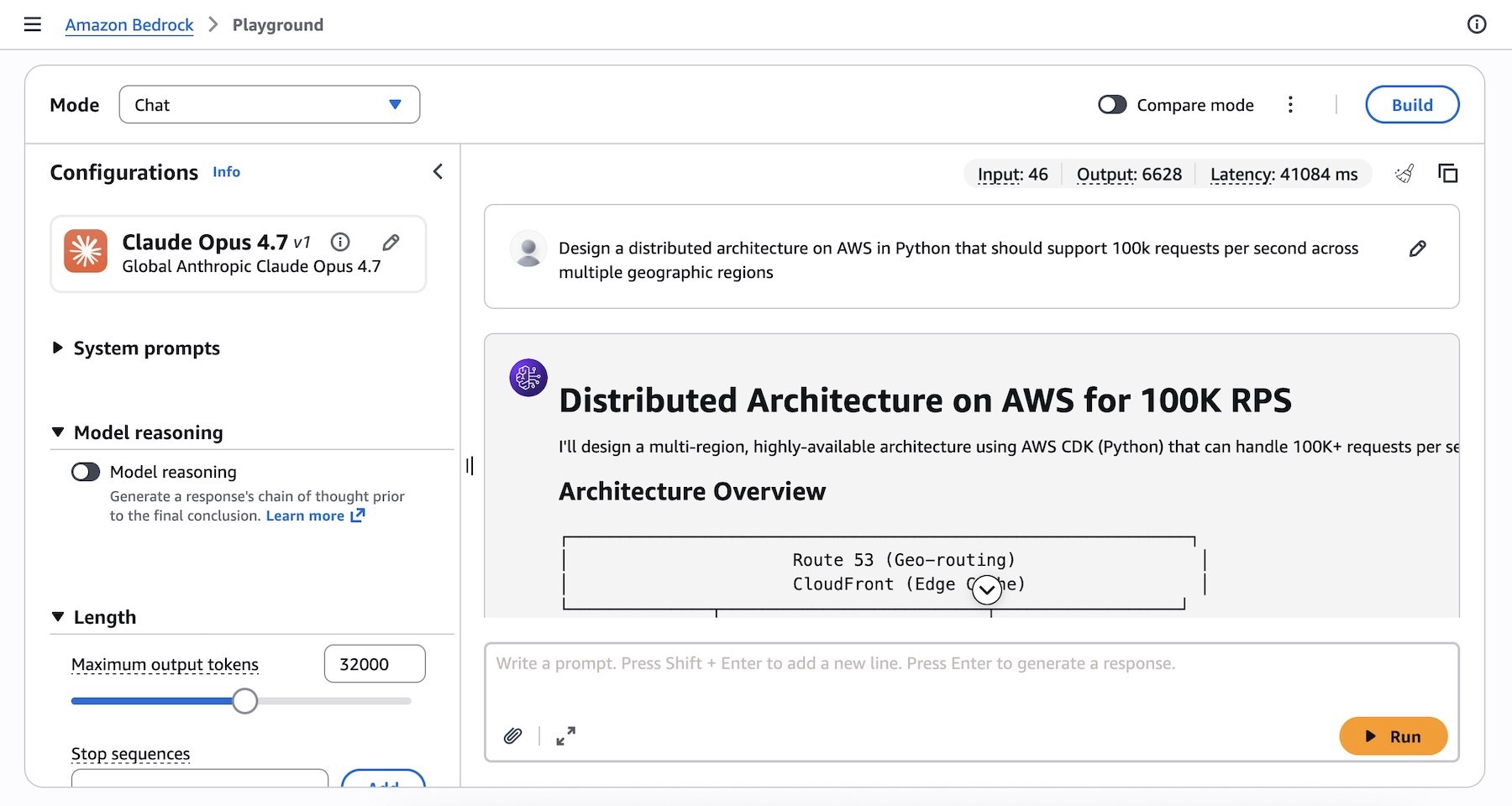

Developers can try Claude Opus 4.7 in the Amazon Bedrock console. In the "Playground" environment, users can select Claude Opus 4.7 and experiment with complex prompts, such as asking the model to design, in Python, a distributed architecture on AWS that can handle 100,000 requests per second across multiple geographic regions. This hands-on approach supports rapid prototyping and iterative development.

Beyond the console, programmatic access to Claude Opus 4.7 is available in several ways, depending on developer preference and integration requirements. The Anthropic Messages API can be called directly through the bedrock-runtime endpoint using the anthropic[bedrock] SDK package, which offers a streamlined experience for Python developers. For those who prefer the AWS ecosystem, the Invoke and Converse APIs on bedrock-runtime are accessible through the AWS Command Line Interface (AWS CLI) and the AWS SDKs, which cover a range of programming languages and fit into existing AWS workflows.
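As a rough illustration of the AWS SDK path, the sketch below builds a Converse request for boto3 and, separately, sends it. It is not taken from the announcement: the helper names `build_converse_request` and `converse` are hypothetical, the Region is an assumption, and the model ID is the one quoted elsewhere in this article. The network call needs boto3 and valid AWS credentials, so it is kept in its own function and is not run on import.

```python
from typing import Any

MODEL_ID = "us.anthropic.claude-opus-4-7"  # model ID as quoted in this article
REGION = "us-east-1"  # assumption: US East (N. Virginia)


def build_converse_request(prompt: str, max_tokens: int = 1024) -> dict[str, Any]:
    """Build keyword arguments for the bedrock-runtime Converse API."""
    return {
        "modelId": MODEL_ID,
        # Converse messages carry a list of content blocks per turn.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def converse(prompt: str) -> str:
    """Send one user turn and return the first text block of the reply.

    Requires boto3 and AWS credentials with access to the model.
    """
    import boto3  # imported lazily so the request builder works without it

    client = boto3.client("bedrock-runtime", region_name=REGION)
    response = client.converse(**build_converse_request(prompt))
    blocks = response["output"]["message"]["content"]
    # Skip any non-text blocks and return the first text one.
    return next(block["text"] for block in blocks if "text" in block)
```

Separating request construction from the call makes it possible to inspect or log the payload before any tokens are billed.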

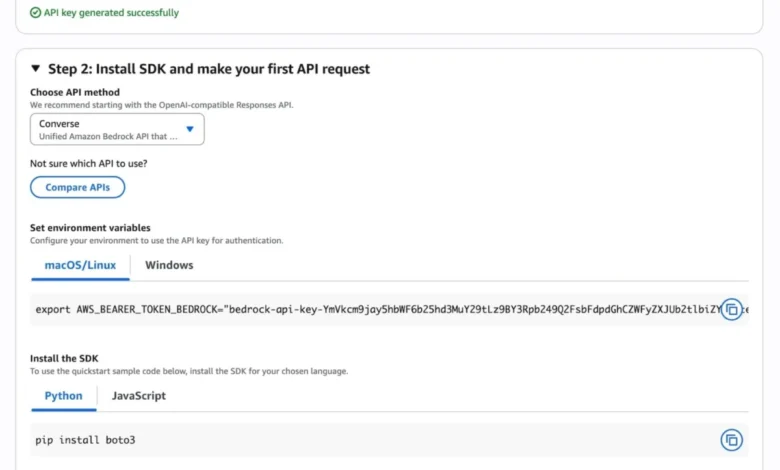

For initial API calls, the Bedrock console provides a "Quickstart" option. After defining a use case, developers can generate a short-term API key for authentication and retrieve sample code snippets for various API methods, including the OpenAI-compatible Responses API, to make inference requests with the model.

Illustrative code examples demonstrate the ease of integrating Claude Opus 4.7 into applications. Using the anthropic[bedrock] SDK package, a Python snippet can initialize a client, define the model ID (us.anthropic.claude-opus-4-7), set max_tokens, and send a message. Similarly, AWS CLI commands offer a direct way to invoke the model, specifying the model ID, region, and the JSON-formatted body containing the anthropic_version, messages, and max_tokens. These examples underscore the platform’s commitment to developer-friendliness and rapid deployment.
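The request body described above can be sketched in Python as follows. This is a minimal reconstruction, not the snippet from the original announcement: the function names are hypothetical, the default Region is an assumption, and the `anthropic_version` value is the one Bedrock has documented for earlier Claude models, assumed here to be unchanged for Opus 4.7. The call itself uses boto3's `invoke_model` and needs AWS credentials, so it is not run on import.

```python
import json
from typing import Any

MODEL_ID = "us.anthropic.claude-opus-4-7"  # model ID as quoted in this article
# Assumption: same version string Bedrock documents for earlier Claude models.
ANTHROPIC_VERSION = "bedrock-2023-05-31"


def build_invoke_body(prompt: str, max_tokens: int = 1024) -> str:
    """Return the JSON body for InvokeModel: version, messages, max_tokens."""
    payload = {
        "anthropic_version": ANTHROPIC_VERSION,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return json.dumps(payload)


def invoke(prompt: str, region: str = "us-east-1") -> dict[str, Any]:
    """Call InvokeModel and return the decoded JSON response.

    Requires boto3 and AWS credentials with access to the model.
    """
    import boto3  # imported lazily so the body builder works without it

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.invoke_model(modelId=MODEL_ID, body=build_invoke_body(prompt))
    # The response body is a stream; read it fully before decoding.
    return json.loads(response["body"].read())
```

The same JSON string could be passed as the body of an equivalent AWS CLI invocation.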

Claude Opus 4.7 also supports "Adaptive Thinking", which lets Claude dynamically allocate its thinking token budget based on the complexity of each request. For simpler queries, it uses fewer tokens, reducing cost and latency. For more intricate problems that require deeper analysis, it automatically expands its token budget to produce a more thorough response. This makes the model more efficient on easy requests without sacrificing quality on hard ones, and so more versatile across a wider range of enterprise tasks.
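The article does not show how adaptive thinking is requested, so the following is only a sketch of how a thinking configuration might be attached to a Messages-style request body. The helper `with_thinking` is hypothetical, and the default value `{"type": "adaptive"}` is an assumption that should be checked against Anthropic's prompting guide and the Bedrock documentation before use.

```python
from typing import Any, Optional

# ASSUMPTION: the shape of the "thinking" field is not given in this article;
# verify it against the official documentation before relying on it.
ASSUMED_ADAPTIVE_THINKING: dict[str, Any] = {"type": "adaptive"}


def with_thinking(
    body: dict[str, Any],
    thinking: Optional[dict[str, Any]] = None,
) -> dict[str, Any]:
    """Return a copy of a request body with a thinking config attached.

    The input dictionary is left unmodified.
    """
    merged = dict(body)
    chosen = thinking if thinking is not None else ASSUMED_ADAPTIVE_THINKING
    merged["thinking"] = dict(chosen)
    return merged
```

Passing the configuration in as a parameter keeps the assumed value in one place, so it can be corrected without touching the rest of the request-building code.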

Global Availability and Economic Implications

Anthropic’s Claude Opus 4.7 model is immediately available in several key AWS Regions, including US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). This multi-region availability is crucial for global enterprises requiring low-latency access to AI services for their geographically dispersed operations and customer bases. AWS maintains a comprehensive list of Regions for future updates, indicating a planned expansion of availability.

The economic implications of such a release are significant. By making a state-of-the-art model like Claude Opus 4.7 accessible through a managed service like Bedrock, AWS lowers the barrier to entry for businesses looking to leverage advanced generative AI. Enterprises can avoid the substantial upfront costs and operational overhead associated with building and maintaining their own large-scale AI infrastructure. The "pay-as-you-go" pricing model characteristic of AWS services further reduces financial risk, allowing businesses to scale their AI consumption in alignment with their actual usage and evolving needs. This economic flexibility is particularly attractive to startups and medium-sized businesses, democratizing access to powerful AI tools previously only available to tech giants.

Moreover, the emphasis on data privacy through "zero operator access" addresses one of the most significant impediments to enterprise AI adoption. Industries such as finance, healthcare, legal, and government, which operate under stringent data governance and compliance regulations, can now explore and deploy generative AI solutions with greater confidence, knowing that their sensitive data remains protected. This assurance can unlock new use cases and accelerate digital transformation efforts across these critical sectors.

Broader Impact and Future Outlook

The launch of Claude Opus 4.7 on Amazon Bedrock has profound implications for the future of enterprise AI. It empowers businesses to build more sophisticated, reliable, and intelligent AI applications that can automate complex workflows, enhance decision-making, and unlock new forms of innovation. For developers, it means having a powerful, secure, and easily accessible tool to accelerate their projects and push the boundaries of what AI can achieve.

The advancements in "agentic coding" and "long-running agents" facilitated by Opus 4.7 signal a move towards more autonomous and proactive AI systems. Instead of merely responding to prompts, these agents can understand complex goals, break them down into sub-tasks, execute multi-step processes, and even self-correct, operating with minimal human intervention. This capability is transformative for areas like software development, scientific research, and complex operational management.

Looking ahead, the collaboration between AWS and Anthropic is likely to continue driving rapid innovation. Future iterations of Claude models can be expected to bring further improvements in reasoning, context window size, multimodal capabilities, and efficiency. Amazon Bedrock, in turn, is expected to keep evolving its underlying infrastructure, improving inference performance, security features, and the breadth of foundation models available. The platform’s commitment to offering choice lets enterprises select the best model for each use case, which reduces vendor lock-in and fosters competition among AI model developers.

Enterprises are encouraged to explore Claude Opus 4.7’s capabilities through the Amazon Bedrock console and provide feedback via AWS re:Post for Amazon Bedrock or through their usual AWS Support contacts. The continuous dialogue between AWS, Anthropic, and the developer community will be instrumental in shaping the next generation of enterprise AI solutions, ensuring they meet the evolving demands of a rapidly digitizing world. This release is not just about a new model; it represents a significant stride in making advanced, secure, and ethical AI a practical reality for businesses globally.