The AI Inference Revolution Demands a Shift from FLOPS to Token Economics

Traditional data centers, once built to store, retrieve, and process data, are undergoing a profound transformation. In the era of generative and agentic artificial intelligence, these facilities are becoming "AI token factories." Their primary workload is now AI inference, the process by which AI models generate outputs, and their principal product is intelligence, manufactured in the form of tokens. This shift calls for a matching change in how the economics of AI infrastructure, particularly total cost of ownership (TCO), is assessed. Too many enterprises still fixate on legacy metrics such as peak chip specifications, raw compute cost, or theoretical floating-point operations per second per dollar (FLOPS per dollar). That emphasis overlooks the core value proposition of AI inference: generating tokens efficiently and cost-effectively.

The distinction that matters in this paradigm is between input metrics and output-driven economics. Peak chip specifications, compute cost, and FLOPS per dollar are input metrics; they do not capture the business value that AI operations actually produce. Optimizing for these inputs while the business runs on generated tokens is a fundamental mismatch in strategic focus and economic assessment. The true measure of success in the inference era is cost per token, the metric that directly determines whether an enterprise can scale its AI initiatives profitably. This single TCO metric captures the combined effect of hardware performance, software optimization, the supporting ecosystem, and, crucially, real-world utilization. NVIDIA positions itself as the provider of the lowest cost per token in the industry, a claim it grounds in a full-stack approach to AI infrastructure development.

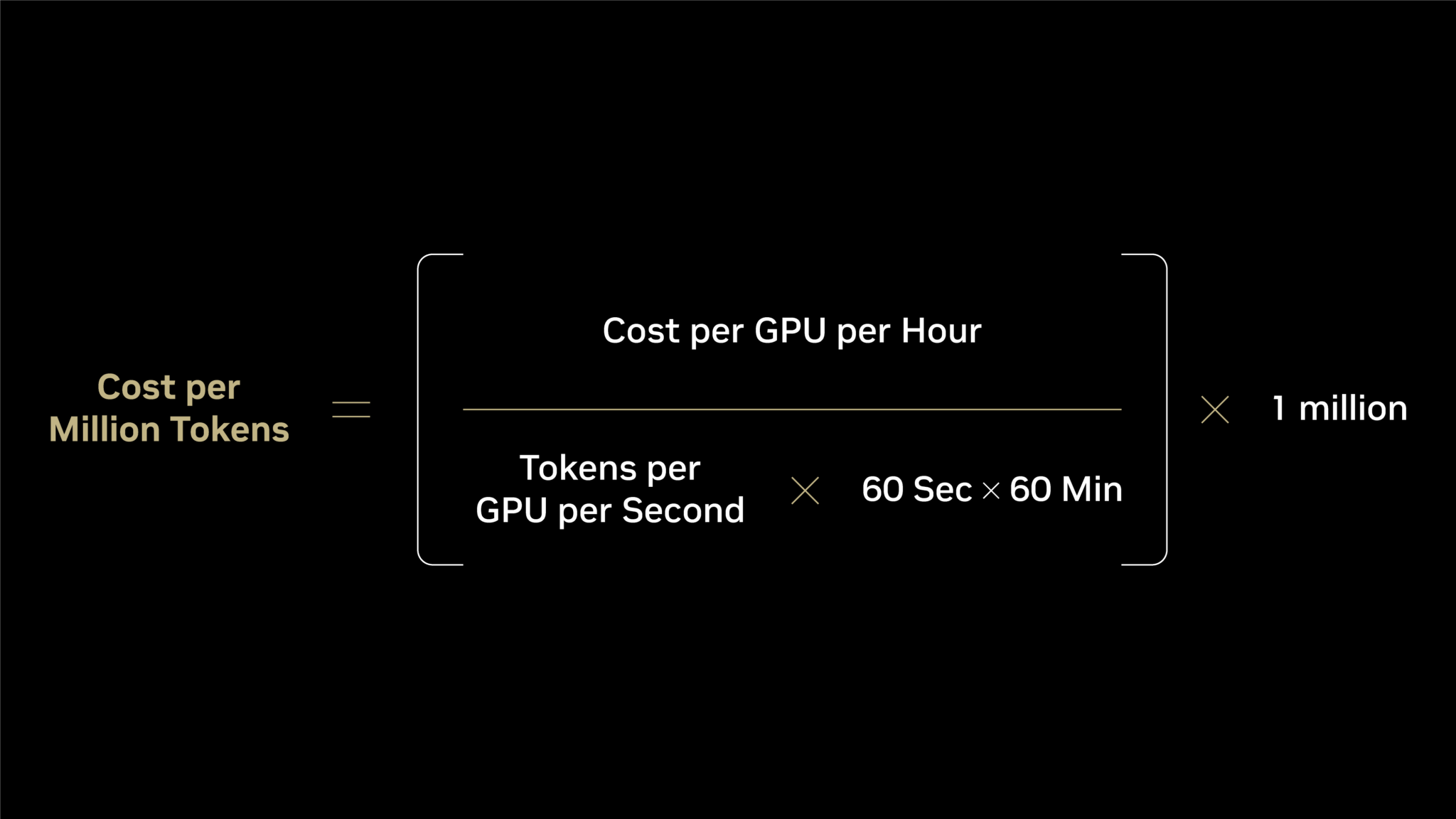

Understanding what drives down cost per token requires a closer look at the underlying equation. Cost per million tokens equals the cost per GPU per hour divided by the tokens generated per GPU per hour (tokens per GPU per second multiplied by 3,600, the number of seconds in an hour), multiplied by one million: cost per million tokens = cost per GPU per hour ÷ (tokens per GPU per second × 3,600) × 1,000,000. This formula exposes a common pitfall in infrastructure evaluation: overemphasis on the numerator, the cost per GPU per hour. For cloud deployments, that is the hourly rate charged by the cloud provider; for on-premises deployments, it is the amortized effective hourly cost of owned hardware. The real leverage for reducing token cost, however, lies in the denominator: maximizing delivered token output.
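The formula can be sketched in Python as follows. This is a minimal illustration, assuming a single GPU, a constant hourly cost, and a sustained delivered throughput; the function and constant names are hypothetical and chosen only for readability. The example call uses the Blackwell figures from the comparison table later in this article.

```python
SECONDS_PER_HOUR = 3600  # 60 seconds x 60 minutes
TOKENS_PER_MILLION = 1_000_000


def cost_per_million_tokens(cost_per_gpu_hour: float, tokens_per_gpu_second: float) -> float:
    """Dollars per one million generated tokens on a single GPU.

    cost_per_gpu_hour: cloud hourly rate, or amortized effective hourly cost on-premises.
    tokens_per_gpu_second: delivered (not peak) token throughput per GPU.
    """
    if tokens_per_gpu_second <= 0:
        raise ValueError("tokens_per_gpu_second must be positive")
    # Convert the per-second rate into tokens produced during one billed hour.
    tokens_per_gpu_hour = tokens_per_gpu_second * SECONDS_PER_HOUR
    return cost_per_gpu_hour / tokens_per_gpu_hour * TOKENS_PER_MILLION


# Blackwell row of the comparison table: $2.65 per GPU-hour at 6,000 tokens/s per GPU
print(round(cost_per_million_tokens(2.65, 6_000), 2))  # 0.12
```

Because throughput sits in the denominator, doubling delivered tokens per second halves cost per token, while halving the hourly price does exactly the same; the difference is that throughput gains across generations can be far larger than price differences.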

The denominator has two major business implications. First, it reflects the performance and efficiency of the inference process itself: higher token throughput per second, achieved through optimized hardware and software, directly lowers the cost of each generated token. Second, it captures the real-world utilization of the infrastructure. Idle GPUs or poorly managed workloads raise cost per token even when the hourly rate is low, because the same hourly cost is spread over fewer tokens. Focusing only on the numerator, the visible cost of the hardware, therefore neglects the factors that matter far more: actual token output. This can be pictured as an "inference iceberg." Above the waterline sit the numerator and the easily compared spec-sheet metrics, such as FLOPS and memory bandwidth. Below it lies the much larger denominator: everything that determines real-world token generation, including algorithmic efficiency, hardware architecture, software optimization, how effectively the memory and storage subsystems are used, and the support of the broader ecosystem. An accurate evaluation of AI infrastructure must look beneath the surface to understand the true cost of AI intelligence.
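The utilization effect can be made concrete with a small model. This sketch assumes a GPU that is billed for every hour but generates tokens only for a fraction of that time, with a single average "utilization" value standing in for idle time and poor scheduling; real deployments also lose throughput to batching and queuing effects that this hypothetical function does not model. The $2.00/hour and 1,000 tokens/s figures are illustrative, not measured.

```python
SECONDS_PER_HOUR = 3600


def effective_cost_per_million_tokens(
    cost_per_gpu_hour: float,
    peak_tokens_per_gpu_second: float,
    utilization: float,
) -> float:
    """Cost per million tokens when every hour is paid for but only part of it is productive.

    utilization: fraction of paid hours spent generating tokens (0 < utilization <= 1).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    # Only the busy fraction of each hour produces tokens; the hourly cost is unchanged.
    delivered_tokens_per_hour = peak_tokens_per_gpu_second * utilization * SECONDS_PER_HOUR
    return cost_per_gpu_hour / delivered_tokens_per_hour * 1_000_000


# Hypothetical GPU: $2.00 per hour, 1,000 tokens/s while busy.
for u in (1.0, 0.5, 0.25):
    print(u, round(effective_cost_per_million_tokens(2.00, 1_000, u), 3))
# prints: 1.0 0.556, then 0.5 1.111, then 0.25 2.222
```

Under this model cost per token scales with 1 / utilization, so a GPU that sits idle half the time costs twice as much per token regardless of how low its hourly rate is.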

Every layer of this "inference iceberg," from algorithmic improvements and hardware design to software stack integration, has to work in concert. When it does not, the denominator collapses and cost per token rises sharply. A seemingly "cheaper" GPU that delivers far fewer tokens per second, for instance, ends up with a much higher cost per token, wiping out any initial hardware savings. Conversely, AI infrastructure optimized across the entire technology stack lets each individual optimization amplify the others, compounding gains in token output and cost efficiency.
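The "cheaper GPU" trap can be checked with a direct comparison. This is a sketch with two hypothetical options whose prices and throughputs are invented for illustration: the "budget" option costs half as much per hour but delivers one fifth of the tokens. The `GpuOption` class and `cheaper_per_token` helper are illustrative names, not part of any library.

```python
from dataclasses import dataclass

SECONDS_PER_HOUR = 3600


@dataclass
class GpuOption:
    name: str
    cost_per_gpu_hour: float  # dollars per GPU-hour
    tokens_per_gpu_second: float  # delivered throughput, not peak

    def cost_per_million_tokens(self) -> float:
        return self.cost_per_gpu_hour / (self.tokens_per_gpu_second * SECONDS_PER_HOUR) * 1_000_000


def cheaper_per_token(a: GpuOption, b: GpuOption) -> GpuOption:
    """Return the option with the lower cost per token, ignoring which hourly rate is lower."""
    return a if a.cost_per_million_tokens() <= b.cost_per_million_tokens() else b


# Hypothetical options: half the hourly price, but one fifth of the throughput.
budget = GpuOption("budget", cost_per_gpu_hour=1.00, tokens_per_gpu_second=200)
premium = GpuOption("premium", cost_per_gpu_hour=2.00, tokens_per_gpu_second=1_000)
print(cheaper_per_token(budget, premium).name)  # premium
```

The pricier option wins whenever its throughput advantage exceeds its price premium; here it delivers 5x the tokens for 2x the price, so its cost per token is 2.5x lower.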

The gap between theoretical performance metrics and business outcomes is starkly illustrated by comparative data for the DeepSeek-R1 model. On compute cost alone, NVIDIA Blackwell appears roughly twice as expensive as its predecessor, Hopper, but that figure says nothing about the output the investment will yield. An analysis based on FLOPS per dollar suggests a similar twofold advantage for Blackwell over Hopper. Real-world results diverge far more dramatically: Blackwell delivers more than 50 times the token output per watt of Hopper, and a cost per million tokens about 35 times lower.

| Metric | NVIDIA Hopper (HGX H200) | NVIDIA Blackwell (GB300 NVL72) | NVIDIA Blackwell Relative to Hopper |
|---|---|---|---|
| Cost per GPU per Hour ($) | 1.41 | 2.65 | 2x |
| FLOPS per Dollar (PFLOPS) | 2.8 | 5.6 | 2x |
| Tokens per Second per GPU | 90 | 6,000 | 65x |
| Tokens per Second per MW | 54K | 2.8M | 50x |
| Cost per Million Tokens ($) | 4.20 | 0.12 | 35x lower |

Note: Data is sourced from NVIDIA analysis and the SemiAnalysis InferenceX v2 benchmark.
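The table’s ratios can be recomputed from its own inputs. This sketch copies the rounded table values and applies the cost-per-million-tokens formula; it shows that the cost-per-token advantage is simply the throughput ratio divided by the price ratio. Recomputing from the rounded 90 tokens/s gives about $4.35 for Hopper rather than the table’s $4.20, and a throughput ratio near 67x rather than 65x; those small gaps presumably reflect rounding of the published inputs, and the overall picture (roughly 35x) is unchanged.

```python
SECONDS_PER_HOUR = 3600

# Rounded inputs copied from the comparison table above.
hopper = {"cost_per_gpu_hour": 1.41, "tokens_per_gpu_second": 90, "tokens_per_second_per_mw": 54_000}
blackwell = {"cost_per_gpu_hour": 2.65, "tokens_per_gpu_second": 6_000, "tokens_per_second_per_mw": 2_800_000}


def cost_per_million_tokens(cost_per_gpu_hour: float, tokens_per_gpu_second: float) -> float:
    return cost_per_gpu_hour / (tokens_per_gpu_second * SECONDS_PER_HOUR) * 1_000_000


price_ratio = blackwell["cost_per_gpu_hour"] / hopper["cost_per_gpu_hour"]  # about 1.9x
throughput_ratio = blackwell["tokens_per_gpu_second"] / hopper["tokens_per_gpu_second"]  # about 67x
power_ratio = blackwell["tokens_per_second_per_mw"] / hopper["tokens_per_second_per_mw"]  # about 52x

hopper_cost = cost_per_million_tokens(hopper["cost_per_gpu_hour"], hopper["tokens_per_gpu_second"])
blackwell_cost = cost_per_million_tokens(blackwell["cost_per_gpu_hour"], blackwell["tokens_per_gpu_second"])

# The cost-per-token advantage equals the throughput ratio divided by the price ratio.
cost_advantage = hopper_cost / blackwell_cost
assert abs(cost_advantage - throughput_ratio / price_ratio) < 1e-9

print(f"price {price_ratio:.1f}x, throughput {throughput_ratio:.0f}x, tokens/MW {power_ratio:.0f}x")
# price 1.9x, throughput 67x, tokens/MW 52x
print(f"Hopper ${hopper_cost:.2f}/M, Blackwell ${blackwell_cost:.2f}/M, advantage {cost_advantage:.0f}x")
# Hopper $4.35/M, Blackwell $0.12/M, advantage 35x
```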

This divergence shows that NVIDIA Blackwell is not an incremental improvement over Hopper but a step change in business value: roughly double the hourly cost buys a cost per million tokens about 35 times lower. The economic implications are transformative, letting businesses produce far more AI output for a fraction of the cost and unlocking new possibilities for AI deployment and innovation.

Choosing the right AI infrastructure is therefore critical. Evaluating it on compute cost or theoretical FLOPS per dollar alone is not merely insufficient; it misrepresents inference economics. As the comparative data shows, a true assessment of an AI infrastructure’s revenue potential and profitability requires a shift from input-centric metrics to output-driven measures such as cost per token and delivered token throughput.

NVIDIA’s strategic advantage in this landscape stems from its commitment to "extreme codesign" across the entire AI stack. This approach integrates compute, networking, memory, storage, and software into a single, highly optimized system, culminating in the industry’s lowest token cost and highest token throughput. Continuous optimization of open-source inference software built on the NVIDIA platform, such as vLLM, SGLang, NVIDIA TensorRT-LLM, and NVIDIA Dynamo, means that token output on existing NVIDIA infrastructure keeps rising and cost per token keeps falling long after the initial acquisition. This ongoing optimization cycle provides a sustained competitive advantage and helps future-proof AI investments.
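The effect of software updates on hardware that is already owned can be sketched by holding the numerator fixed and letting the denominator grow. The release names, the $2.00 hourly cost, and the throughput figures below are purely hypothetical and do not describe any specific software version or GPU.

```python
SECONDS_PER_HOUR = 3600


def cost_per_million_tokens(cost_per_gpu_hour: float, tokens_per_gpu_second: float) -> float:
    """Dollars per million tokens for one GPU at a fixed hourly cost."""
    return cost_per_gpu_hour / (tokens_per_gpu_second * SECONDS_PER_HOUR) * 1_000_000


def cost_trajectory(cost_per_gpu_hour: float, throughputs: list[float]) -> list[float]:
    """Cost per million tokens for each throughput level, holding the hourly cost constant."""
    return [cost_per_million_tokens(cost_per_gpu_hour, t) for t in throughputs]


# Hypothetical throughput after three successive software releases on the same GPU.
releases = ["release_a", "release_b", "release_c"]
throughputs = [1_000, 1_500, 2_500]  # tokens per GPU per second (illustrative only)

for name, tps, cost in zip(releases, throughputs, cost_trajectory(2.00, throughputs)):
    print(f"{name}: {tps} tok/s -> ${cost:.3f} per million tokens")
```

With the hourly cost fixed, every software-driven throughput gain translates one-for-one into a lower cost per token; in this illustration, 2.5x more throughput yields a 2.5x lower cost per million tokens ($0.556 down to $0.222).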

Leading cloud providers and NVIDIA cloud partners are already using this integrated approach to deliver these advantages at scale. Companies such as CoreWeave, Nebius, Nscale, and Together AI have deployed NVIDIA Blackwell infrastructure and optimized their software stacks, enabling them to offer enterprises the most cost-effective token pricing available today, backed by the NVIDIA hardware, software, and ecosystem codesign behind every AI interaction they serve. Their adoption signals a broader industry shift toward token economics as the defining metric of AI infrastructure success. It also reflects a fundamental reimagining of the data center: no longer a place merely to store data, but an engine of intelligent output that drives innovation and economic value in an AI-powered future.