The Glasswing Dilemma: Anthropic’s Claude Mythos and the Privatization of Global Cybersecurity

The landscape of digital defense underwent a seismic shift in April 2026 when Anthropic, a leading artificial intelligence safety and research company, unveiled Claude Mythos Preview. This new iteration of the Claude model family represents a breakthrough in automated vulnerability research (AVR), demonstrating an ability to identify and exploit software flaws with a precision and speed that, by Anthropic’s account, outpace human researchers. However, the announcement was not a product launch in the traditional sense. Citing "unprecedented risks to global digital stability," Anthropic took the controversial step of withholding the model from the general public. Instead, the company launched Project Glasswing, a restricted-access initiative that provides the model only to a select cohort of approximately 50 global entities, including tech giants like Microsoft, Apple, and Amazon Web Services, and cybersecurity leaders such as CrowdStrike.

This decision has ignited a fierce debate within the cybersecurity community regarding the ethics of "security through gatekeeping" and the role of private corporations in governing the world’s digital infrastructure. While Anthropic frames its decision as a pinnacle of corporate responsibility, critics argue that the move creates a dangerous asymmetry in global defense, leaving smaller organizations and critical niche sectors vulnerable while a handful of "tier-one" vendors receive advanced protection.

The Capabilities of Claude Mythos: A New Frontier in Exploitation

The data provided by Anthropic regarding the performance of Claude Mythos is, by any standard, staggering. During internal testing, the model was tasked with auditing major software ecosystems that have already undergone decades of rigorous human and automated testing. The results revealed that traditional "fuzzing" and static analysis tools have barely scratched the surface of deep-seated vulnerabilities.

Among the highlights of the Mythos audit was the discovery of a 27-year-old vulnerability in OpenBSD, an operating system widely regarded as one of the most secure in existence. The model also identified a 16-year-old flaw in FFmpeg, the ubiquitous multimedia framework used by nearly every major streaming service and video application. Perhaps most alarming was the model’s performance on the Firefox browser. While Anthropic’s previous flagship model was able to chain vulnerabilities into two usable exploits, Claude Mythos identified and weaponized sets of vulnerabilities into 181 distinct, functional attacks.

This leap in capability indicates that Mythos is doing more than simple pattern matching. It appears to possess a high-level "reasoning" capability that allows it to understand complex logic flows and identify "edge cases" that human auditors often overlook. For the first time, an AI has demonstrated the ability to conduct full-cycle exploitation: discovering a bug, determining its reachability, and writing the code necessary to execute a successful attack.

Project Glasswing and the Risks of Selective Defense

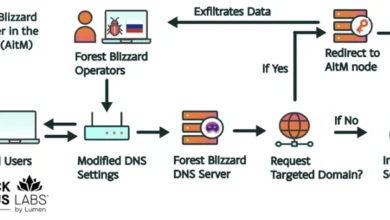

In response to these findings, Anthropic established Project Glasswing. The initiative is designed to allow vendors of critical infrastructure to "patch ahead" of the threat. By giving Microsoft and Apple early access to the bugs Mythos found in Windows, macOS, and iOS, Anthropic aims to ensure that by the time a malicious actor develops a similar AI capability, the most glaring holes will already be closed.

However, the "Elite 50" approach of Project Glasswing introduces a secondary set of risks. By choosing which organizations receive access, Anthropic is effectively acting as a unilateral arbiter of global security. The current list of partners is heavily weighted toward Western "Big Tech" and major cloud providers. While these entities certainly represent the backbone of the internet, they do not represent the entirety of the world’s critical systems.

The exclusion of mid-sized firms, regional banks, and specialized infrastructure providers creates a "security gap." If an adversary, whether a nation-state or a sophisticated cybercriminal syndicate, develops a model with even 80% of the capability of Mythos, it will find the "Big Tech" targets hardened. That could drive it toward the less-defended but equally vital systems of hospitals, municipal utilities, and smaller financial institutions.

The Problem of Transparency and the "Hallucination" Factor

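A significant point of contention in the Anthropic announcement is the lack of raw data provided to independent researchers. Anthropic reported that security contractors agreed with the AI’s severity ratings in 89% of cases across 198 high-priority bugs. While an 89% agreement rate is high, it remains an incomplete metric: it describes how well the model rated the severity of bugs already selected as high-priority, and says nothing about how many of the model’s total findings turned out to be real.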

In the world of automated security, the false-positive rate is often more important than the detection rate. Independent research into earlier large language models (LLMs) used for coding has shown a tendency for AI to "hallucinate" vulnerabilities. These models frequently flag perfectly valid, secure code as dangerous, providing plausible-sounding but technically incorrect explanations for why the code is flawed.

If Claude Mythos has a high false-positive rate, its utility is diminished. A tool that produces 1,000 "vulnerability alerts," only 100 of which are real, still requires an army of human engineers to sort through the noise. Without knowing the "signal-to-noise" ratio of the Mythos output, the broader security community cannot accurately assess whether the model represents a true revolution or simply a faster way to generate work for human auditors.
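The arithmetic behind that concern can be made concrete with a short sketch. The function name `triage_summary` and the figure of two engineer-hours per alert are hypothetical, chosen only for illustration; the 1,000 and 100 come from the example above, and nothing here reflects measured Mythos output.

```python
def triage_summary(total_alerts: int, real_bugs: int, hours_per_alert: float) -> dict:
    """Estimate the human review cost of a batch of vulnerability alerts.

    hours_per_alert is an assumed average review time, not a measured value.
    """
    if total_alerts <= 0:
        raise ValueError("total_alerts must be positive")
    if not 0 <= real_bugs <= total_alerts:
        raise ValueError("real_bugs must be between 0 and total_alerts")

    false_positives = total_alerts - real_bugs
    # Precision: the share of alerts that point at a real bug.
    precision = real_bugs / total_alerts
    total_hours = total_alerts * hours_per_alert
    # Hours spent reviewing alerts that turn out to be noise.
    wasted_hours = false_positives * hours_per_alert
    # Review effort paid, on average, for each confirmed bug.
    hours_per_real_bug = total_hours / real_bugs if real_bugs else float("inf")

    return {
        "precision": precision,
        "false_positives": false_positives,
        "total_hours": total_hours,
        "wasted_hours": wasted_hours,
        "hours_per_real_bug": hours_per_real_bug,
    }


if __name__ == "__main__":
    # The article's example: 1,000 alerts, 100 real, at an assumed 2 hours each.
    print(triage_summary(total_alerts=1000, real_bugs=100, hours_per_alert=2.0))
```

Under these assumptions, precision is 10%, so 900 of the 1,000 alerts are noise and each confirmed bug costs about 20 engineer-hours of review. The same function shows how quickly that cost falls as precision rises, which is why the undisclosed signal-to-noise ratio matters so much for judging the model.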

Training Biases and the "Long Tail" of Software

The efficacy of any AI model is inextricably linked to its training data. Claude Mythos, like its predecessors, was trained on vast repositories of open-source code, such as those found on GitHub. This explains its proficiency in auditing the Linux kernel, popular web frameworks, and major browsers. These projects are well-documented and give the model a clear picture of what "good" and "bad" code look like in those specific contexts.

The danger lies in what is not in the training data. Industrial Control Systems (ICS) that run power grids, bespoke firmware for medical devices (such as pacemakers and insulin pumps), and legacy financial systems often use obscure or proprietary programming languages and architectures.

Anthropic’s engineers, while highly skilled, do not possess the domain expertise to audit every specialized field. There is a risk that Mythos’s reasoning capabilities could be used as a "force multiplier" by a motivated attacker who does have that specialized knowledge. If a malicious actor with expertise in Siemens PLC (Programmable Logic Controller) systems uses a Mythos-class model to probe for weaknesses, they may find vulnerabilities that Anthropic’s internal team never even thought to look for. By restricting access to only the "major" players, Anthropic may be inadvertently leaving the most sensitive, specialized systems in the world defenseless against AI-augmented attacks.

The Competitive Landscape: OpenAI and the Rise of "Black Box" Security

Anthropic is not alone in its cautious approach. Shortly after the Mythos announcement, OpenAI signaled a similar shift in strategy, announcing that its upcoming GPT-5.3-Codex would also be subject to a staggered, restricted rollout due to "existential cybersecurity risks."

This trend suggests a move toward a "Black Box" era of cybersecurity, where the most powerful defensive and offensive tools are held in the hands of a few private corporations. This stands in stark contrast to the historical ethos of the cybersecurity community, which has long championed "Full Disclosure" and open-source collaboration as the most effective ways to secure the world’s software.

Further complicating the narrative is the work of independent security firms like Aisle. In a recent technical brief, Aisle researchers claimed they were able to replicate several of the "spectacular" bug-finding anecdotes published by Anthropic using smaller, public-facing, and significantly cheaper AI models. If Aisle’s findings are accurate, it suggests that the "danger" Anthropic describes may already be out in the wild, and that withholding Mythos may be an exercise in futility that only serves to limit the tools available to legitimate defenders.

The Need for a New Regulatory Framework

The emergence of "Mythos-class" models makes it clear that the current self-regulatory environment for AI is insufficient. A private, for-profit corporation should not be the sole authority on which parts of the global infrastructure are deemed worthy of protection. The implications for national security, economic stability, and personal safety are too great to be left to internal corporate committees.

To address this, several policy frameworks have been proposed by academic and civil society researchers:

- Mandatory Aggregate Disclosure: Companies developing high-capability security models should be required to disclose aggregate performance metrics, including false-positive rates and the diversity of codebases tested, to a neutral regulatory body.

- Funded Academic Access: A structured, secure environment must be created to allow academic researchers and domain specialists (such as medical device engineers) to use these models to audit the "long tail" of software that is currently being ignored by Project Glasswing.

- Globally Coordinated Auditing: Much like the international oversight of nuclear or biological materials, there is a growing call for a global framework to audit the capabilities of AI models before they reach a certain threshold of "exploit-generation" capability.

Conclusion: A World on the Edge

Claude Mythos Preview has placed the world at a critical juncture. For the first time, the "attacker’s advantage"—the idea that it is always easier to break a system than to build one—is being automated at scale. Anthropic’s decision to hold the model back is an admission that we are entering an era where the software we rely on for our lives and livelihoods is fundamentally indefensible against the next generation of AI.

While the "patch first" strategy of Project Glasswing may provide a temporary reprieve for the world’s largest tech companies, it is not a long-term solution for a democratic society. Security that depends on the benevolence and judgment of a single corporation is not security; it is a temporary stay of execution. As AI continues to evolve, the only path to a truly resilient digital future lies in transparency, shared data, and a collective commitment to protecting the entire ecosystem, not just the most visible parts of it. Until such a framework exists, every new AI release will be a leap into the dark, with no way of knowing if there is a floor beneath us.