Wellfound Launches AI Video Interview Feature Powered by Mux to Revolutionize Startup Recruitment and Humanize the Hiring Process

The global recruitment landscape is changing as startups and established firms alike seek more efficient ways to identify top-tier talent beyond traditional paper credentials. Wellfound, the startup job marketplace formerly known as AngelList Talent, has launched a new AI-driven video interview feature designed to bridge the gap between static resumes and a candidate’s actual potential. Using video infrastructure provided by Mux, Wellfound gives candidates a place to demonstrate their soft skills, personality, and professional narratives, so that qualified individuals are not overlooked for lacking recognizable brand names or elite educational backgrounds on their applications.

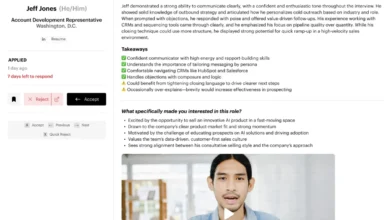

The core objective of this initiative is to address a long-standing inefficiency in the hiring process: the "resume filter" problem. Recruiters managing hundreds of applications often rely on cognitive shortcuts, prioritizing candidates from well-known companies or prestigious universities. This systemic bias frequently results in the exclusion of "industry changers"—individuals who possess the requisite skills and cultural fit but lack the traditional markers of success currently favored by automated screening algorithms. Wellfound’s AI video interview feature seeks to democratize access to opportunities by allowing candidates to record a single, comprehensive interview that can be shared across multiple job applications on the platform.

The Evolution of Video in the Recruitment Sector

The integration of video into the hiring process is not a novel concept, but previous iterations have often struggled with adoption and utility. Several years ago, Wellfound experimented with asynchronous video submissions, where candidates would record answers to specific prompts. However, the feature failed to gain traction because recruiters found it impractical to sit through 10- to 15-minute recordings for every applicant. The lack of searchability and the time-intensive nature of manual review rendered video an obstacle rather than an asset.

The maturation of Large Language Models (LLMs) and sophisticated video APIs has fundamentally changed the value proposition of video. Wellfound’s new feature uses Mux’s transcription and clipping capabilities to turn raw video into actionable insights. Unlike the earlier attempt, the new system does not require recruiters to watch full videos. Instead, it provides summarized takeaways, searchable transcripts, and frame-accurate clips that allow hiring managers to jump directly to the most relevant parts of a candidate’s response.

This shift represents a move toward "candidate-first" technology. Rather than forcing applicants to record bespoke videos for every single job, a process that contributes to "application fatigue," Wellfound allows for a generalized HR-style screening. Candidates discuss their career goals, recent projects, and professional philosophies. The AI interviewer is programmed to recognize when a response is incomplete or when there is an opportunity to delve deeper into a specific topic, and it prompts follow-up questions in real time to ensure a high-quality submission.

Technical Implementation and the Role of Mux

The rapid development and deployment of the AI video feature highlight a growing trend in the tech industry: the use of specialized APIs to lower the "barrier to entry" for complex features. Wellfound CEO Amit Matani reportedly prototyped the feature over a single weekend, a process he referred to as "vibe coding." By Monday, the core functionality was established, and the engineering team spent the subsequent week refining the code for a full-scale launch.

The technical backbone of this feature is the Mux API, which handles the heavy lifting of video processing. The workflow begins when a candidate completes their AI-led interview. The video is then processed through Mux to produce auto-generated transcripts. These transcripts are subsequently fed into an LLM, which produces concise summaries for recruiters and constructive feedback for the candidate.
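
The workflow described above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not Wellfound's actual code: the `transcribe` and `summarize` callables are hypothetical stand-ins for the Mux transcription step and the LLM call, and they are passed in as parameters so that no real API signature is assumed.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class InterviewResult:
    transcript: str
    summary: str   # short recap intended for recruiters
    feedback: str  # constructive notes intended for the candidate


def process_interview(
    video_id: str,
    transcribe: Callable[[str], str],
    summarize: Callable[[str, str], str],
) -> InterviewResult:
    """Run the transcript -> summary -> feedback steps for one interview.

    `transcribe` and `summarize` are hypothetical placeholders for the
    video platform's transcription and the LLM call, respectively.
    """
    transcript = transcribe(video_id)
    if not transcript.strip():
        # Nothing to summarize; fail early instead of prompting the LLM.
        raise ValueError(f"empty transcript for video {video_id!r}")
    summary = summarize(transcript, "Summarize this interview for a recruiter.")
    feedback = summarize(transcript, "Give the candidate constructive feedback.")
    return InterviewResult(transcript, summary, feedback)


# Stub implementations so the sketch runs without any external service.
def fake_transcribe(video_id: str) -> str:
    return f"Transcript of {video_id}: I led a team of four engineers."


def fake_summarize(transcript: str, instruction: str) -> str:
    return f"[{instruction}] {transcript[:40]}"


result = process_interview("demo-video", fake_transcribe, fake_summarize)
```

Injecting the two steps as functions keeps the sketch independent of any vendor SDK; in a real integration they would wrap whatever transcription and LLM services are in use.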

Key technical components include:

- Automated Transcription: This allows for the extraction of key insights and the generation of text-based summaries that recruiters can skim in seconds.

- Frame-Accurate Clipping: Using context from the transcript, Wellfound identifies specific sections of the interview. Recruiters can view a 30-second clip of a candidate discussing "leadership" or "technical problem-solving" without navigating the entire file.

- Auto-Captions: Recognizing that many recruiters review applications in environments where audio is not feasible, the system provides captions to ensure the content is accessible at all times.

- Cold Storage Optimization: To manage costs effectively, Wellfound uses Mux’s "Cold Storage" feature. Once a candidate has landed a role or become inactive, the video files are automatically moved to a lower-cost storage tier, significantly reducing the overhead of maintaining a massive library of high-definition video.
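
The clipping step in the list above can be illustrated with a short sketch. It assumes the transcript is available as timestamped segments (the `Segment` type here is hypothetical, though caption formats such as WebVTT carry similar start and end cues) and uses plain keyword matching where the production system presumably draws on richer context from the LLM.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Segment:
    start: float  # seconds from the beginning of the video
    end: float
    text: str


def find_clip(
    segments: List[Segment], keyword: str, max_len: float = 30.0
) -> Optional[Tuple[float, float]]:
    """Return (start, end) of a clip beginning at the first segment that
    mentions `keyword`, extended over following segments while the clip
    stays within `max_len` seconds. Returns None if nothing matches."""
    needle = keyword.lower()
    for i, seg in enumerate(segments):
        if needle in seg.text.lower():
            clip_start, clip_end = seg.start, seg.end
            for nxt in segments[i + 1:]:
                if nxt.end - clip_start > max_len:
                    break
                clip_end = nxt.end
            # Cap the clip in case the matching segment itself is too long.
            return clip_start, min(clip_end, clip_start + max_len)
    return None


segments = [
    Segment(0.0, 12.0, "I started my career in retail operations."),
    Segment(12.0, 27.5, "My first leadership role was managing a support team."),
    Segment(27.5, 40.0, "We cut response times in half within a quarter."),
    Segment(40.0, 58.0, "Later I moved into product management."),
]

clip = find_clip(segments, "leadership")  # (12.0, 40.0)
```

The resulting start and end times are what a clipping request to a video platform would need; how that request is made depends on the provider and is not shown here.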

Supporting Data and Market Context

The move toward AI-assisted interviewing comes at a time when the cost of a "bad hire" is increasingly prohibitive. According to data from the Society for Human Resource Management (SHRM), the average cost per hire is nearly $4,700, but the total cost of a misaligned hire can be three to four times the position’s annual salary. By providing a more holistic view of a candidate early in the funnel, Wellfound’s tool aims to reduce these risks.

Furthermore, the "ghosting" phenomenon in recruitment, where candidates never hear back from employers, has reportedly reached record highs. Wellfound’s feature addresses this by providing automated, tangible feedback to every candidate who completes a video interview. This feedback loop, which highlights strengths and areas for improvement, offers value to the candidate even if they do not progress to the next stage of the hiring process. Preliminary feedback from the platform suggests that candidates find this transparency refreshing in a market often characterized by automated rejection emails.

On the employer side, recruiters have reported that the video summaries have led them to "match" with candidates they would have otherwise rejected based solely on their resume. Conversely, it has also helped them identify candidates who look excellent on paper but lack the communication skills or cultural alignment required for a specific startup environment.

Chronology of Development and Launch

The timeline of the Wellfound AI video feature reflects the accelerated pace of modern software development:

- Phase 1 (The Experiment): Wellfound identifies that resume-based screening is causing recruiters on its platform to miss high-potential candidates.

- Phase 2 (The Technical Evaluation): The leadership team reviews existing video infrastructure and determines that Mux’s API offers the necessary features (transcription, clipping, and storage) at a sustainable price point.

- Phase 3 (The Weekend Build): CEO Amit Matani builds the initial prototype, testing the feasibility of AI-driven follow-up questions and video summaries.

- Phase 4 (Production and Refinement): Wellfound engineers clean the prototype code, integrate the LLM for feedback generation, and ensure the UI is "candidate-first."

- Phase 5 (Launch and Expansion): The feature is rolled out to the general platform. Following the success of the interview feature, the team begins expanding the use of video to "Company Pitch" videos and mission-based content on company profile pages.

Broader Implications for the Future of Work

The success of Wellfound’s integration suggests that the future of recruitment lies in the "humanization of data." As AI continues to automate the initial stages of the job hunt, the challenge for platforms is to ensure that the "human element" isn’t lost in the shuffle. By using AI to facilitate human connection—rather than replace it—Wellfound is positioning itself at the forefront of a more empathetic recruitment model.

The implications extend beyond just hiring. The use of video as a primary medium for professional storytelling is likely to become standard across the B2B sector. Wellfound is already exploring ways for founders to use the same Mux-powered pipeline to create pitch videos for their companies. These videos can be clipped and included in candidate outreach, allowing startups to sell their vision more effectively to passive talent.

Moreover, the efficiency gains from "one and done" interviews could disrupt the traditional HR screening industry. If a single high-quality, AI-vetted video can serve as the initial gatekeeper for dozens of applications, time-to-hire across the startup ecosystem could fall dramatically.

Official Responses and Analysis

Amit Matani, CEO of Wellfound, emphasized that the goal was never to replace human judgment but to empower it. "The hope was that we could help candidates tell their story better and companies could see this is a real person on the other side," Matani stated. He also noted that the ease of integration was a deciding factor in the project’s viability, suggesting that if the technical barriers had been higher, the feature might never have been realized.

Industry analysts suggest that this move is a direct response to the "commoditization" of resumes. With the rise of AI-generated cover letters and optimized resumes, it has become increasingly difficult for recruiters to discern genuine expertise from well-crafted prompts. Video provides a "proof of personhood" and a level of authenticity that text-based applications currently lack.

As Wellfound continues to iterate on this feature, the focus will likely shift toward further reducing bias within the AI interviewer itself and expanding the accessibility of the video tools to a broader range of international markets. For now, the launch serves as a potent example of how startups can use modern API infrastructure to solve age-old problems in the labor market, creating a more equitable and efficient path for talent to find its way to the right teams.