A Scalable, Multi-Tenant Configuration Service Architecture Addresses Microservices Complexity on AWS

Modern microservices architectures, while offering unparalleled agility and scalability, introduce significant operational challenges, with configuration management standing out as one of the most complex. Organizations scaling their operations frequently encounter two critical bottlenecks: the dynamic handling of tenant-specific metadata that often changes faster than traditional cache invalidation strategies permit, and the inherent difficulty in scaling the metadata service itself without creating a performance choke point. This struggle forces an uncomfortable trade-off between accepting stale tenant context, which risks incorrect data isolation or misapplied feature flags, and implementing aggressive cache invalidation, which can degrade performance and place undue load on backend services. As tenant counts surge into the hundreds or thousands, the configuration service becomes a critical scaling challenge, particularly when different configuration types exhibit vastly divergent access patterns and storage requirements.

The complexity further intensifies when the need arises to support heterogeneous storage backends for diverse configuration types. Some configurations, such as frequently accessed tenant-specific settings or feature flags, demand high-frequency access patterns best suited for services like Amazon DynamoDB, renowned for its single-digit millisecond performance at any scale. Conversely, other configurations, perhaps more static application-wide parameters or sensitive credentials, benefit from the hierarchical organization, built-in versioning, and secure storage capabilities of AWS Systems Manager Parameter Store. Traditional architectural approaches often corner engineering teams into unpalatable choices: either construct multiple bespoke configuration services, dramatically increasing operational overhead and maintenance complexity, or compromise on performance and efficiency by consolidating all configurations into a single storage backend not optimized for every use case.

This article describes a solution to these challenges: a scalable, multi-tenant configuration service built on the tagged storage pattern. The pattern uses key prefixes, such as `tenant_config_` or `param_config_`, to route each configuration request to the AWS storage service best suited to it. It maintains strict tenant isolation. Combined with an event-driven architecture, it delivers configuration updates in near real time without downtime, which shrinks the window in which cached values can be stale. By the end of this article, readers should understand how to architect a configuration service that handles complex multi-tenant requirements while balancing performance and operational simplicity.

The Evolving Landscape of Microservices Configuration

The proliferation of microservices architectures has fundamentally reshaped application development and deployment. Moving from monolithic structures to a distributed system of loosely coupled services allows for independent development, deployment, and scaling, fostering greater agility and resilience. However, this paradigm shift introduces a new layer of complexity, particularly in managing configurations across numerous services, environments, and, critically, for multiple tenants within a Software-as-a-Service (SaaS) model.

Historically, configuration management in monolithic applications was relatively straightforward, often involving local files or centralized databases. With microservices, configurations become distributed, dynamic, and frequently tenant-specific. Each service might require its own set of parameters, feature flags, database connection strings, or third-party API keys. In a multi-tenant environment, these configurations must also be isolated per tenant, ensuring that one tenant’s settings do not inadvertently affect another’s. Reports from industry analysts like Gartner indicate that over 70% of new applications are being developed using microservices, underscoring the urgency of robust configuration solutions. The challenge is not merely storing configurations, but delivering them securely, efficiently, and with minimal latency to hundreds or thousands of constantly evolving microservices instances for potentially millions of end-users.

Traditional approaches, such as periodic polling for configuration changes, introduce significant latency: services operate with outdated settings for periods ranging from seconds to minutes. This can lead to inconsistent user experiences, incorrect data processing, or delayed feature rollouts. The alternative, forcing service restarts to pick up new configurations, conflicts with the demands of modern 24/7 SaaS applications because it causes disruptive downtime, dropped connections, and frustrated users. A widely cited industry estimate puts the average cost of unplanned downtime at $5,600 per minute, which underscores the need for solutions that keep services available during updates. These limitations call for an event-driven mechanism that delivers configuration updates in near real time without affecting service availability.

Solution Overview: An Event-Driven, Multi-Backend Architecture

The presented architecture combines four key AWS services, orchestrated through a NestJS-based gRPC service, into a resilient and efficient event-driven configuration management system. The system is designed from the ground up to address the challenges above and serves as a blueprint for modern cloud-native applications.

Architecture Components and Flow

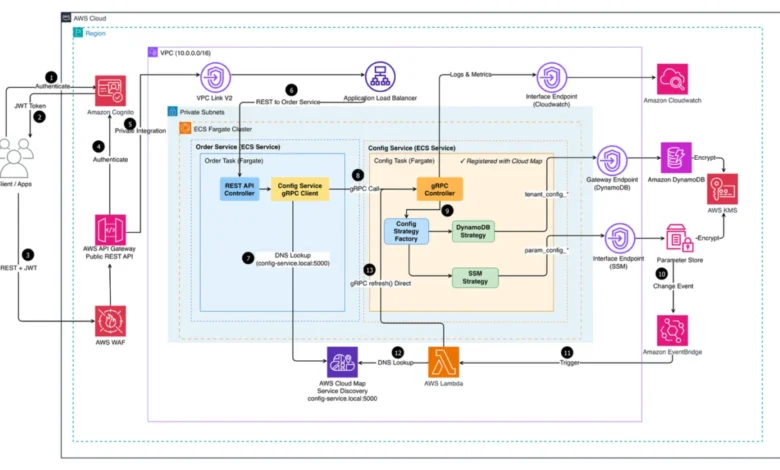

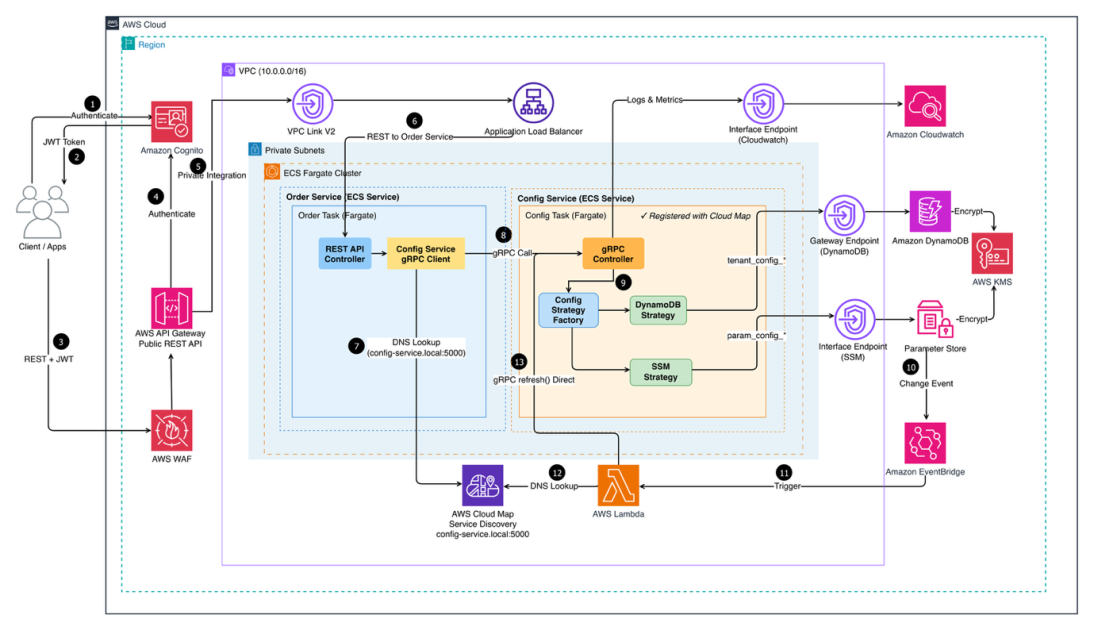

The architecture of the Multi-Tenant Configuration Service on AWS handles each client request from entry point to data retrieval. Client applications authenticate through Amazon Cognito, a managed identity service that provides user directories and authentication flows. AWS WAF (Web Application Firewall) then screens requests to protect against common web exploits and bots, and the requests are routed through Amazon API Gateway. API Gateway acts as the front door and forwards traffic over a VPC Link to an Application Load Balancer. The load balancer distributes incoming requests across two core microservices, the "Configuration Service" and the "Admin Service." Both run on Amazon Elastic Container Service (Amazon ECS) with AWS Fargate in private subnets. This serverless container approach removes the need to manage servers, so development teams can focus on application logic.

Service discovery within this distributed environment is seamlessly managed by AWS Cloud Map, ensuring that microservices can locate and communicate with each other dynamically. Operational visibility, crucial for maintaining system health and performance, is centralized through Amazon CloudWatch, which collects and monitors logs and metrics across all deployed services.

The system is organized into four interconnected layers, each addressing a specific facet of the configuration management challenge:

Storage Layer – Multi-Backend Strategy: This foundational layer employs a strategic combination of AWS services, each optimized for distinct configuration access patterns and requirements.

- Amazon DynamoDB: Used for high-frequency access patterns. DynamoDB's NoSQL capabilities are ideal for storing tenant-specific configurations that demand low-latency reads and writes. Its consistent performance at virtually any scale makes it well suited to dynamic settings and feature flags. DynamoDB can serve millions of requests per second and stores data across multiple Availability Zones for high availability.

- AWS Systems Manager Parameter Store: This service holds more static, application-wide, or sensitive configurations. It offers hierarchical storage, built-in versioning, and integration with other AWS services for secure parameter retrieval, which makes it suitable for environment variables, database credentials, or third-party API keys. The standard tier supports up to 10,000 parameters per account and Region, and the advanced tier raises this limit to 100,000. `SecureString` parameters are encrypted at rest with AWS KMS, and API traffic is encrypted in transit with TLS.

Service Layer – gRPC with Strategy Pattern: At the heart of the system is a NestJS-based microservice that implements the configuration retrieval logic. gRPC was chosen deliberately for inter-service communication. Compared with traditional REST APIs, it offers high-performance, type-safe, and bandwidth-efficient communication, which matters in a distributed microservices environment where web-browser compatibility is not a primary concern. The core of this layer is a Strategy Pattern implementation that selects the storage backend from the configuration key's prefix. For instance, a key prefixed `tenant_config_` might be routed to DynamoDB, while a key prefixed `param_config_` might go to Parameter Store. New storage backends, such as Amazon Simple Storage Service (Amazon S3) for very large configuration files, can then be added as new strategies without modifying the core service logic, which improves modularity and maintainability.
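A minimal sketch of prefix-based routing with the Strategy pattern follows. It is written in TypeScript to match the NestJS service, but it uses no NestJS or AWS SDK APIs. The `ConfigStore` interface, the class names, the `|` composite lookup key, and the `tenant_config_`/`param_config_` prefixes are illustrative assumptions. In-memory maps stand in for the real DynamoDB and Parameter Store clients so that the example runs on its own.

```typescript
// Prefix-based ("tagged") routing with the Strategy pattern.
// Assumption: interface/class names and prefixes are illustrative; in-memory
// maps stand in for DynamoDB and Parameter Store.

interface ConfigStore {
  readonly name: string;
  get(tenantId: string, key: string): string | undefined;
}

// Stand-in for the DynamoDB strategy: values are scoped by tenant.
class InMemoryTenantStore implements ConfigStore {
  readonly name = "dynamodb";
  private readonly items: Map<string, string>;
  constructor(items: Map<string, string>) {
    this.items = items;
  }
  get(tenantId: string, key: string): string | undefined {
    return this.items.get(`${tenantId}|${key}`);
  }
}

// Stand-in for the Parameter Store strategy: values are application-wide.
class InMemoryParameterStore implements ConfigStore {
  readonly name = "parameter-store";
  private readonly params: Map<string, string>;
  constructor(params: Map<string, string>) {
    this.params = params;
  }
  get(_tenantId: string, key: string): string | undefined {
    return this.params.get(key);
  }
}

class ConfigRouter {
  // Kept sorted longest-prefix-first so more specific prefixes win.
  private readonly routes: Array<[string, ConfigStore]> = [];

  register(prefix: string, store: ConfigStore): this {
    this.routes.push([prefix, store]);
    this.routes.sort((a, b) => b[0].length - a[0].length);
    return this;
  }

  resolve(key: string): ConfigStore {
    const match = this.routes.find(([prefix]) => key.startsWith(prefix));
    if (match === undefined) {
      throw new Error(`No storage strategy registered for key "${key}"`);
    }
    return match[1];
  }

  get(tenantId: string, key: string): string | undefined {
    return this.resolve(key).get(tenantId, key);
  }
}

// Usage: supporting another backend (e.g. S3) means registering one more strategy.
const router = new ConfigRouter()
  .register("tenant_config_", new InMemoryTenantStore(
    new Map([["tenantA_id|tenant_config_dark_mode", "true"]])))
  .register("param_config_", new InMemoryParameterStore(
    new Map([["param_config_max_upload_mb", "50"]])));

const darkMode = router.get("tenantA_id", "tenant_config_dark_mode"); // "true"
const backend = router.resolve("param_config_max_upload_mb").name;  // "parameter-store"
```

Because the router only compares prefixes, the calling code never names a backend. Unknown prefixes fail loudly instead of silently falling through to a default store.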

Authentication Layer – Amazon Cognito: The system's security depends on its authentication layer. Amazon Cognito manages all user authentication flows and issues JSON Web Tokens (JWTs) on successful authentication. The service enforces one critical design principle: it never accepts `tenantId` from request parameters. Instead, it extracts the tenant context solely from validated JWTs. As a result, a request cannot reach another tenant's data even if a malicious actor manipulates the request payload. This upholds strict tenant isolation and prevents a class of data breaches. Cognito also supports custom attributes, so a tenant identifier can be stored directly in the user's profile and delivered as a token claim.
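The following sketch shows how the tenant-from-token rule could look in TypeScript. It assumes that the token's signature, expiry, and audience have already been verified upstream, for example with AWS's open-source `aws-jwt-verify` library. This code does not verify tokens. The claim name `custom:tenantId` follows Cognito's `custom:` prefix for custom attributes, but the attribute name is an assumption, and by default Cognito places custom attributes in ID tokens rather than access tokens. All type and function names are hypothetical.

```typescript
// Tenant context comes only from verified JWT claims, never from the request.
// Assumption: signature/expiry/audience were verified upstream; the claim
// name "custom:tenantId" and all names here are illustrative.

type VerifiedClaims = Readonly<Record<string, unknown>>;

interface TenantContext {
  tenantId: string;
  userId: string;
}

interface ConfigRequest {
  key: string;
  tenantId?: string; // May be sent by a client, but is deliberately ignored.
}

const TENANT_CLAIM = "custom:tenantId";

function tenantContextFromClaims(claims: VerifiedClaims): TenantContext {
  const tenantId = claims[TENANT_CLAIM];
  const userId = claims["sub"];
  if (typeof tenantId !== "string" || tenantId.length === 0) {
    throw new Error("Token carries no tenant claim; rejecting request");
  }
  if (typeof userId !== "string" || userId.length === 0) {
    throw new Error("Token carries no subject claim; rejecting request");
  }
  return { tenantId, userId };
}

// Builds the lookup for the storage layer. The tenant always comes from the
// token, so a forged request.tenantId has no effect.
function scopedLookup(
  claims: VerifiedClaims,
  request: ConfigRequest,
): { tenantId: string; key: string } {
  const { tenantId } = tenantContextFromClaims(claims);
  return { tenantId, key: request.key };
}

// Usage: the payload claims tenantB_id, but the token says tenantA_id.
const lookup = scopedLookup(
  { sub: "user-123", "custom:tenantId": "tenantA_id" },
  { key: "tenant_config_dark_mode", tenantId: "tenantB_id" },
);
// lookup.tenantId === "tenantA_id"
```

Rejecting tokens that have no tenant claim, instead of falling back to a default tenant, keeps a misconfigured user pool from quietly collapsing every user into one tenant.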

Event-Driven Refresh Layer: This layer resolves the dilemma of traditional configuration updates. Polling approaches repeatedly check for changes, which is inherently inefficient: they generate unnecessary API calls and incur costs even when nothing has changed. More critically, they introduce delays, because services only see an update after the next poll cycle, potentially seconds or minutes later. Service restarts guarantee fresh configuration, but they cause downtime, drop active connections, and disrupt user sessions, which is unacceptable for 24/7 SaaS applications. The event-driven refresh layer avoids both problems with a reactive design. Whenever a parameter is created, updated, or deleted, Parameter Store publishes a change event to Amazon EventBridge, a serverless event bus. An EventBridge rule matches these events and invokes an AWS Lambda function, which in turn updates the configuration service's local cache. Configuration updates therefore propagate within seconds, and services pick up the latest settings without interrupting users. This shortens the mean time to recovery (MTTR) for configuration issues and speeds up the rollout of new features and bug fixes.
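The refresh path can be sketched as an EventBridge rule pattern plus an invalidation step. The pattern follows the documented shape of Parameter Store change events (source `aws.ssm`, detail-type `Parameter Store Change`). The `/app/` prefix, the `ConfigCache` class, and `handleParameterChange` are assumptions. The article does not say how the Lambda function reaches each running task's cache (one option would be to call a refresh endpoint on each task), so this sketch shows only the invalidation logic, wherever that cache lives.

```typescript
// Event-driven refresh: evict a cached parameter when Parameter Store
// reports a change. Assumption: "/app/" prefix, ConfigCache, and the
// handler name are illustrative.

const WATCHED_PREFIX = "/app/";

// Event pattern for the EventBridge rule whose target is the Lambda function.
const parameterChangePattern = {
  source: ["aws.ssm"],
  "detail-type": ["Parameter Store Change"],
  detail: {
    name: [{ prefix: WATCHED_PREFIX }],
    operation: ["Create", "Update", "Delete"],
  },
};

interface ParameterChangeEvent {
  source: string;
  "detail-type": string;
  detail: { name: string; operation: string };
}

// Hypothetical in-process cache held by each configuration service task.
class ConfigCache {
  private readonly entries = new Map<string, string>();
  set(name: string, value: string): void {
    this.entries.set(name, value);
  }
  get(name: string): string | undefined {
    return this.entries.get(name);
  }
  invalidate(name: string): boolean {
    return this.entries.delete(name);
  }
}

// Evicting (instead of writing the new value) keeps the handler idempotent;
// with a cache-aside read path, the next read fetches the current version.
// Returns the evicted name, or undefined if the event is ignored.
function handleParameterChange(
  event: ParameterChangeEvent,
  cache: ConfigCache,
): string | undefined {
  const isParameterChange =
    event.source === "aws.ssm" && event["detail-type"] === "Parameter Store Change";
  if (!isParameterChange || !event.detail.name.startsWith(WATCHED_PREFIX)) {
    return undefined; // Defensive re-check; the rule should already filter these.
  }
  cache.invalidate(event.detail.name);
  return event.detail.name;
}
```

Eviction rather than push is a deliberate choice in this sketch. Duplicate or out-of-order events cannot leave an older value in the cache, because the cache only ever drops entries.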

Technical Implementation: Multi-Tenant Data Model

The bedrock of tenant isolation and efficient querying within this architecture is the meticulously designed data model, particularly for configurations stored in DynamoDB. DynamoDB’s flexible NoSQL nature and its composite key structure (Partition Key and Sort Key) are leveraged to achieve stringent tenant isolation and optimize query performance without the operational burden of creating separate tables for each tenant.

DynamoDB Schema Design for Multi-Tenancy:

For tenant-specific configurations, the DynamoDB schema is structured as follows:

- Partition Key (`PK`): `tenantId`. The partition key determines how data is distributed across DynamoDB partitions. Using `tenantId` as the partition key places all of a tenant's configurations in a single item collection, so all settings for that tenant can be retrieved efficiently with one query. This choice is also fundamental to tenant isolation: every Query must specify exactly one partition key value, so a query for one `tenantId` cannot return items belonging to another.


- Sort Key (`SK`): `config_key`. The sort key organizes configuration items hierarchically within a given `tenantId`. It adds flexibility to queries, enabling efficient retrieval of individual configuration items or of a range of items for a particular tenant. Examples include `feature_flags#dark_mode` and `billing_settings#plan_type`.

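The following sketch shows the item shape and a pair of sort-key helpers for this schema. The attribute names `PK`, `SK`, and `Value` mirror the schema and the examples below. The helper names, and the rule that segments may not themselves contain `#`, are assumptions added so that keys stay unambiguous.

```typescript
// Item shape and sort-key helpers for the tenant-config table.
// Assumption: helper names and the "#" separator rule are illustrative.

interface TenantConfigItem {
  PK: string; // tenantId
  SK: string; // config_key, e.g. "feature_flags#dark_mode"
  Value: string;
}

const SK_SEPARATOR = "#";

function makeSortKey(category: string, name: string): string {
  if (category.length === 0 || name.length === 0) {
    throw new Error("Sort-key segments must be non-empty");
  }
  if (category.includes(SK_SEPARATOR) || name.includes(SK_SEPARATOR)) {
    throw new Error(`Sort-key segments must not contain "${SK_SEPARATOR}"`);
  }
  return `${category}${SK_SEPARATOR}${name}`;
}

function parseSortKey(sk: string): { category: string; name: string } {
  const i = sk.indexOf(SK_SEPARATOR);
  if (i <= 0 || i === sk.length - 1) {
    throw new Error(`Malformed sort key "${sk}"`);
  }
  return { category: sk.slice(0, i), name: sk.slice(i + 1) };
}

function makeItem(
  tenantId: string,
  category: string,
  name: string,
  value: string,
): TenantConfigItem {
  return { PK: tenantId, SK: makeSortKey(category, name), Value: value };
}

// Usage: the first row of Tenant A's example data.
const darkModeItem = makeItem("tenantA_id", "feature_flags", "dark_mode", "true");
// darkModeItem.SK === "feature_flags#dark_mode"
```

Putting the category first in the sort key is what makes `begins_with(SK, "feature_flags#")` select one whole group of settings.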

Practical Example of Tenant Isolation and Efficient Access:

Consider a SaaS application managing various settings for "Tenant A" and "Tenant B."

Tenant A’s configurations:

| PK | SK | Value |
|---|---|---|
| tenantA_id | feature_flags#dark_mode | true |
| tenantA_id | notification_settings#email_frequency | daily |
| tenantA_id | regional_settings#timezone | EST |

Tenant B’s configurations:

| PK | SK | Value |
|---|---|---|
| tenantB_id | feature_flags#dark_mode | false |
| tenantB_id | notification_settings#email_frequency | weekly |
| tenantB_id | regional_settings#timezone | PST |

When a microservice needs the configurations for `tenantA_id`, it performs a DynamoDB Query operation with `PK = tenantA_id`. This query retrieves all items for Tenant A without scanning or processing any data belonging to Tenant B. The sort key `config_key` refines these requests further. A single configuration can be fetched by its full key (for example, `PK = tenantA_id` and `SK = feature_flags#dark_mode`). A batch of related configurations, such as all `feature_flags#*` items for `tenantA_id`, can be fetched with a `begins_with` condition on the sort key. Compared with scanning the table and filtering, this design significantly reduces the read capacity units (RCUs) consumed, which lowers cost and improves latency, especially for frequently accessed configurations.
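The two access patterns above can be expressed as request objects shaped like the inputs to DynamoDB's low-level Query and GetItem APIs. For example, these objects could be passed to `QueryCommand` and `GetItemCommand` from `@aws-sdk/client-dynamodb`, although the sketch imports nothing so that it stays self-contained. The table name `TenantConfig` and the function names are placeholders. In the service, `tenantId` would come from the verified token, never from the request.

```typescript
// Request shapes for the per-tenant Query and the single-item GetItem.
// Assumption: table and function names are placeholders; values use the
// low-level AttributeValue format ({ S: "..." } for strings).

type StringAttributeValue = { S: string };

interface QueryInput {
  TableName: string;
  KeyConditionExpression: string;
  ExpressionAttributeNames: Record<string, string>;
  ExpressionAttributeValues: Record<string, StringAttributeValue>;
}

interface GetItemInput {
  TableName: string;
  Key: Record<string, StringAttributeValue>;
}

// All items for one tenant, optionally narrowed to a sort-key prefix such as
// "feature_flags#". Placeholders are added only when used, because DynamoDB
// rejects expression names/values that the expression does not reference.
function buildTenantQuery(tableName: string, tenantId: string, skPrefix?: string): QueryInput {
  const names: Record<string, string> = { "#pk": "PK" };
  const values: Record<string, StringAttributeValue> = { ":pk": { S: tenantId } };
  let condition = "#pk = :pk";
  if (skPrefix !== undefined) {
    names["#sk"] = "SK";
    values[":skPrefix"] = { S: skPrefix };
    condition += " AND begins_with(#sk, :skPrefix)";
  }
  return {
    TableName: tableName,
    KeyConditionExpression: condition,
    ExpressionAttributeNames: names,
    ExpressionAttributeValues: values,
  };
}

// Exactly one item, addressed by its full primary key.
function buildGetConfig(tableName: string, tenantId: string, configKey: string): GetItemInput {
  return { TableName: tableName, Key: { PK: { S: tenantId }, SK: { S: configKey } } };
}

// Usage: all of Tenant A's feature flags.
const flagsQuery = buildTenantQuery("TenantConfig", "tenantA_id", "feature_flags#");
// flagsQuery.KeyConditionExpression === "#pk = :pk AND begins_with(#sk, :skPrefix)"
```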

For application-wide parameters stored in AWS Systems Manager Parameter Store, a hierarchical naming convention provides similar benefits, for example `/app/prod/database/connection_string` or `/app/dev/feature_flags/new_ui`. Each environment gets its own subtree that can be read as a unit. Together with Parameter Store's built-in parameter versioning, this structure supports environment-specific parameter overrides, which are critical for CI/CD pipelines and secure deployment practices.
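Environment overrides over this hierarchy can be sketched as a lookup with a fallback. The `/app` root follows the examples above. The `default` fallback environment, the allowed-character check, and the helper names are assumptions. The `loaded` map stands in for the name/value pairs a service might load at startup, for example through Parameter Store's `GetParametersByPath` API with `Recursive` enabled.

```typescript
// Hierarchical parameter names with an environment-specific override.
// Assumption: the "default" fallback environment and helper names are
// illustrative, not part of Parameter Store itself.

const ROOT = "/app";
const FALLBACK_ENV = "default";
const SEGMENT = /^[A-Za-z0-9_.-]+$/;

// Builds e.g. "/app/prod/database/connection_string".
function parameterName(env: string, ...segments: string[]): string {
  for (const s of [env, ...segments]) {
    if (!SEGMENT.test(s)) throw new Error(`Invalid path segment "${s}"`);
  }
  return [ROOT, env, ...segments].join("/");
}

// Environment-specific value first, then the shared default.
function resolveParameter(
  params: ReadonlyMap<string, string>,
  env: string,
  ...segments: string[]
): string | undefined {
  return params.get(parameterName(env, ...segments))
    ?? params.get(parameterName(FALLBACK_ENV, ...segments));
}

// Usage: dev overrides the flag; prod falls back to the default.
const loaded = new Map<string, string>([
  ["/app/default/feature_flags/new_ui", "false"],
  ["/app/dev/feature_flags/new_ui", "true"],
]);
const devFlag = resolveParameter(loaded, "dev", "feature_flags", "new_ui");   // "true"
const prodFlag = resolveParameter(loaded, "prod", "feature_flags", "new_ui"); // "false"
```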

Broader Implications and Future Outlook

The adoption of this scalable, multi-tenant configuration service architecture carries significant implications for organizations operating in the cloud-native landscape.

Operational Simplicity and Cost Efficiency: Moving configuration management logic into a dedicated, event-driven service built on managed AWS services lets development teams focus on core business logic rather than infrastructure boilerplate. AWS Fargate eliminates the need to manage EC2 instances. EventBridge, Lambda, and DynamoDB (in on-demand capacity mode) are billed by usage, so organizations pay only for what they consume. This model can substantially reduce operational overhead and cloud spending. Eliminating manual configuration updates and service restarts also reduces the risk of human error, a leading cause of outages.

Enhanced Security Posture: The rigorous enforcement of tenant isolation through JWT token validation, coupled with AWS WAF and Cognito’s robust authentication capabilities, significantly bolsters the application’s security posture. By preventing client-side manipulation of tenantId, the architecture effectively mitigates a common class of security vulnerabilities, ensuring that sensitive tenant data remains segregated and protected. The use of Parameter Store for sensitive credentials further centralizes and secures critical application parameters.

Accelerated Development and Feature Velocity: Deploying configuration changes in near real time without service interruptions lets development teams iterate faster, run A/B tests with greater agility, and roll out new features or bug fixes with minimal risk. This agility is a competitive advantage in fast-paced markets because it lets businesses respond quickly to market demands and customer feedback. Every service receives changes through the same event-driven path, so changes propagate consistently, which reduces configuration drift and promotes a unified operational state.

Resilience and Scalability: Leveraging AWS’s highly available and scalable managed services (DynamoDB, Fargate, Lambda, EventBridge) inherently imbues the configuration service with enterprise-grade resilience and the capacity to scale seamlessly under varying loads. These services are designed for high availability, with data replication across multiple availability zones and automatic failover mechanisms, ensuring that the configuration service itself does not become a single point of failure.

Adaptability and Future-Proofing: The Strategy Pattern implemented within the service layer ensures that the architecture is inherently adaptable. As new configuration types emerge or as organizational needs evolve to incorporate different storage backends (e.g., dedicated feature flag services, key-value stores for specific caching needs), these can be integrated with minimal disruption to the core service logic. This modularity future-proofs the investment in the architecture, allowing it to evolve with changing technological landscapes and business requirements.

In conclusion, architecting a scalable, multi-tenant configuration service on AWS around the tagged storage pattern and an event-driven refresh layer is a pragmatic way to overcome persistent challenges in microservices deployment. The framework delivers scalability, strict tenant isolation, near-real-time configuration updates, and operational efficiency, and it provides a solid foundation for organizations building complex, high-performance SaaS applications in the cloud. Its focus on automation, security, and developer agility makes it a strong pattern for modern cloud-native development, so configuration management becomes an enabler of innovation rather than an operational bottleneck.