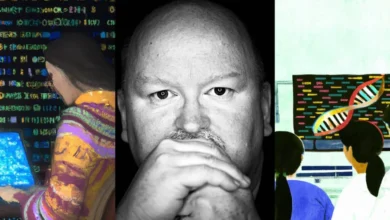

The Transformative Power of AI: Microsoft CTO Kevin Scott on the Dawn of a New Era in Innovation and Productivity

The rapid evolution of artificial intelligence, particularly driven by advancements in large language models (LLMs), is fundamentally reshaping how individuals across numerous professions engage with their work and creative processes. From streamlining code generation for software developers to assisting graphic designers in conceptualizing visual assets, AI systems are becoming indispensable tools. Kevin Scott, Chief Technology Officer at Microsoft, anticipates a continued surge in the sophistication and scalability of these AI systems, projecting their impact across critical global challenges such as climate change and childhood education, while also revolutionizing sectors from healthcare and law to materials science and even speculative fiction. Scott recently shared his insights on the profound impact of AI on knowledge workers and the trajectory of future AI developments, highlighting several key takeaways that underscore the monumental shifts underway.

The Unprecedented Innovations of 2022

Reflecting on the past year, Scott said that the advances in artificial intelligence in 2022 surpassed even the most optimistic expectations. "When we were heading into 2022, I think just about everybody in AI was anticipating really impressive things to take place over the next twelve or so months," Scott remarked. "But now that we’re pretty much through the year, and even with those lofty expectations, it’s kind of genuinely mind-blowing to look back at the magnitude of innovation that we saw left-to-right in AI. The things that researchers and other folks have done to advance the state-of-the-art are just light years beyond what we thought possible even a few years ago." He attributed this progress overwhelmingly to the rapid advancement of large AI models.

Scott pinpointed three specific breakthroughs from 2022 that he found most impressive:

- GitHub Copilot: This LLM-powered system transforms natural language prompts into functional code, significantly boosting developer productivity. Its ability to democratize coding by making it accessible to a broader audience is seen as crucial for a future increasingly reliant on software development. The implications are vast, potentially accelerating innovation cycles and lowering the barrier to entry for aspiring coders.

- Generative Image Models (e.g., DALL-E 2): The widespread accessibility and popularity of models like DALL-E 2 have provided individuals with a novel "superpower": a visual vocabulary previously accessible only to skilled artists. While not transforming laypeople into professional artists, these tools empower them to translate ideas into compelling visual concepts, bridging the gap between imagination and execution for a wider range of creative endeavors. This has already found applications in marketing, design prototyping, and content creation, democratizing visual storytelling.

- Advancements in Scientific AI (e.g., Protein Folding): Scott highlighted the substantial gains made in applying AI to complex scientific problems, citing the work on protein folding as a prime example. Collaborations, such as Microsoft’s involvement with David Baker’s laboratory at the University of Washington’s Institute for Protein Design using tools like RoseTTAFold, demonstrate AI’s potential to accelerate scientific discovery. These breakthroughs are described as "force multipliers" for science and medicine, offering the promise of addressing some of humanity’s most intractable challenges. The ability of AI to model and predict complex biological structures has profound implications for drug discovery, disease treatment, and understanding fundamental biological processes.

The Horizon: AI’s Impact in 2023 and Beyond

Looking ahead, Scott expressed strong confidence that 2023 will be an even more exhilarating year for the AI community, building on the momentum of 2022. He foresees the principles behind GitHub Copilot extending beyond coding to assist in a multitude of intellectual tasks. "The entire knowledge economy is going to see a transformation in how AI helps out with repetitive aspects of your work and makes it generally more pleasant and fulfilling," Scott predicted. This expansive vision includes applications in designing new pharmaceuticals, optimizing manufacturing processes, and enhancing writing and editing workflows.

Scott shared a personal anecdote illustrating this potential: his experiments with an LLM-based tool for writing science fiction. A productive day of unaided writing might yield around 2,000 words, he noted, while the AI-assisted approach let him produce up to 6,000 words a day, roughly three times as many. He described the experience as qualitatively more energizing and said it removed creative logjams that had previously hindered his progress. This concept of a "copilot for everything," an AI assistant that augments cognitive work, promises not only increased output but also a significant enhancement of creativity.

The Joy of Enhanced Productivity and Flow

The discussion also turned to why these AI tools contribute to greater job satisfaction. Scott posited that the enjoyment of mastering and effectively deploying new tools is a significant factor. For many, AI represents a fundamentally more effective set of tools than was previously available. He referenced a Microsoft study indicating that the adoption of no-code or low-code tools had a more than 80% positive impact on work satisfaction, workload, and morale.

For individuals, these tools can enhance their "flow state"—the psychological state of being fully immersed in an activity. By accelerating tasks, reducing the need for context switching to solve minor subproblems, and eliminating drudgery, AI allows users to remain focused and creative for longer periods. Scott likened this to having better running shoes for a race; it’s about enabling individuals to perform at their peak without being held back by inefficient processes or repetitive tasks. This is precisely what developers have reported experiencing with GitHub Copilot, noting that it helps them stay in the zone and maintain sharper focus during what were once mundane coding tasks.

AI Integration Across Microsoft Products

Beyond specialized tools like GitHub Copilot and DALL-E 2, AI is subtly yet profoundly improving the user experience of widely used Microsoft products and services. Scott referred to this as the "big untold story of AI," where machine learning algorithms are integrated into numerous aspects of our digital interactions, often without explicit user awareness.

Examples abound within Microsoft Teams, where machine learning optimizes audio jitter buffers for smoother communication and applies background blur effects. Scott noted that more than a dozen machine learning systems contribute to a more delightful Teams experience. This pervasive integration extends across the Microsoft ecosystem, from the predictive text in Word and the search algorithms in Bing to personalized feeds in Xbox Cloud Gaming and LinkedIn.

A significant shift in AI development at Microsoft has been the move from creating highly specialized models for each individual task to developing broadly applicable, single models that can be leveraged across numerous products. This scaling approach allows for simultaneous improvements across a wide array of applications as the foundational models become more powerful. This strategy not only optimizes resource allocation but also ensures that users benefit from continuous advancements in AI capabilities across their daily digital tools.

AI for Good and the Future of Science

Microsoft’s commitment to leveraging AI for societal benefit is underscored by initiatives like AI4Science and AI for Good. Scott expressed particular excitement about AI’s potential to tackle some of the world’s most complex challenges. These include developing cures for intractable diseases, preparing for future pandemics, ensuring affordable healthcare for aging populations, scaling quality education, and creating technologies to reverse the effects of carbon emissions.

The underlying principle is that the scaling properties of AI models used in basic science applications mirror those of large language models. By training models on simulations or real-world data, researchers can dramatically improve the performance of applications in fields like computational fluid dynamics and molecular dynamics for drug design. This accelerates the pace of discovery, potentially leading to breakthrough medicines, novel catalysts for environmental solutions, and a faster path to solving critical global issues.

The Indispensable Role of Computing Power and Hardware

The recent surge in AI capabilities is inextricably linked to advancements in computing techniques and hardware. Scott emphasized that "the fundamental thing underlying almost all of the recent progress we’ve seen in AI is how critical the importance of scale has proven to be." The ability to train models on vast datasets with immense compute power has unlocked richer and more generalized capabilities. To sustain this progress, optimizing and scaling compute power remains paramount.

Microsoft has made significant investments in this area, announcing its first Azure AI supercomputer two years ago and revealing at its Build developer conference that it now operates multiple supercomputing systems that are among the largest and most powerful in the world. These systems, utilized by Microsoft and OpenAI, train state-of-the-art large models such as Microsoft’s Turing, Z-code, and Florence, as well as OpenAI’s GPT, DALL-E, and Codex models. The recent collaboration with NVIDIA to build a supercomputer leveraging Azure infrastructure and NVIDIA GPUs further signifies this commitment.

While brute-force compute scale with increasingly large clusters of GPUs has been a driving factor, Scott highlighted a potentially more significant breakthrough: the development of software layers that optimize how models and data are distributed across these massive systems. This optimization is crucial for both training models and serving them to customers. For these powerful AI models to serve as platforms for innovation, they must be usable by organizations beyond a select few tech giants.
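To make the idea of distributing data across many devices more concrete, here is a minimal sketch of data parallelism, one common distribution strategy. It is a toy illustration only, not how Microsoft's software or any particular library works. The model (`y = weight * x`) and every function name below are hypothetical, and the "workers" are simulated in a single process rather than running on separate GPUs.

```python
from typing import List, Tuple

Example = Tuple[float, float]  # (input x, target y); a hypothetical toy data format


def split_batch(batch: List[Example], num_workers: int) -> List[List[Example]]:
    """Split a batch into num_workers contiguous, nearly equal shards."""
    size, extra = divmod(len(batch), num_workers)
    shards, start = [], 0
    for worker in range(num_workers):
        # The first `extra` workers each take one additional example.
        end = start + size + (1 if worker < extra else 0)
        shards.append(batch[start:end])
        start = end
    return shards


def local_gradient(weight: float, examples: List[Example]) -> float:
    """Summed squared-error gradient for the toy model y = weight * x."""
    return sum(2.0 * (weight * x - y) * x for x, y in examples)


def data_parallel_step(
    weight: float, batch: List[Example], num_workers: int, lr: float
) -> float:
    """One gradient-descent step with the batch spread over simulated workers."""
    shards = split_batch(batch, num_workers)
    # In a real system each shard would be processed on its own device.
    partial_grads = [local_gradient(weight, shard) for shard in shards]
    # Combining the partial results stands in for an "all-reduce" across devices.
    mean_grad = sum(partial_grads) / len(batch)
    return weight - lr * mean_grad


if __name__ == "__main__":
    batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
    print(data_parallel_step(0.0, batch, num_workers=1, lr=0.01))
    print(data_parallel_step(0.0, batch, num_workers=3, lr=0.01))
```

Both calls should produce the same updated weight, which is the point of the technique: splitting the data changes where the work happens, not the result. Production systems add a great deal on top of this, such as partitioning the model itself and overlapping communication with computation.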

Microsoft’s substantial investment in software like DeepSpeed for training efficiency and ONNX Runtime for inference aims to optimize cost and latency, making larger AI models more accessible and valuable. The company’s commitment to open-sourcing these technologies further fosters industry-wide improvement and collaboration.

Navigating the AI and Jobs Landscape

Addressing the persistent concern about AI’s impact on employment, Scott acknowledged the complex macroeconomic landscape and the need for new forms of productivity to sustain progress. He views AI tools as platforms that democratize access, enabling a wider range of individuals to build businesses and solve problems. This democratization is expected to lead to a richer set of solutions and a more diverse group of participants in technological creation.

He drew parallels to historical technological paradigm shifts like the telephone, automobile, and internet, noting that each brought about fundamental changes in the nature of work, creating new jobs and requiring new skill sets. Scott anticipates similar shifts with AI, where some existing jobs may be transformed, while entirely new roles will emerge. This necessitates a forward-thinking approach to education, skill development, and ensuring a workforce equipped for the critical jobs of the future.

Ensuring Responsible AI Development and Deployment

Microsoft is acutely aware of the potential for misuse and abuse of AI technologies and has implemented robust safeguards. Scott detailed the company’s comprehensive "responsible AI" process, which involves multidisciplinary teams of experts scrutinizing potential harms and implementing mitigation strategies. These include refining training datasets, deploying filters to prevent harmful content generation, integrating query blocking for sensitive topics, and developing technologies that promote more helpful and diverse responses. A post-launch plan is also in place to detect and address unforeseen harms promptly.

Intentional and iterative deployment is another critical safeguard. For broadly capable models hosted in the cloud and accessed via APIs, developers must adhere to terms of service, and access is revoked for violations. For other products, Microsoft may begin with limited previews for specific use cases, allowing its responsible AI safeguards to be tested in real-world conditions before broader adoption.

Microsoft is committed to sharing its learnings and expertise with the broader industry through its Responsible AI Standard and Principles, aiming to foster a culture of safety and responsibility across the AI landscape. This proactive approach underscores the company’s belief that developing and deploying AI ethically is not just a technical challenge but a fundamental imperative for societal well-being. The ongoing dialogue around AI’s capabilities and implications, as articulated by leaders like Kevin Scott, signals a critical juncture where technology’s potential to solve humanity’s greatest challenges is being actively pursued alongside rigorous efforts to ensure its responsible and beneficial application.