AWS Launches Anthropic’s Claude Opus 4.7 on Amazon Bedrock, Elevating Enterprise AI Capabilities

Amazon Web Services (AWS) today announced the general availability of Anthropic’s most advanced large language model (LLM), Claude Opus 4.7, on Amazon Bedrock. This strategic integration marks a significant advancement in empowering enterprises with cutting-edge artificial intelligence, promising enhanced performance across critical domains such as complex coding, sophisticated long-running agentic tasks, and demanding professional workflows. The introduction of Claude Opus 4.7 on Bedrock is poised to redefine how businesses leverage generative AI, offering a robust, secure, and highly scalable platform for developing and deploying AI-powered applications.

Unlocking New Frontiers with Claude Opus 4.7

Claude Opus 4.7 represents the pinnacle of Anthropic’s model development, designed specifically to tackle the most challenging AI workloads. According to Anthropic, this latest iteration delivers substantial improvements over its predecessors, including Opus 4.6, by demonstrating superior capabilities in handling ambiguity, executing more thorough problem-solving, and adhering to instructions with greater precision. These enhancements are particularly critical for enterprises dealing with vast amounts of unstructured data, intricate business logic, and the need for highly reliable AI agents. Key areas where Opus 4.7 is expected to shine include:

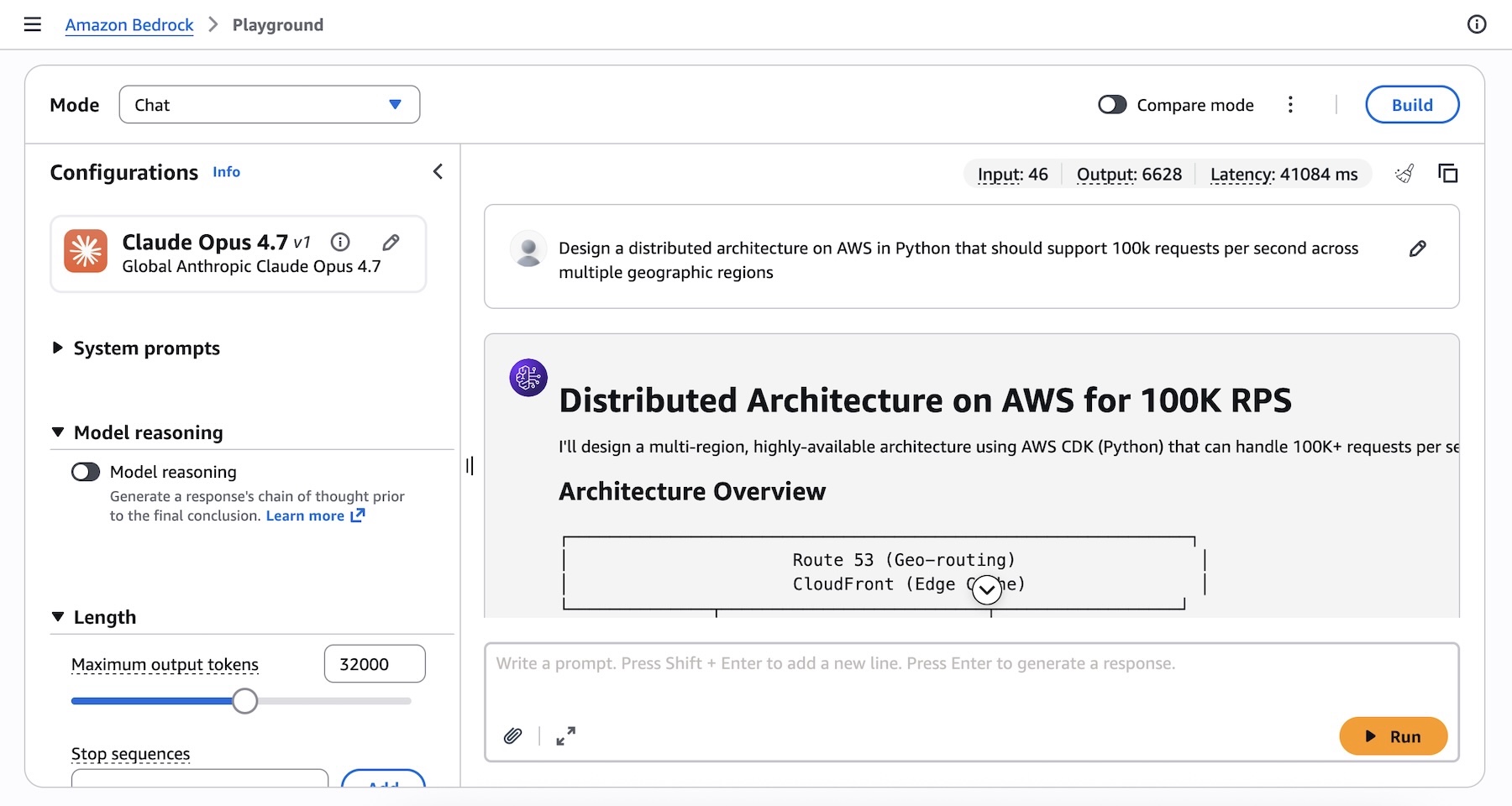

- Agentic Coding: Developers can now leverage Claude Opus 4.7 for more sophisticated code generation, debugging, and refactoring tasks. The model’s enhanced reasoning abilities allow it to understand complex architectural requirements and produce high-quality, efficient code snippets, potentially accelerating software development cycles and reducing the manual effort involved in intricate programming challenges. For instance, a prompt like "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions" can now yield a more comprehensive and robust solution, detailing services like Amazon EC2, Lambda, API Gateway, DynamoDB, and Aurora, complete with Python code examples for interaction and deployment considerations.

- Knowledge Work Automation: In knowledge-intensive fields, Opus 4.7 can power advanced applications for research, document analysis, legal review, and content creation. Its ability to process and synthesize information from extensive documents, coupled with improved understanding of nuanced queries, makes it an invaluable tool for automating tasks that traditionally require significant human cognitive effort. This includes summarizing lengthy reports, extracting specific data points from diverse sources, and generating detailed analyses based on complex datasets.

- Visual Understanding: While the announcement focuses on text-based capabilities, Anthropic’s Claude models often incorporate multimodal understanding. Opus 4.7’s advancements likely contribute to improved interpretation of visual information when integrated into multimodal workflows, allowing for more insightful analysis of images and diagrams in conjunction with textual prompts.

- Long-Running Tasks: One of the persistent challenges in AI has been the ability of models to maintain coherence and accuracy over extended interactions or multi-step processes. Claude Opus 4.7’s improvements in this area mean it can better manage context, follow complex instruction sets over time, and execute multi-part projects, making it ideal for applications like customer service bots that handle multi-turn conversations or project management assistants that track progress across several stages.
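Because the Messages API is stateless, multi-turn applications typically keep the conversation history on the client and resend it with every request. The sketch below is a minimal, hypothetical helper (the `Conversation` class and its `max_turns` trimming policy are illustrative assumptions, not part of any SDK) that keeps messages in the alternating user/assistant shape the Messages API expects and drops the oldest exchanges once a turn limit is reached.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical client-side history for a multi-turn chat.

    Messages use the Messages API shape: {"role": "user" | "assistant", "content": str}.
    """

    max_turns: int = 10  # illustrative cap on retained user/assistant exchanges
    messages: list[dict[str, str]] = field(default_factory=list)

    def _append(self, role: str, text: str) -> None:
        # The conversation must open with a user message.
        if not self.messages and role != "user":
            raise ValueError("conversation must start with a user message")
        # Roles must alternate, so reject two consecutive messages from one role.
        if self.messages and self.messages[-1]["role"] == role:
            raise ValueError(f"two consecutive '{role}' messages")
        self.messages.append({"role": role, "content": text})

    def add_user(self, text: str) -> None:
        self._append("user", text)

    def add_assistant(self, text: str) -> None:
        self._append("assistant", text)
        self._trim()

    def _trim(self) -> None:
        # Drop the oldest user/assistant pair until within max_turns.
        # Removing whole pairs keeps the history starting with a user message.
        while len(self.messages) > 2 * self.max_turns:
            del self.messages[:2]


if __name__ == "__main__":
    conv = Conversation(max_turns=2)
    for i in range(3):
        conv.add_user(f"question {i}")
        conv.add_assistant(f"answer {i}")
    print([m["content"] for m in conv.messages])
```

Passing `conv.messages` as the `messages` argument of a Messages API call sends the retained history with each turn. Trimming by whole exchanges is a deliberately simple policy; a production system might summarize older turns instead of discarding them.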

The model’s improved ability to navigate ambiguity and its meticulous problem-solving approach are vital for enterprise applications where precision and reliability are paramount. Although Opus 4.7 is an upgrade from Opus 4.6, users are advised to consult Anthropic’s prompting guide to optimize their interactions, which suggests that subtle adjustments in prompt engineering can unlock even greater performance.

The Bedrock Advantage: Enterprise-Grade Infrastructure and Unwavering Privacy

The deployment of Claude Opus 4.7 is underpinned by Amazon Bedrock’s next-generation inference engine, a testament to AWS’s commitment to providing robust, scalable, and secure infrastructure for generative AI. This engine is not merely a conduit for models; it is a meticulously engineered platform designed to meet the rigorous demands of enterprise production workloads.

A cornerstone of this new inference engine is its brand-new scheduling and scaling logic. This sophisticated system dynamically allocates capacity to incoming requests, leading to marked improvements in availability, particularly for steady-state workloads. Simultaneously, it intelligently makes room for rapidly scaling services, ensuring that businesses can adapt to fluctuating demand without compromising performance. This dynamic allocation is crucial for enterprises that experience variable loads, from peak shopping seasons to sudden surges in data processing needs.

Beyond performance, data privacy and security remain a top priority for AWS and Anthropic. The Bedrock inference engine boasts a "zero operator access" policy. This means that customer prompts and the corresponding model responses are never visible to either Anthropic or AWS operators. This stringent security measure ensures that sensitive corporate data, proprietary information, and confidential communications remain private, directly addressing one of the most significant concerns for enterprises adopting cloud-based AI solutions. This commitment to data isolation and privacy distinguishes Bedrock as a trusted platform for handling critical business operations, fostering greater confidence among organizations hesitant about exposing their data to third-party AI services.

A Strategic Partnership Forging the Future of AI

The integration of Claude Opus 4.7 into Amazon Bedrock is a continuation of a significant strategic alliance between AWS and Anthropic. This partnership, solidified by AWS’s substantial investment in Anthropic, aims to accelerate the development and deployment of safe and powerful AI systems. The collaboration positions AWS as a premier cloud provider for Anthropic’s cutting-edge models, while Anthropic benefits from AWS’s vast infrastructure, global reach, and extensive enterprise customer base.

This alliance also reflects a broader trend in the tech industry where cloud providers are forming deep partnerships with leading AI model developers to offer differentiated and highly competitive generative AI services. In a landscape increasingly dominated by major players like OpenAI (partnered with Microsoft Azure) and Google’s own Vertex AI offerings, the AWS-Anthropic collaboration creates a formidable alternative for enterprises seeking best-in-class AI solutions coupled with enterprise-grade security and scalability. The emphasis on "constitutional AI" from Anthropic—a methodology focused on training AI systems to be helpful, harmless, and honest—aligns well with AWS’s commitment to responsible AI development, providing an ethical foundation for powerful enterprise applications.

Navigating the Competitive Enterprise AI Landscape

The market for enterprise generative AI solutions is fiercely competitive, with cloud giants vying to offer the most compelling foundational models and development platforms. AWS Bedrock stands out by providing a fully managed service that makes foundational models from various leading AI companies accessible via a single API. This multi-model approach offers businesses flexibility and choice, allowing them to select the best model for their specific use case without being locked into a single provider.

The launch of Claude Opus 4.7 directly challenges offerings like OpenAI’s GPT-4 models on Azure OpenAI Service and Google’s Gemini models on Vertex AI. With Opus 4.7’s advanced reasoning and agentic capabilities, AWS is signaling its intent to capture a larger share of the high-value enterprise AI market, particularly for applications requiring sophisticated problem-solving and long-context understanding. The "zero operator access" feature is a crucial differentiator, appealing strongly to industries with strict regulatory compliance and data privacy requirements, such as finance, healthcare, and government. This focus on security and privacy is a strategic move to build trust and attract enterprises that might otherwise be hesitant to adopt public cloud AI services.

Empowering Developers: Access and Implementation

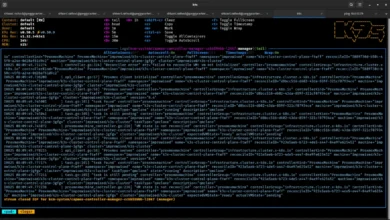

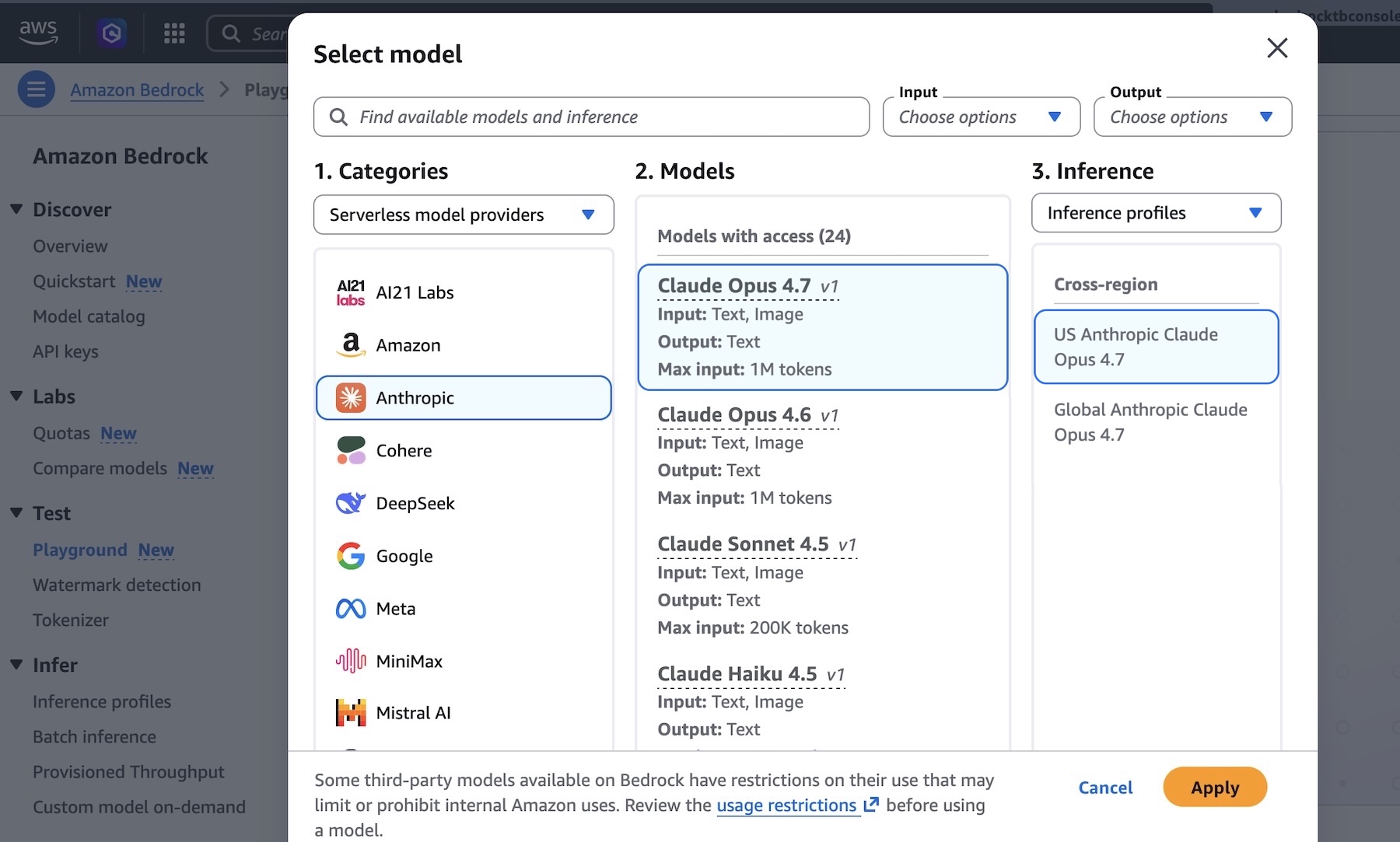

AWS has made Claude Opus 4.7 readily accessible to developers through multiple channels, ensuring ease of integration into existing workflows. Developers can begin experimenting with the model immediately via the Amazon Bedrock console, navigating to the "Playground" under the "Test" menu and selecting "Claude Opus 4.7." This interactive environment allows for quick prototyping and testing of complex prompts, such as the architecture design example provided:

Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions.

For programmatic access, developers have several robust options:

- Anthropic Messages API: The model can be invoked programmatically using the

bedrock-runtimeendpoint through the Anthropic SDK. An example using theanthropic[bedrock]SDK package illustrates a streamlined experience:from anthropic import AnthropicBedrockMantle # Initialize the Bedrock Mantle client (uses SigV4 auth automatically) mantle_client = AnthropicBedrockMantle(aws_region="us-east-1") # Create a message using the Messages API message = mantle_client.messages.create( model="us.anthropic.claude-opus-4-7", max_tokens=32000, messages=[ "role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions" ] ) print(message.content[0].text) - AWS CLI and SDKs: For those preferring AWS’s native tooling, the

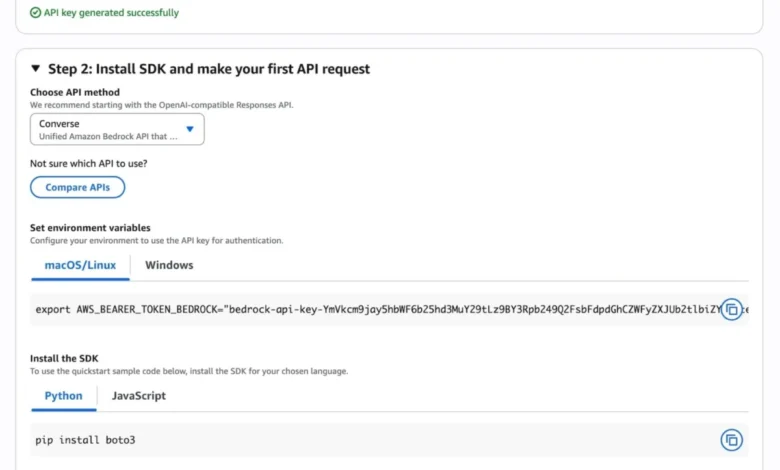

InvokeandConverse APIonbedrock-runtimecan be utilized via the AWS Command Line Interface (AWS CLI) and various AWS SDKs. A CLI example for invoking the model directly is:aws bedrock-runtime invoke-model --model-id us.anthropic.claude-opus-4-7 --region us-east-1 --body '"anthropic_version":"bedrock-2023-05-31", "messages": ["role": "user", "content": "Design a distributed architecture on AWS in Python that should support 100k requests per second across multiple geographic regions."], "max_tokens": 32000' --cli-binary-format raw-in-base64-out invoke-model-output.txtThese options provide flexibility for developers, whether they are integrating AI into existing Python applications, leveraging containerized environments, or orchestrating complex workflows using AWS services. The "Quickstart" option in the Bedrock console further simplifies the initial setup, allowing users to generate short-term API keys for testing and access sample codes for various API methods, including OpenAI-compatible responses.
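For teams using the AWS SDK for Python (Boto3), the Converse API offers a model-agnostic request shape on `bedrock-runtime`. The sketch below assumes the `us.anthropic.claude-opus-4-7` model ID and `us-east-1` region used in the examples above, and that AWS credentials are already configured; the helper names `build_converse_request` and `extract_text` are illustrative, not part of Boto3. Request construction and response parsing are kept separate from the network call so they can be checked without contacting AWS.

```python
MODEL_ID = "us.anthropic.claude-opus-4-7"  # model ID taken from the examples above
REGION = "us-east-1"


def build_converse_request(prompt: str, max_tokens: int = 32000) -> dict:
    """Build keyword arguments for the bedrock-runtime Converse API (illustrative helper)."""
    return {
        "modelId": MODEL_ID,
        # Converse messages carry a list of content blocks rather than a bare string.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def extract_text(response: dict) -> str:
    """Join the text blocks of a Converse response, skipping non-text blocks."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def main() -> None:
    """Send one request; requires boto3 and valid AWS credentials."""
    import boto3  # imported here so the helpers above work without boto3 installed

    client = boto3.client("bedrock-runtime", region_name=REGION)
    prompt = (
        "Design a distributed architecture on AWS in Python that should "
        "support 100k requests per second across multiple geographic regions."
    )
    response = client.converse(**build_converse_request(prompt))
    print(extract_text(response))
    print("Token usage:", response["usage"])
```

Calling `main()` sends the request. Because Converse uses the same message structure for every model it supports, switching models is largely a matter of changing `modelId`.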

Adaptive Thinking: Intelligent Resource Allocation for Complex Tasks

A notable feature available with Claude Opus 4.7 is "Adaptive Thinking." This capability allows Claude to dynamically allocate "thinking token budgets" based on the perceived complexity of each request. Instead of fixed computational resources, the model can intelligently determine how much "thought" or processing power is needed for a particular prompt. For simpler queries, it responds swiftly, conserving resources. For more intricate problems requiring deeper analysis and multi-step reasoning, it allocates more tokens, ensuring a thorough and accurate response. This optimization not only enhances the quality of output for complex tasks but also contributes to more efficient resource utilization and potentially faster response times for simpler interactions. This intelligent resource management is particularly beneficial in production environments where a wide range of query complexities is common.
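To make the idea concrete, the toy sketch below gives prompts that look more complex a larger reasoning budget. It is purely illustrative and does not reproduce Anthropic’s actual mechanism, which this article does not describe: the complexity signals, weights, and budget range (`estimate_complexity`, `thinking_budget`, 1,024 to 16,384 tokens) are all hypothetical.

```python
# Hypothetical keywords suggesting a prompt needs multi-step reasoning.
COMPLEX_HINTS = ("design", "architecture", "prove", "refactor", "debug", "multi-step")

MIN_BUDGET = 1_024   # hypothetical floor for simple requests
MAX_BUDGET = 16_384  # hypothetical ceiling for the hardest requests


def estimate_complexity(prompt: str) -> float:
    """Return a rough complexity score in [0, 1] from simple surface signals."""
    text = prompt.lower()
    length_score = min(len(text.split()) / 200, 1.0)  # longer prompts score higher
    hint_score = min(sum(hint in text for hint in COMPLEX_HINTS) / 3, 1.0)
    return 0.5 * length_score + 0.5 * hint_score


def thinking_budget(prompt: str) -> int:
    """Map complexity to a token budget by geometric interpolation between the bounds."""
    score = estimate_complexity(prompt)
    # score 0 -> MIN_BUDGET, score 1 -> MAX_BUDGET; each step in score scales the budget.
    budget = MIN_BUDGET * (MAX_BUDGET / MIN_BUDGET) ** score
    return int(budget)  # truncate to whole tokens
```

Geometric rather than linear interpolation keeps budgets small for the many simple queries while still reaching the ceiling for the hardest ones, which mirrors the trade-off described above between fast simple responses and thorough complex ones.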

Global Availability and Economic Considerations

Anthropic’s Claude Opus 4.7 model is immediately available in key AWS regions, including US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). This multi-region availability ensures that enterprises globally can leverage the model with low latency and in compliance with regional data residency requirements. AWS continues to update its list of available regions, indicating a broader rollout is likely in the future.
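When configuring SDK clients, the console region names above correspond to AWS region codes. The sketch below uses a hypothetical `pick_region` helper that returns the first preferred region appearing in the launch list given in this article, falling back to a default. Since AWS is expanding availability, the hard-coded set is a snapshot that should be refreshed from the Amazon Bedrock documentation.

```python
# Launch regions listed in this article, keyed by AWS region code.
# This snapshot will go stale as AWS adds regions; check the Bedrock docs.
LAUNCH_REGIONS = {
    "us-east-1": "US East (N. Virginia)",
    "ap-northeast-1": "Asia Pacific (Tokyo)",
    "eu-west-1": "Europe (Ireland)",
    "eu-north-1": "Europe (Stockholm)",
}


def pick_region(preferred: list[str], default: str = "us-east-1") -> str:
    """Return the first preferred region where the model is listed, else the default."""
    for code in preferred:
        if code in LAUNCH_REGIONS:
            return code
    return default
```

For example, a team whose data must stay in the EU could call `pick_region(["eu-central-1", "eu-west-1"])`, which returns `"eu-west-1"`; for strict residency requirements, raising an error instead of falling back to a US default would be the safer choice.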

The economic aspect of adopting advanced AI models is a critical consideration for enterprises. AWS provides detailed pricing information for Amazon Bedrock, which typically follows a pay-as-you-go model, allowing businesses to scale their AI consumption based on actual usage without significant upfront investments. This flexible pricing structure makes powerful models like Claude Opus 4.7 accessible to a wide range of organizations, from startups to large enterprises, enabling them to experiment and innovate without prohibitive costs. The cost-efficiency, combined with the power of Opus 4.7, positions Bedrock as an attractive platform for organizations looking to maximize their AI ROI.
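Because on-demand Bedrock usage for text models is metered by input and output tokens, a rough monthly estimate is simple arithmetic. The per-million-token prices in the sketch below are placeholders, not published rates for Claude Opus 4.7, and the workload figures are hypothetical; substitute the numbers from the Amazon Bedrock pricing page and your own traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrices:
    """USD per one million tokens. Values used below are PLACEHOLDERS, not real rates."""
    input_per_million: float
    output_per_million: float


def estimate_cost(prices: TokenPrices, input_tokens: int, output_tokens: int) -> float:
    """Return the on-demand cost in USD for the given token counts."""
    return (
        input_tokens * prices.input_per_million
        + output_tokens * prices.output_per_million
    ) / 1_000_000


# Hypothetical workload: 10,000 requests a month, ~2,000 input and ~1,000 output tokens each.
PLACEHOLDER_PRICES = TokenPrices(input_per_million=10.0, output_per_million=50.0)
monthly = estimate_cost(
    PLACEHOLDER_PRICES,
    input_tokens=10_000 * 2_000,
    output_tokens=10_000 * 1_000,
)
print(f"Estimated monthly cost: ${monthly:,.2f}")
```

With these placeholder figures the estimate works out to $700.00 per month; output tokens often dominate cost, so capping `max_tokens` is one of the most direct levers on spend.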

Broader Implications and Future Outlook

The launch of Claude Opus 4.7 on Amazon Bedrock has profound implications for the future of enterprise AI. It empowers businesses to:

- Accelerate Innovation: By providing a highly capable AI assistant for coding, research, and problem-solving, Opus 4.7 can significantly accelerate product development cycles, foster new service offerings, and drive innovation across various industries.

- Boost Productivity: Automation of complex, knowledge-intensive tasks can free up human capital to focus on strategic initiatives, leading to substantial gains in operational efficiency and employee productivity.

- Enhance Decision-Making: With its superior reasoning and data analysis capabilities, the model can provide deeper insights, helping businesses make more informed and data-driven decisions.

- Strengthen Security and Compliance: The "zero operator access" feature sets a new standard for data privacy in cloud AI, making Bedrock a preferred choice for industries with stringent security and compliance mandates.

- Democratize Advanced AI: By making such a powerful model accessible through a managed service, AWS lowers the barrier to entry for businesses to leverage state-of-the-art AI, fostering broader adoption and application across diverse sectors.

The continuous evolution of foundational models like Claude Opus 4.7, coupled with robust platforms like Amazon Bedrock, signifies a transformative period for artificial intelligence. As these models become more sophisticated, capable of handling increasingly complex and nuanced tasks, their integration into the fabric of enterprise operations will become indispensable. The collaboration between AWS and Anthropic, underscored by this launch, reaffirms their commitment to leading this revolution, offering tools that are not only powerful but also secure, scalable, and responsibly developed.

Enterprises are encouraged to explore the capabilities of Anthropic’s Claude Opus 4.7 in the Amazon Bedrock console today. Feedback channels, such as AWS re:Post for Amazon Bedrock and direct AWS Support contacts, remain open for users to share their experiences and contribute to the ongoing refinement of these cutting-edge AI services. The journey towards a more intelligent and automated enterprise future continues, with Claude Opus 4.7 on Bedrock marking a significant milestone along the way.