Wellfound Integrates AI Video Interviewing and Mux Transcription Technology to Transform Startup Recruitment and Candidate Assessment

The traditional resume, long considered the gold standard for job applications, is increasingly being viewed by industry experts as an insufficient predictor of long-term job fit and cultural alignment. Recognizing the limitations of paper-based credentials, Wellfound, the prominent startup job marketplace formerly known as AngelList Talent, has announced the launch of a new AI-driven video interview feature. This technological integration, built using the Mux video infrastructure, aims to provide candidates with a platform to showcase their personality and soft skills while offering recruiters a more efficient way to filter through high volumes of applications without succumbing to the "pedigree bias" that often plagues the hiring process.

For decades, the recruitment industry has relied on shortcuts to manage the overwhelming influx of applications. Recruiters often prioritize candidates from recognizable "brand-name" companies or prestigious universities, a practice that frequently results in qualified talent being overlooked simply because they lack a traditional background. Wellfound’s new initiative seeks to disrupt this cycle by allowing candidates to record a single, comprehensive AI-facilitated interview that can be shared across multiple job applications on the platform. By leveraging advanced transcription and clipping technology, the feature ensures that human recruiters can quickly access relevant insights without the need to watch hours of unedited footage.

The Architecture of the AI Video Interview

The AI video interview feature is designed to function as an initial human resources screener. Rather than requiring a separate interview for every job application—a process that would likely lead to "candidate fatigue"—Wellfound allows users to complete one foundational interview. This session covers broad but essential topics, such as a candidate’s career aspirations, recent project experiences, and problem-solving methodologies.

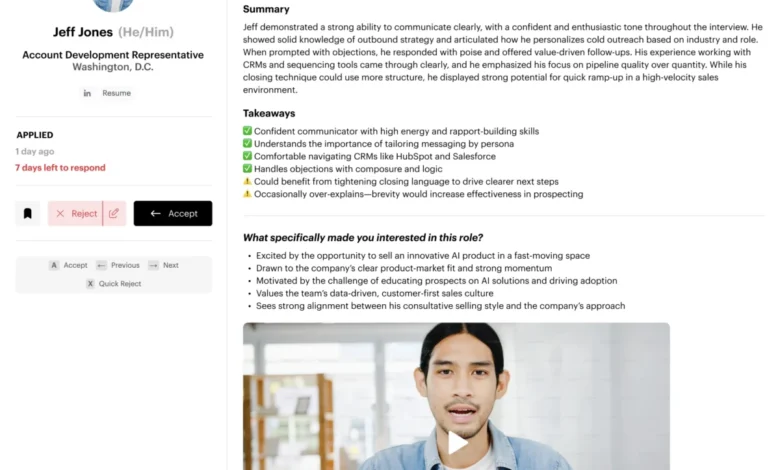

The "AI" component of the interview acts as an intelligent moderator. During the recording, the system monitors the candidate’s responses in real time. If the AI detects that a prompt was not fully addressed, or identifies an opportunity for the candidate to elaborate on a specific skill, it generates follow-up questions. This dynamic interaction mimics a live screening call, so the final recording gives a fuller view of the applicant’s capabilities.
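The follow-up behavior described above can be illustrated with a minimal Python sketch. Wellfound has not published how its moderator decides that a prompt was "not fully addressed," so everything here is an assumption: the function names, the `expected_points` structure, and especially the keyword match, which is a deliberately simple stand-in for whatever language-model judgment the real system uses.

```python
def missing_points(answer: str, expected_points: dict[str, list[str]]) -> list[str]:
    """Return the expected points that none of their keywords appear for.

    Hypothetical stand-in: a production system would likely ask a language
    model whether the answer covers each point, not match substrings.
    """
    lowered = answer.lower()
    return [
        point
        for point, keywords in expected_points.items()
        if not any(keyword in lowered for keyword in keywords)
    ]


def follow_up_questions(answer: str, expected_points: dict[str, list[str]]) -> list[str]:
    """Turn each uncovered point into a follow-up prompt."""
    return [
        f"Could you say more about {point}?"
        for point in missing_points(answer, expected_points)
    ]


# Illustrative data only: the points and keywords are invented for this sketch.
expected_points = {
    "your role on the project": ["i led", "my role", "i was responsible"],
    "the outcome": ["result", "outcome", "shipped"],
}
answer = "I led the migration to a new billing system last year."

for question in follow_up_questions(answer, expected_points):
    print(question)
```

In this example the answer covers the candidate's role but never mentions how the project turned out, so the only follow-up generated concerns the outcome.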

Amit Matani, CEO of Wellfound, emphasized that the goal is to bridge the gap between digital profiles and human reality. "The hope was that we could help candidates tell their story better and companies could see this is a real person on the other side," Matani stated. Importantly, while the interview is facilitated by AI, the final hiring decisions remain in human hands. Hiring teams review the curated clips and summaries, ensuring that the "human element" of recruiting is enhanced rather than replaced by automation.

Technical Evolution: Solving the Video Engagement Problem

The concept of asynchronous video interviews is not entirely new to Wellfound. Several years ago, the company experimented with a version where candidates recorded answers to static questions. However, the feature failed to gain traction because of a fundamental bottleneck: recruiter time. In a high-volume hiring environment, recruiters were unwilling to sit through 15-minute unedited videos for every applicant.

The current iteration solves this engagement problem through the integration of Mux’s transcription and video processing APIs. The workflow follows a four-step pipeline:

- Automated Transcription: Immediately following the interview, the video is processed through Mux to generate a highly accurate transcript.

- LLM Summarization: Wellfound runs these transcripts through a Large Language Model (LLM) to extract key takeaways, summarize long-form answers, and identify specific "highlight" moments.

- Frame-Accurate Clipping: Using the context provided by the transcript, the system utilizes Mux’s clipping feature to segment the video into specific topics. This allows recruiters to jump directly to an answer about "leadership experience" or "technical stack" without scrubbing through the entire file.

- Accessibility and Skimmability: The system generates auto-captions, allowing recruiters to review interviews in environments where audio might not be feasible, such as a crowded office or during a commute.
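The step from transcript to topic-based clips can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Wellfound's implementation: the `Cue` and `Clip` types, the `clip_ranges` function, and the idea that the LLM step attaches a `topic` label to each timestamped transcript cue are all hypothetical. No Mux API calls are shown; the sketch only computes the time ranges that a clipping step would consume.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    start: float  # seconds from the start of the recording
    end: float
    text: str
    topic: str    # hypothetical label assigned by the LLM summarization step


@dataclass
class Clip:
    topic: str
    start: float
    end: float


def clip_ranges(cues: list[Cue]) -> list[Clip]:
    """Merge consecutive cues that share a topic into one clip range."""
    clips: list[Clip] = []
    for cue in sorted(cues, key=lambda c: c.start):
        if clips and clips[-1].topic == cue.topic:
            # Same topic as the previous cue: extend the current clip.
            clips[-1].end = max(clips[-1].end, cue.end)
        else:
            # Topic changed: start a new clip at this cue.
            clips.append(Clip(cue.topic, cue.start, cue.end))
    return clips


# Illustrative transcript cues, invented for this sketch.
cues = [
    Cue(0.0, 6.5, "I led a team of four engineers.", "leadership experience"),
    Cue(6.5, 14.0, "We shipped the redesign in a quarter.", "leadership experience"),
    Cue(14.0, 22.0, "Most of my work is in Python and Postgres.", "technical stack"),
]

for clip in clip_ranges(cues):
    print(f"{clip.topic}: {clip.start:.1f}s to {clip.end:.1f}s")
```

Keeping the ranges as plain data separates the "what to clip" decision, which depends on the transcript and the LLM, from the "how to clip" step, which is delegated to the video platform.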

This shift from "raw video" to "structured data" has significantly altered the utility of video in recruitment. By making video skimmable, Wellfound has transformed it into a high-signal tool that rivals the speed of reading a resume while providing much deeper context.

From Prototype to Production: A One-Week Chronology

The development of the AI video interview feature serves as a case study in modern "vibe coding" and rapid iteration. The project moved from a conceptual framework to a live product in approximately seven days, a timeline made possible by the accessibility of modern video APIs.

The project began on a Friday, when Amit Matani decided to test the feasibility of the idea himself. Using Mux’s documentation and API, Matani "vibe coded" the initial prototype over the weekend. By Monday morning, he had a functioning proof of concept that demonstrated the core workflow of recording, transcribing, and summarizing.

Throughout the remainder of the week, Wellfound’s engineering team took over the prototype, cleaning the codebase, ensuring scalability, and integrating the feature into the existing marketplace infrastructure. The team noted that the straightforward nature of the Mux API removed traditional barriers to entry for video development, which historically required specialized knowledge of codecs, latency, and storage protocols.

Matani noted that the fair pricing model, particularly the "Cold Storage" feature, was a deciding factor in the product’s long-term viability. In the recruitment lifecycle, a video interview is highly active for a few weeks but may remain untouched for months once a candidate is hired. Mux’s ability to automatically move inactive videos into a cheaper storage tier allows Wellfound to maintain a "candidate-first" repository without incurring prohibitive infrastructure costs.

Market Data and the Need for Better Screening Tools

The launch of Wellfound’s AI video feature comes at a time when the recruitment industry is facing a crisis of volume. According to a widely cited Glassdoor figure, the average corporate job opening receives approximately 250 resumes, and only 4 to 6 of those candidates are typically invited to interview. Eye-tracking research from the job site Ladders suggests that recruiters spend an average of six to seven seconds on an initial resume "scan" before deciding whether to reject or move forward.
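Taken together, these figures imply a very narrow funnel. The short Python calculation below works through the arithmetic using only the numbers quoted above; the function names are illustrative.

```python
RESUMES_PER_OPENING = 250
INTERVIEWS_INVITED = (4, 6)   # low and high ends of the quoted range
SCAN_SECONDS = (6, 7)         # low and high ends of the quoted scan time


def interview_rate(invited: int, total: int) -> float:
    """Fraction of applicants who are invited to interview."""
    return invited / total


def scan_minutes(total: int, seconds_each: int) -> float:
    """Total minutes spent on first-pass scans for one opening."""
    return total * seconds_each / 60


for invited in INTERVIEWS_INVITED:
    rate = interview_rate(invited, RESUMES_PER_OPENING)
    print(f"{invited} interviews: {rate:.1%} of applicants")

for seconds in SCAN_SECONDS:
    minutes = scan_minutes(RESUMES_PER_OPENING, seconds)
    print(f"{seconds}s per resume: {minutes:.1f} minutes per opening")
```

At these rates, roughly 1.6 to 2.4 percent of applicants reach an interview, and the first-pass review of an entire applicant pool takes under half an hour.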

This rapid-fire screening process inherently favors candidates with "prestige markers." A field experiment published by the National Bureau of Economic Research (NBER) found that resumes with "white-sounding" names received significantly more callbacks than otherwise identical resumes with African American-sounding names, and the preference for elite university credentials described above works in a similar way. By providing a video-first option, Wellfound is attempting to mitigate this bias.

Early feedback from recruiters using the platform suggests that the AI interview has already influenced hiring decisions. Wellfound reports that several hiring managers have matched with candidates they would otherwise have rejected based on their resumes. Conversely, the video clips have helped recruiters identify when a candidate who looked perfect "on paper" lacked the communication style or cultural alignment required for a particular startup environment.

Impact on Candidate Experience and Feedback Loops

One of the most persistent complaints in the modern job market is the "black hole" of applications—the phenomenon where candidates apply for dozens of roles and receive no communication in return. Wellfound has addressed this by using the AI-generated transcripts to provide immediate, constructive feedback to the candidates themselves.

After a candidate completes the AI interview, the system generates notes for them, highlighting strengths and identifying areas for improvement in future interviews. This feature has proven to be one of the most popular aspects of the tool. In an era of automated rejections, tangible, honest feedback gives candidates something of value even if they are not immediately hired for their first-choice role.

Broader Implications and the Future of Humanized Recruiting

The success of the AI video interview has prompted Wellfound to explore further applications of video technology across its platform. The company is currently deploying a similar Mux-powered pipeline for company pitch videos. In this scenario, startup founders record short segments explaining their mission and company culture. These clips are then included in candidate outreach, allowing potential hires to "meet" their future leadership before even applying.

Future updates are expected to include video integrations directly on company profile pages, allowing candidates to see "day-in-the-life" segments and meet potential colleagues. This shift toward a video-centric marketplace represents a broader trend in the tech industry: the move away from static data points toward rich, multimedia storytelling.

As AI continues to permeate the recruitment landscape, the Wellfound model suggests that the most effective use of the technology is not to replace human judgment, but to prepare and present data in a way that makes human judgment more accurate and less biased. By reducing the friction of video production and consumption, Wellfound and Mux are creating a recruitment environment where a candidate’s story is just as important as their school’s name.

The broader implication for the HR tech industry is clear: the resume is no longer the final word. As tools for capturing and analyzing human interaction become more accessible, the "paper credential" may eventually become a secondary supplement to the "digital persona." For the thousands of qualified candidates who do not fit the traditional mold of a Silicon Valley hire, this technological shift offers a long-awaited opportunity to be seen and heard on their own terms.