AWS Simplifies AI Model Deployment with SageMaker HyperPod Inference Operator as Native EKS Add-on

Amazon Web Services (AWS) has made the Amazon SageMaker HyperPod Inference Operator available as a native Amazon Elastic Kubernetes Service (EKS) add-on. The release substantially simplifies deploying and managing the lifecycle of machine learning (ML) models on Kubernetes-native infrastructure, and AWS presents it as a step toward making high-performance AI inference accessible to more teams. For AI teams running inference workloads, it promises faster time to value, lower operational complexity, and more control over resource utilization.

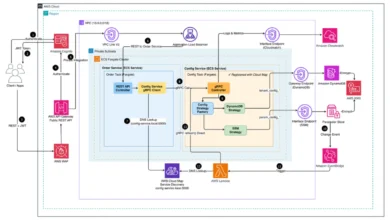

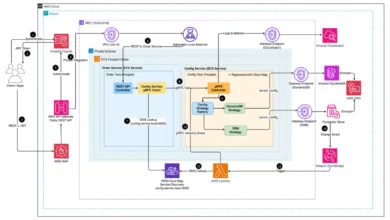

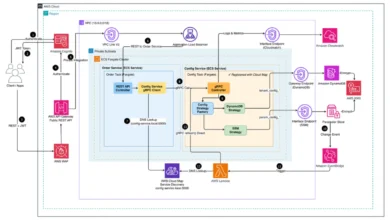

AI adoption across industries has sharply increased demand for robust, scalable, and efficient ML model deployment. From large language models (LLMs) to complex computer vision systems, organizations want to move trained models from experimentation to production with minimal friction. Amazon SageMaker HyperPod, a purpose-built capability for accelerating distributed training and experimentation, already supports the full AI development lifecycle, including interactive experimentation, training, inference, and post-training workflows.

The SageMaker HyperPod Inference Operator extends this capability. It is a Kubernetes controller that manages the deployment and lifecycle of models within HyperPod clusters. It offers several deployment interfaces (kubectl, the Python SDK, the SageMaker Studio UI, and a dedicated HyperPod CLI), autoscaling with dynamic resource allocation, and observability features that track metrics such as time-to-first-token, latency, and GPU utilization.

Historically, deploying inference workloads on Kubernetes-native infrastructure has been challenging for AI teams. The process often involved a labyrinth of manual configuration: intricate Helm charts, complex Identity and Access Management (IAM) role setups, tedious dependency management, and manual upgrades that risked downtime. This setup could take hours, if not days, before a single model was ready to serve predictions in production. The operational overhead diverted engineering resources from core ML development and slowed innovation cycles, making it harder for organizations to iterate quickly and ship new AI capabilities.

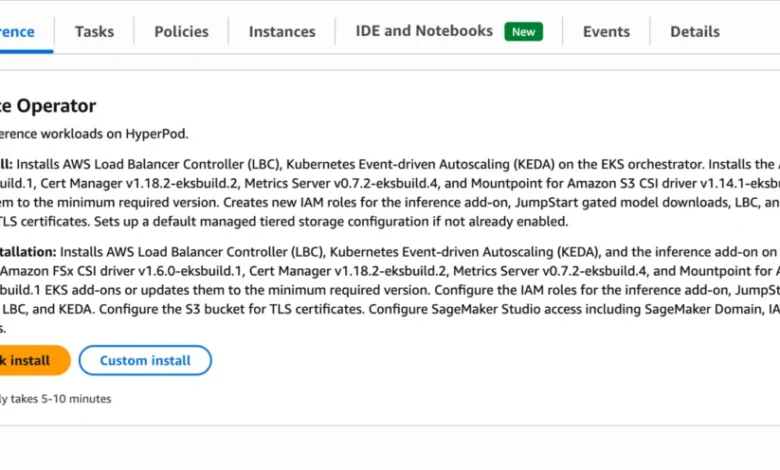

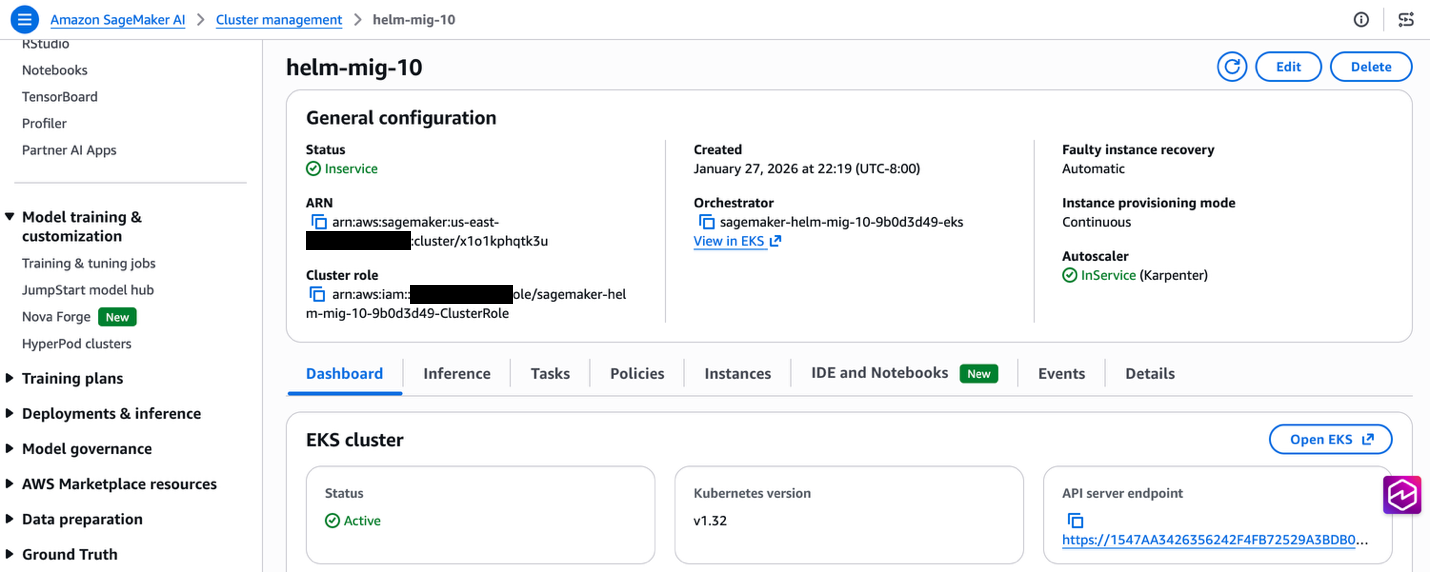

The newly announced Amazon SageMaker HyperPod Inference Operator as a native EKS add-on directly addresses these pain points. It enables one-click installation and managed upgrades directly from the SageMaker console, effectively eliminating the need for manual Helm chart deployments, intricate IAM configuration tweaks, and the often-dreaded downtime during upgrades. This strategic integration with EKS, AWS’s managed Kubernetes service, positions HyperPod as an even more compelling platform for enterprises looking to scale their AI initiatives without getting bogged down in infrastructure management.

Revolutionizing AI Deployment: From Complexity to One-Click Simplicity

The core promise of this new offering is a dramatically simplified installation experience, designed to cater to various customer scenarios. For organizations setting up new HyperPod clusters, the process is now almost entirely automated. When creating new clusters through the SageMaker console’s Quick Setup or Custom Setup workflows, the Inference Operator, along with all necessary dependencies, is automatically installed as an EKS add-on during the cluster creation process. This ensures that a new HyperPod cluster is ready for model deployments immediately upon creation, complete with integrated one-click upgrade paths.

Existing HyperPod cluster users also benefit from this simplification. They can install the Inference Operator with a single click via the SageMaker console. This automated installation process takes care of several critical configurations behind the scenes: it automatically creates necessary IAM roles and policies, configures Amazon S3 buckets, establishes VPC endpoints, and installs crucial dependency add-ons like the AWS Load Balancer Controller, KEDA, and CSI drivers. This comprehensive automation stands in stark contrast to previous methods that required extensive manual intervention and deep Kubernetes expertise. An AWS spokesperson emphasized, "This enhancement directly responds to customer feedback regarding the heavy operational lift involved in deploying AI models. By automating these foundational steps, we are enabling ML engineers to focus on model innovation rather than infrastructure plumbing, reducing deployment time from hours to mere minutes."

A Deep Dive into Streamlined Installation and Lifecycle Management

Packaging the operator as an EKS add-on brings standardized version management and one-click upgrades, available through both the AWS console and the CLI. Customers can adopt new features and critical security updates without complex manual procedures or risking system instability, and because EKS manages the add-on's versions, the misconfigurations that often creep into manual updates become less likely.

For installation, AWS provides three primary methods, catering to different operational preferences:

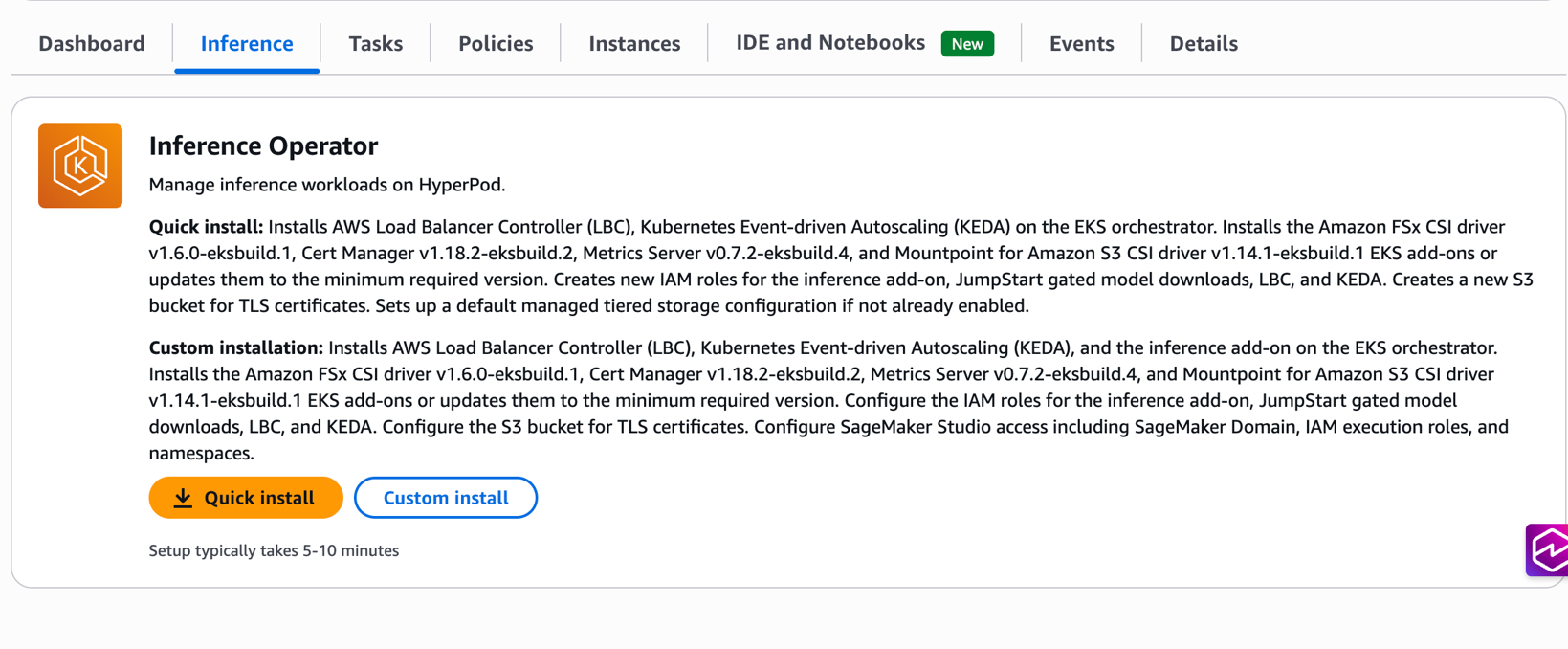

- SageMaker UI (Recommended): This offers the most streamlined experience with two distinct options. The "Quick install" automatically provisions all required resources with optimized defaults, including IAM roles, S3 buckets, and dependency add-ons. This is ideal for rapid prototyping and initial deployments. For organizations with specific governance or existing infrastructure, the "Custom install" provides flexibility to specify existing resources or customize configurations while still benefiting from the one-click experience. Users can reuse existing IAM roles, S3 buckets, or dependency add-ons based on their organizational requirements.

- EKS APIs (CLI): For customers who prefer command-line workflows and programmatic control, the Inference Operator can be installed directly through the EKS APIs, for example with the AWS CLI. This method requires all prerequisite resources, such as IAM roles, S3 buckets, VPC endpoints, and dependency add-ons, to be created manually beforehand. DevOps teams often favor this approach when integrating deployment into existing CI/CD pipelines.

- Terraform Deployment: Organizations leveraging Infrastructure as Code (IaC) principles can deploy HyperPod clusters using the Terraform modules available in the `awsome-distributed-training` GitHub repository. By setting the `create_hyperpod_inference_operator_module` variable to `true` in their `custom.tfvars` file, users can automate the deployment of the Inference Operator add-on alongside other optional add-ons such as task governance, the training operator, and observability. This method ensures consistent, repeatable deployments across environments and aligns with enterprise-grade IaC practices.
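For teams taking the EKS API route, the installation call can be scripted. The sketch below uses boto3's EKS client method `create_addon`, which is a real API; the add-on name, cluster name, and role ARN are hypothetical placeholders because the article does not give them (the real add-on name can be looked up with `aws eks describe-addon-versions`). Whether this add-on expects an IRSA role via `serviceAccountRoleArn` rather than EKS Pod Identity associations is also an assumption to verify. The request builder is kept separate from the network call so it can be inspected without AWS credentials.

```python
from typing import Optional


def build_create_addon_request(
    cluster_name: str,
    addon_name: str,
    service_account_role_arn: str,
    addon_version: Optional[str] = None,
) -> dict:
    """Build keyword arguments for boto3's EKS `create_addon` call.

    With the EKS API path, the IAM role, S3 bucket, VPC endpoints, and
    dependency add-ons must already exist; this helper only assembles the
    request and does not create any of them.
    """
    request = {
        "clusterName": cluster_name,
        "addonName": addon_name,
        # Assumption: the add-on uses an IRSA role passed via serviceAccountRoleArn.
        "serviceAccountRoleArn": service_account_role_arn,
    }
    if addon_version is not None:
        # Pin an explicit version; when omitted, EKS installs its default version.
        request["addonVersion"] = addon_version
    return request


def install_addon(request: dict, region: str) -> dict:
    """Submit the request to EKS. Needs boto3 and valid AWS credentials."""
    import boto3  # imported here so the request builder works without boto3

    eks = boto3.client("eks", region_name=region)
    return eks.create_addon(**request)


# Example with hypothetical placeholder values (not real resource names):
# req = build_create_addon_request(
#     "my-hyperpod-eks-cluster",
#     "<inference-operator-addon-name>",
#     "arn:aws:iam::111122223333:role/ExampleInferenceOperatorRole",
# )
# install_addon(req, region="us-west-2")
```

Upgrades go through the same API family: boto3's `update_addon` takes `clusterName`, `addonName`, and a new `addonVersion`.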

The HyperPod Inference Operator also manages several critical dependencies, which are enabled by default but can be turned off if they already exist on the EKS cluster: cert-manager for certificate management, the Amazon FSx for Lustre CSI driver and the Mountpoint for Amazon S3 CSI driver for high-performance storage integration, the AWS Load Balancer Controller for external access, and the KEDA Operator for Kubernetes-native event-driven autoscaling. This dependency management further relieves AI teams of configuring and maintaining these foundational Kubernetes components.
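To make the KEDA-based autoscaling less abstract: between one and N replicas, KEDA feeds its metrics to a Horizontal Pod Autoscaler (HPA) that it manages (KEDA itself handles activation to and from zero), and the HPA applies the documented rule desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), skipping the change when the ratio is within a tolerance of 0.1 by default. The sketch below reproduces that arithmetic with min/max clamping. Which metric HyperPod actually scales on is not stated in the article, so the "queued requests" example is an assumption.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """HPA-style replica count for a per-replica average metric.

    current_metric: observed average per replica (e.g. queued requests, assumed).
    target_metric:  the per-replica value the autoscaler aims for.
    """
    if current_replicas <= 0 or target_metric <= 0:
        raise ValueError("current_replicas and target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to target: keep the current size to avoid flapping.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds (KEDA's minReplicaCount/maxReplicaCount).
    return max(min_replicas, min(max_replicas, desired))


# 2 replicas each seeing 150 queued requests against a target of 50 -> 6 replicas.
print(desired_replicas(2, 150, 50))
```

The tolerance band is what keeps a deployment from oscillating when load hovers near the target.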

Enhancing Performance and Control: Advanced Inference Capabilities

Beyond simplified installation, the SageMaker HyperPod Inference Operator integrates seamlessly with a suite of advanced features designed to optimize model performance, resource utilization, and operational control.

One of the standout new features is Multi-Instance Type Deployment, which improves deployment reliability and resource utilization. AI teams can specify a prioritized list of instance types in their deployment configuration, and if the preferred type lacks capacity, the system falls back to the next available alternative. For example, if ml.p4d.24xlarge is the top priority but unavailable, the scheduler falls back to ml.g5.24xlarge, and then to ml.g5.8xlarge as a last resort. This fallback helps maintain high availability and cost efficiency in cloud environments where instance capacity fluctuates. It is implemented with Kubernetes node affinity rules: `requiredDuringSchedulingIgnoredDuringExecution` restricts scheduling to the specified instance types, and `preferredDuringSchedulingIgnoredDuringExecution` terms with descending weights steer the scheduler toward the highest-priority type that has capacity.
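The two affinity rules can be sketched as a plain Kubernetes `nodeAffinity` object. This is an illustration of the technique, not the operator's generated output: the label key `node.kubernetes.io/instance-type` is the standard Kubernetes instance-type label, and the assumption is that HyperPod nodes carry it with `ml.*` values. Kubernetes allows preferred-term weights from 1 to 100, so the sketch spreads them evenly across that range. `first_available` models only the intended fallback; the real scheduler adds these weights to other scoring plugins.

```python
from typing import Dict, Iterable, List, Optional

# Assumption: HyperPod nodes carry the standard instance-type label with
# values such as "ml.g5.8xlarge".
INSTANCE_TYPE_LABEL = "node.kubernetes.io/instance-type"


def _match(values: List[str]) -> Dict:
    return {"matchExpressions": [
        {"key": INSTANCE_TYPE_LABEL, "operator": "In", "values": values}
    ]}


def priority_node_affinity(instance_types: List[str]) -> Dict:
    """Hard-restrict to the listed types, softly prefer earlier ones."""
    n = len(instance_types)
    if not 1 <= n <= 100:
        raise ValueError("need between 1 and 100 instance types (weights are 1..100)")
    # Spread weights evenly from 100 down toward 1 so priorities stay distinct.
    step = 99 // (n - 1) if n > 1 else 0
    return {"nodeAffinity": {
        # Hard rule: only nodes of a listed type are eligible at all.
        "requiredDuringSchedulingIgnoredDuringExecution": {
            "nodeSelectorTerms": [_match(list(instance_types))]
        },
        # Soft rule: one term per type, higher weight for higher priority.
        "preferredDuringSchedulingIgnoredDuringExecution": [
            {"weight": 100 - i * step, "preference": _match([t])}
            for i, t in enumerate(instance_types)
        ],
    }}


def first_available(instance_types: Iterable[str], available: Iterable[str]) -> Optional[str]:
    """Idealized fallback: the highest-priority type that has capacity.

    The real scheduler combines these weights with other scoring plugins,
    so this is the intended outcome, not a guarantee.
    """
    free = set(available)
    for t in instance_types:
        if t in free:
            return t
    return None
```

With the article's example list, the weights come out as 100, 51, and 2, and if only the two ml.g5 types have capacity, the idealized choice is ml.g5.24xlarge.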

For scenarios demanding even more granular scheduling control, such as excluding spot instances, preferring specific availability zones, or targeting nodes with custom labels, HyperPod Inference exposes Kubernetes’ native nodeAffinity directly in the InferenceEndpointConfig specification. This empowers users with the full expressiveness of Kubernetes scheduling primitives, allowing them to fine-tune where their inference workloads run based on cost, performance, or compliance requirements.
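As an illustration of the rules such a field can express, the sketch below builds a standard Kubernetes `nodeAffinity` object that excludes spot capacity, requires a custom label, and prefers one availability zone. `topology.kubernetes.io/zone` is a standard Kubernetes label. The capacity-type key (`eks.amazonaws.com/capacityType`, which EKS managed node groups set) and the `example.com/workload-tier` label are assumptions, since the article does not say which labels HyperPod nodes carry; where the object sits inside `InferenceEndpointConfig` should be checked in the documentation. A small matcher shows the semantics: expressions inside one term are ANDed, and `NotIn` also matches nodes that lack the key entirely.

```python
from typing import Dict, List, Optional


def custom_node_affinity(
    preferred_zone: Optional[str] = None,
    required_labels: Optional[Dict[str, str]] = None,
    capacity_type_key: str = "eks.amazonaws.com/capacityType",  # assumed key
) -> Dict:
    # All expressions in a single nodeSelectorTerm must hold (logical AND).
    expressions: List[Dict] = [
        {"key": capacity_type_key, "operator": "NotIn", "values": ["SPOT"]},
    ]
    for key, value in sorted((required_labels or {}).items()):
        expressions.append({"key": key, "operator": "In", "values": [value]})
    affinity: Dict = {
        "requiredDuringSchedulingIgnoredDuringExecution": {
            "nodeSelectorTerms": [{"matchExpressions": expressions}]
        }
    }
    if preferred_zone is not None:
        # Soft preference: other zones remain eligible if this one is full.
        affinity["preferredDuringSchedulingIgnoredDuringExecution"] = [
            {"weight": 50, "preference": {"matchExpressions": [
                {"key": "topology.kubernetes.io/zone", "operator": "In",
                 "values": [preferred_zone]}
            ]}}
        ]
    return {"nodeAffinity": affinity}


def node_satisfies(node_labels: Dict[str, str], affinity: Dict) -> bool:
    """Evaluate the required rule for In/NotIn only (terms are ORed)."""
    terms = affinity["nodeAffinity"]["requiredDuringSchedulingIgnoredDuringExecution"]["nodeSelectorTerms"]

    def expr_ok(e: Dict) -> bool:
        present = e["key"] in node_labels
        if e["operator"] == "In":
            return present and node_labels[e["key"]] in e["values"]
        if e["operator"] == "NotIn":
            # A node without the label is not excluded by NotIn.
            return not present or node_labels[e["key"]] not in e["values"]
        raise ValueError(f"operator not modelled here: {e['operator']}")

    return any(all(expr_ok(e) for e in t["matchExpressions"]) for t in terms)
```

The absent-key behavior of `NotIn` matters in practice: if HyperPod nodes do not carry the assumed capacity-type label, this rule silently excludes nothing.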

The simplified installation experience also integrates with existing advanced HyperPod inference capabilities. Managed tiered KV cache can optionally be enabled during installation, with memory allocation tuned to the instance type. It is particularly useful for long-context workloads, where it can reduce inference latency by up to 40% while optimizing memory utilization across the cluster. The installation also configures intelligent routing with multiple strategies (prefix-aware, KV-aware, and round-robin) to maximize cache efficiency and minimize inference latency based on a workload's characteristics.

Observability integration is built in as well, providing immediate visibility into inference metrics, cache performance, and routing efficiency through Amazon Managed Grafana dashboards, so operations teams can monitor the health and performance of deployed models in real time.
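To make the difference between routing strategies concrete, here is a toy comparison of round-robin and prefix-aware routing. It is not HyperPod's router: it matches prompts on fixed-size character blocks (real systems work on token blocks), it does not model KV-aware routing, which would need live cache state from the model servers, and the replica names are made up. The idea it illustrates is that requests sharing a long prefix, such as a common system prompt, go to the replica that has likely already cached that prefix's KV entries, while round-robin spreads them out and gives up that reuse.

```python
from typing import Dict, List, Set


class RoundRobinRouter:
    """Cycle through replicas regardless of prompt content."""

    def __init__(self, replicas: List[str]):
        self.replicas = replicas
        self._next = 0

    def route(self, prompt: str) -> str:
        replica = self.replicas[self._next % len(self.replicas)]
        self._next += 1
        return replica


class PrefixAwareRouter:
    """Send a prompt to the replica that has seen most of its prefix blocks."""

    def __init__(self, replicas: List[str], block_chars: int = 16):
        self.replicas = replicas
        self.block_chars = block_chars
        self.cached: Dict[str, Set[str]] = {r: set() for r in replicas}
        self.load: Dict[str, int] = {r: 0 for r in replicas}

    def _prefixes(self, prompt: str) -> List[str]:
        # Block-aligned prefixes: prompt[:16], prompt[:32], ...
        return [prompt[:end] for end in range(self.block_chars, len(prompt) + 1, self.block_chars)]

    def route(self, prompt: str) -> str:
        prefixes = self._prefixes(prompt)

        def score(replica: str):
            # Most matched blocks first; break ties by lowest load.
            matched = sum(1 for p in prefixes if p in self.cached[replica])
            return (matched, -self.load[replica])

        best = max(self.replicas, key=score)
        self.cached[best].update(prefixes)  # assume the replica now caches them
        self.load[best] += 1
        return best
```

The tie-break on load keeps unrelated prompts from piling onto one replica, which is the usual tension in prefix-aware routing: cache affinity versus load balance.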

Seamless Transition: Empowering Existing Users with a Clear Migration Path

Recognizing that many customers have already adopted the HyperPod Inference Operator via Helm charts, AWS has provided an automated migration script. This script, hosted in a public GitHub repository, facilitates a smooth transition from Helm-based deployments to the new EKS add-on model. The script includes built-in rollback capabilities, ensuring that if the add-on installation encounters issues, the system can revert to its previous state, minimizing disruption. Backup files are also stored for manual rollback if needed, offering an additional layer of safety.

The migration script automates several key steps: it uninstalls the Helm chart, migrates all existing custom resources (CRs) to the EKS add-on, backs up configurations, and provides clear step-by-step guidance or automated execution options. This proactive approach by AWS ensures that existing users can easily upgrade to the more streamlined and managed EKS add-on experience without re-architecting their entire MLOps pipelines. The benefits of migration are substantial, including simplified upgrades, improved security posture through managed IAM roles, reduced operational overhead, and consistent resource management. This commitment to backward compatibility and smooth transitions underscores AWS’s dedication to supporting its diverse customer base.
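The rollback behavior described for the migration script follows a common pattern: run steps in order, record the ones that completed, and on failure undo them in reverse. The sketch below shows that pattern in isolation. It is not the AWS script; the step names and undo actions are hypothetical stand-ins, and running the backup first is an assumption about ordering.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str
    apply: Callable[[], None]
    undo: Callable[[], None]


def run_with_rollback(steps: List[Step], log: List[str]) -> bool:
    """Apply steps in order; on failure, undo completed steps in reverse.

    The failing step's own partial effects are not undone here, which is
    why keeping backup files for a manual rollback is still useful.
    """
    done: List[Step] = []
    for step in steps:
        try:
            step.apply()
        except Exception as exc:
            log.append(f"failed: {step.name} ({exc})")
            for finished in reversed(done):
                finished.undo()
                log.append(f"rolled back: {finished.name}")
            return False
        done.append(step)
        log.append(f"done: {step.name}")
    return True


# Hypothetical usage: the add-on install fails, so the Helm release is restored.
# steps = [
#     Step("back up configs", backup, lambda: None),
#     Step("uninstall Helm release", uninstall_helm, reinstall_helm),
#     Step("install EKS add-on", install_addon, remove_addon),
# ]
```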

Broader Implications for the AI Ecosystem and MLOps Landscape

The introduction of the SageMaker HyperPod Inference Operator as a native EKS add-on represents more than just a product enhancement; it signifies a broader strategic move within the AI and MLOps landscape. By drastically reducing the complexity associated with deploying and managing AI inference workloads on Kubernetes, AWS is effectively democratizing access to high-performance, scalable AI infrastructure. This will enable a wider range of organizations, including those with limited specialized Kubernetes expertise, to leverage sophisticated AI models in production.

The shift toward managed services for complex infrastructure components is a growing trend, and this announcement places AWS at the forefront of simplifying MLOps. By some industry estimates, MLOps engineers spend up to 40% of their time on infrastructure setup and maintenance rather than on core ML work. Automating these tasks lets businesses shorten their AI innovation cycles, bring new products and services to market faster, and get a greater return on their AI investments. In addition, finer control over resource allocation, through features like multi-instance type deployment and node affinity, can improve cost efficiency for GPU-intensive workloads by keeping expensive hardware better utilized.

This development also reinforces AWS’s comprehensive strategy for AI/ML, which focuses on providing a full spectrum of tools—from data preparation and model training to deployment and monitoring. By deeply integrating HyperPod inference with EKS, AWS is offering a powerful, scalable, and operationally simplified platform that can meet the rigorous demands of modern AI applications, cementing its position as a leading cloud provider for enterprise AI.

Conclusion: Driving the Future of AI with Simplified Operations

The streamlined Inference Operator installation experience for Amazon SageMaker HyperPod is a game-changer for machine learning teams. By eliminating infrastructure complexity and accelerating time to value, it empowers organizations to focus their efforts on what truly matters: developing, deploying, and optimizing their inference workloads. The one-click installation, automated resource management, and seamless upgrade capabilities mean that the operational burden is significantly reduced, allowing engineers to dedicate more time to innovation.

The EKS add-on integration provides enterprise-grade lifecycle management while retaining the flexibility to customize configurations for specific organizational requirements. Combined with advanced features such as managed tiered KV cache, intelligent routing, and flexible instance type deployment, this simplified installation experience makes high-performance inference accessible to teams of all sizes, from startups to large enterprises.

This strategic enhancement by AWS is set to accelerate the adoption of advanced AI models in production, enabling businesses to unlock new capabilities and drive digital transformation. Organizations are encouraged to explore these new capabilities today by creating a new HyperPod cluster with the Inference Operator pre-installed, or by adding it to their existing clusters with a single click through the SageMaker console. For detailed installation instructions and troubleshooting guides, comprehensive documentation is available to assist users in leveraging the full power of this simplified, yet highly capable, AI inference platform.