Mux Launches Mux Robots to Integrate Multimodal AI Workflows into Video Infrastructure

Mux, a leading provider of video infrastructure for developers, has launched Mux Robots, a suite of integrated artificial intelligence workflows designed to transform video assets into searchable, structured data. The launch signals a strategic shift in the video technology landscape, from traditional delivery and playback toward a model in which video serves as a primary source of contextual information and business intelligence. By hosting AI workflows directly on its infrastructure, Mux aims to alleviate the operational burdens associated with model evaluation, prompt engineering, and the orchestration of complex multimodal AI systems.

The introduction of Mux Robots comes as developers increasingly look for ways to use generative AI and multimodal models to enhance user experiences. While Mux has historically focused on the "machinery" of video (delivery, playback, and analytics), the company is now positioning itself to address the growing demand for "video-as-data." This shift allows developers to do more than stream content: they can extract meaningful insights, automate moderation, and enrich content through a unified API.

The Evolution of Video Infrastructure

Since its inception, Mux has built a reputation on providing robust "primitives" for video developers. Features such as instant thumbnails, storyboards, and automated captions were originally conceived to improve the viewer’s playback experience. However, with the advent of powerful AI models like GPT-4o, Gemini, and Claude, these primitives have taken on a new role as essential data inputs for machine learning workflows.

The launch of Mux Robots is the logical progression of the company’s recent efforts in the AI space. Several months ago, Mux released @mux/ai, an open-source library designed to help developers run AI workflows on Mux assets using their own infrastructure. Mux Robots builds on this foundation by offering a fully managed service. For developers who require deep customization, the open-source library remains a viable path, but Mux Robots is intended for those who prioritize scalability and ease of use without the overhead of managing underlying servers or model lifecycles.

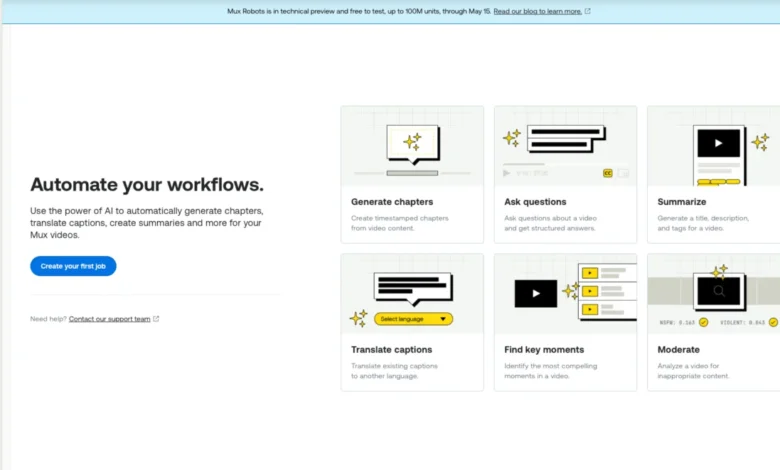

Core Capabilities and Initial Workflows

At launch, Mux Robots offers six primary workflows, each accessible through simple API calls or directly via the Mux Dashboard. These workflows are designed to address the most common challenges faced by content platforms and developers today.

Content Summarization and Tagging

The summarization workflow allows developers to automatically generate titles, descriptions, and tags for video assets. By analyzing the visual and auditory components of a video, the AI can produce structured results that improve searchability and SEO. This reduces the manual labor traditionally required of content managers and creators to catalog large libraries of video content.

Automated Content Moderation

Moderation remains one of the most significant hurdles for platforms hosting user-generated content. Mux Robots provides automated moderation signals, identifying potentially sensitive or inappropriate content within a video. This allows platforms to scale their safety efforts without a proportional increase in human moderation costs.
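How a platform might act on such signals can be sketched in a few lines. The example below is illustrative only: it assumes the signals arrive as per-category scores between 0 and 1, and the category names, thresholds, and the `triage` helper are hypothetical rather than part of any documented Mux Robots response.

```python
from dataclasses import dataclass

# Hypothetical review thresholds per category; a real platform would tune
# these to its own policy. These names are not taken from the Mux API.
THRESHOLDS = {"sexual": 0.7, "violence": 0.8}


@dataclass(frozen=True)
class Decision:
    action: str                # "approve" or "review"
    flagged: tuple[str, ...]   # categories whose score met the threshold


def triage(scores: dict[str, float],
           thresholds: dict[str, float] = THRESHOLDS) -> Decision:
    """Route a video to human review if any category score meets its threshold."""
    flagged = tuple(sorted(
        category
        for category, limit in thresholds.items()
        if scores.get(category, 0.0) >= limit
    ))
    return Decision("review" if flagged else "approve", flagged)
```

In this arrangement human moderators see only the flagged videos, which is one way the cost of review can grow more slowly than upload volume.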

Multilingual Caption Translation

As digital content becomes increasingly global, accessibility and localization are paramount. The translation workflow enables the conversion of captions into multiple languages, ensuring that content is accessible to a worldwide audience. This feature leverages the transcription primitives already available in Mux Video to provide a seamless transition from speech-to-text to translated output.

Chapter Generation and Key Moment Identification

To improve user engagement, Mux Robots can automatically identify key moments and generate chapter markers. This allows viewers to navigate long-form content more efficiently, a feature that has become standard on major platforms like YouTube. By providing these timestamps as structured data, Mux enables developers to build more interactive and user-friendly video players.
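As one illustration of what a player integration could do with such timestamps, the sketch below converts a list of chapters into a WebVTT chapters track, a format many web players can load. The input shape (a `start` time in seconds and a `title`) is an assumption made for this example, not the documented Mux Robots output.

```python
def format_timestamp(seconds: float) -> str:
    """Format seconds as a WebVTT timestamp (hh:mm:ss.ttt)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def chapters_to_webvtt(chapters: list[dict], duration: float) -> str:
    """Build a WebVTT chapters file from chapter start times and titles.

    Each chapter ends where the next one starts; the last chapter ends at
    the video duration. The {"start", "title"} shape is hypothetical.
    """
    ordered = sorted(chapters, key=lambda c: c["start"])
    lines = ["WEBVTT", ""]
    for i, chapter in enumerate(ordered):
        end = ordered[i + 1]["start"] if i + 1 < len(ordered) else duration
        lines.append(f"{format_timestamp(chapter['start'])} --> {format_timestamp(end)}")
        lines.append(chapter["title"])
        lines.append("")  # blank line separates cues
    return "\n".join(lines)
```

Calling `chapters_to_webvtt([{"start": 0, "title": "Intro"}, {"start": 75.5, "title": "Demo"}], 120)` yields two cues, the first running from 00:00:00.000 to 00:01:15.500.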

Interactive Q&A (Ask Questions)

Perhaps the most forward-looking feature is the ability to "ask questions" of a video asset. This workflow allows developers to query the content of a video, retrieving specific information or context without having to watch the entire clip. This has significant implications for educational platforms, corporate training, and archival research.

Strategic Technical Infrastructure

One of the primary value propositions of Mux Robots is its handling of the "operational work" that often stymies AI implementation. Building a reliable AI workflow involves more than just sending data to an LLM; it requires constant model evaluation, prompt tuning, and sophisticated orchestration to ensure reliability over time.

Mux Robots manages the infrastructure required to keep these workflows performant. This includes selecting the most efficient model for each task (balancing cost and accuracy) and optimizing the prompts used to generate results. By abstracting this complexity, Mux allows developers to focus on the application layer rather than the intricacies of AI infrastructure.

Furthermore, the system is built to be "AI-ready." Mux has indicated that it is continuing to invest in underlying primitives within Mux Video and Mux Data to provide richer inputs for Mux Robots. As model capabilities evolve, Mux can update the backend of its workflows without requiring developers to change their integration code.

Chronology of Development and Technical Preview

The rollout of Mux Robots follows a specific timeline designed to gather developer feedback and refine the product under real-world conditions.

- Initial Research and Open Source: The project began with the development of the @mux/ai open-source library, which tested the feasibility of running complex AI tasks on video assets.

- Technical Preview Launch: Mux Robots is currently in a "Technical Preview" phase. During this period, the company is actively seeking feedback on the initial six workflows and the user experience of the dashboard.

- Free Usage Period: To encourage adoption and testing, Mux has announced that usage of Mux Robots will be free through May 15, 2026, subject to a limit of 100 million "units."

- Future Transition: Following the technical preview, Mux intends to transition to a paid model based on these units. While exact pricing has not yet been finalized, units are accrued based on the duration of the video asset and the complexity of the specific AI workflow performed.
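Because units depend on both asset duration and workflow complexity, teams may want a rough way to track consumption against the free allowance. The sketch below is purely illustrative: Mux has not published the unit formula, so the per-workflow weights and the "duration times weight" rule are placeholders invented for this example. Only the 100 million unit limit comes from the announcement.

```python
import math

# Free-usage limit stated in the announcement.
FREE_PREVIEW_UNITS = 100_000_000

# Placeholder complexity weights. These are NOT Mux's pricing; the real
# unit formula has not been published.
HYPOTHETICAL_WEIGHTS = {"moderate": 1, "summarize": 2, "translate": 3}


def estimate_units(duration_seconds: float, workflow: str,
                   weights: dict[str, int] = HYPOTHETICAL_WEIGHTS) -> int:
    """Estimate units as whole seconds of video times a workflow weight."""
    return math.ceil(duration_seconds) * weights[workflow]


def remaining_units(jobs: list[tuple[float, str]],
                    budget: int = FREE_PREVIEW_UNITS) -> int:
    """Subtract the estimated units for (duration, workflow) jobs from a budget."""
    return budget - sum(estimate_units(d, w) for d, w in jobs)
```

Once actual pricing is announced, the weights and formula would need to be replaced with the published values.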

Data Privacy and Ethical Considerations

At a time when data privacy is a primary concern for enterprises, Mux has taken a firm stance on data handling. The company has explicitly stated that it does not use customer data to train its own models or those of its AI providers. Mux Robots is strictly an inference-based service. This commitment is crucial for organizations in regulated industries, such as healthcare or finance, that may be hesitant to use AI services that could ingest sensitive proprietary information into a public training set.

Broader Industry Impact and Future Outlook

The launch of Mux Robots reflects a broader trend in the technology industry: the "intelligentization" of cloud infrastructure. Just as cloud providers moved from offering raw compute (IaaS) to managed platforms (PaaS), video infrastructure is moving toward "Intelligence-as-a-Service."

The Rise of the "Directives" Feature

Looking ahead, Mux has teased a forthcoming feature called "Directives." This is described as a "set-it-and-forget-it" automation tool. Instead of triggering AI jobs manually via the API for each video, developers will be able to define rules at the organizational level. For example, a directive could specify that every video uploaded to a certain environment must automatically undergo moderation and summarization. This creates a seamless, automated lifecycle for video assets, further reducing the friction between content upload and content intelligence.
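Since Directives has not shipped, its configuration format is unknown. The following is only a conceptual sketch of rule-based automation as described above: the `Directive` type, its fields, and the workflow names are hypothetical and do not reflect a Mux API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Directive:
    """Hypothetical rule: run these workflows for uploads in an environment."""
    environment: str
    workflows: tuple[str, ...]


def workflows_for_upload(environment: str,
                         directives: list[Directive]) -> list[str]:
    """Collect the workflows to run for a new upload, without duplicates,
    preserving the order in which the rules list them."""
    planned: list[str] = []
    for directive in directives:
        if directive.environment != environment:
            continue
        for workflow in directive.workflows:
            if workflow not in planned:
                planned.append(workflow)
    return planned


rules = [
    Directive("production", ("moderation", "summarization")),
    Directive("production", ("summarization", "chapters")),
    Directive("staging", ("moderation",)),
]
```

With these rules, an upload to the "production" environment would be queued for moderation, summarization, and chapters, while an upload to an environment with no matching rule would trigger nothing.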

Competitive Landscape

Mux’s move into AI puts it in more direct competition with hyperscale cloud providers like AWS (with its Rekognition and Elemental services) and Google Cloud (with its Video Intelligence API). However, Mux’s advantage lies in its developer-first approach and its deep integration with existing video streaming workflows. By providing a unified platform for both delivery and intelligence, Mux reduces the "integration tax" that developers often pay when stitching together services from multiple vendors.

Implications for Content Creators and Platforms

For smaller startups and independent developers, Mux Robots levels the playing field. Previously, building a system that could automatically summarize, moderate, and translate video required a dedicated team of machine learning engineers. Now, these capabilities are available via a single API call, allowing smaller teams to build sophisticated, AI-powered platforms that can compete with industry giants.

Conclusion and Implementation

Mux Robots is currently available to all Mux Video customers on "Pay as you go" plans or higher. To begin using the service, administrators must enable the Mux Robots section within the Mux Dashboard and accept the AI Services Addendum. Once enabled, developers can run jobs immediately through the dashboard or generate new API keys with the necessary permissions to integrate the service into their existing tech stacks.

As Mux iterates on this technical preview, the company expects to expand the library of available workflows based on customer demand. The ultimate goal is to make video as programmable for AI as it has become for streaming, effectively turning every frame of video into a valuable data point for business logic and user experience. By bridging the gap between video infrastructure and artificial intelligence, Mux is setting a new standard for what developers should expect from a video platform in the AI era.